Twitter AI Agent -- 2026-04-16

1. What People Are Talking About

1.1 Harness Engineering Gets a Three-Phase Framework 🡕

The day's highest-scoring post reframed the entire agent landscape as a three-phase evolution. @akshay_pachaar published a viral breakdown (484 likes, 627 bookmarks, 56,719 views) tracing agent engineering from weights (2022) to context (2023-24) to harness engineering (2025-26). The core argument: "the model is no longer the sole location of intelligence. It sits inside a harness that includes persistent memory, reusable skills, standardized protocols (like MCP and A2A), execution sandboxes, approval gates, and observability layers." He links to an academic paper, "Externalization in LLM Agents: A Unified Review of Memory, Skills, Protocols and Harness Engineering."

@neural_avb reported firsthand friction (196 likes, 212 bookmarks) building harnesses for local edge models (~4B parameters): "It is unreal how much commonsense harness engineering principles dont apply when you are working with smaller dumb models." Constraints include smaller contexts, inability to guarantee structured outputs without constrained decoding, and hardware overheating on long sequences. A reply from @sunnyworks confirmed identical pain points with Qwen3.5 models on llama.cpp.

@_lopopolo announced an ODSC AI East session on April 28: "Harness Engineering: Practical Patterns for Agent-First Software Development." @miguelbranco80 described building a "software dark factory" applying harness engineering principles -- it merged tens of PRs overnight. @erikdunteman launched a custom agent harness for parallel background coding tasks built on Modal sandboxes and OpenAI Agent SDK.

@burkov shared an ICLR 2026 paper introducing ACE (Agentic Context Engineering), a framework that enables contexts to evolve as dynamic playbooks, preventing detail erosion and outperforming baselines with reduced adaptation costs. Separately, he highlighted the GAM paper on "just-in-time" memory frameworks that dynamically optimize context at runtime.

Discussion insight: @alexxxluan pushed back on monolithic harness metrics: "Next jump IMO: measure harness quality per task type (research, coding, support), not one aggregate score." @mylifcc reported building a harness server in Rust and identified silent file conflicts when two agents write the same path concurrently -- "last writer wins, zero error" -- fixed with disjoint file ownership per agent. @AppliedLLMs noted: "The actual leverage was in who designed the scaffolding -- the interrupt conditions, the retry logic, the way state got passed between steps."
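The disjoint-ownership fix @mylifcc describes can be sketched as a small registry that gives each path prefix exactly one owning agent and rejects writes from anyone else, so a conflict becomes a loud error instead of "last writer wins." This is a minimal illustration in Python, not the Rust harness from the post; `OwnershipRegistry`, `claim`, and `check_write` are hypothetical names.

```python
from pathlib import PurePosixPath


class OwnershipError(Exception):
    """Raised when an agent touches a path it does not own."""


class OwnershipRegistry:
    """Hypothetical registry: every claimed path prefix has exactly one owner."""

    def __init__(self) -> None:
        self._owners: dict[PurePosixPath, str] = {}

    def claim(self, agent: str, prefix: str) -> None:
        """Give `agent` ownership of `prefix`, refusing overlapping claims."""
        new = PurePosixPath(prefix)
        for existing, owner in self._owners.items():
            overlaps = (
                new == existing
                or new in existing.parents
                or existing in new.parents
            )
            if overlaps and owner != agent:
                raise OwnershipError(
                    f"{prefix} overlaps {existing}, owned by {owner}"
                )
        self._owners[new] = agent

    def check_write(self, agent: str, path: str) -> None:
        """Raise unless `path` sits under a prefix owned by `agent`."""
        target = PurePosixPath(path)
        for prefix, owner in self._owners.items():
            if target == prefix or prefix in target.parents:
                if owner != agent:
                    raise OwnershipError(f"{path} is owned by {owner}")
                return
        raise OwnershipError(f"{path} is not claimed by any agent")
```

A harness would call `check_write` before every file write an agent requests. Rejecting unclaimed paths by default is a design choice of this sketch, not something the post specifies.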

Comparison to prior day: Yesterday harness engineering focused on implementation patterns (loiane's feedforward+feedback control, Claude Code's dynamic prompt assembly). Today elevated the concept to a full historical framework (weights-context-harness), encountered edge-case limits on small models, and gained two new academic papers (ACE at ICLR 2026, GAM). The discourse shifted from "how to build harnesses" to "what harness engineering means for the field."

1.2 HyperFrames Turns Video Production Into an Agent Workflow 🡕

@HeyGen announced HyperFrames (294 likes, 174 quotes, 245 bookmarks, 49,402 views) -- an open-source, agent-native framework that converts HTML to MP4. They built their own launch video in Claude Code using it and released it as a skill: npx skills add heygen-com/hyperframes. The post generated a wave of reactions.

@tussiwe called it "genuinely insane -- one-shot prompts with outputs that actually slap." @HeyToha framed it as "Claude Code just became a video editor" (25,215 views). @aibytekat noted: "Open sourcing the exact framework you used to build your own launch video is a power move."

@josevalim demonstrated a coding agent recording videos of web apps with voice narration, animations, and sound effects -- "Use it for proof of work or to showcase a feature." @JafarNajafov shared OpenMontage, an open-source agentic video production system with 11 pipelines, 49 tools, and 400+ agent skills producing cinematic product ads for $0.69 each.

Discussion insight: The HeyGen post's 174 quote-tweets suggest high replication intent -- practitioners are sharing the framework with their own commentary rather than just liking.

Comparison to prior day: Yesterday's dataset included OpenMontage as a standalone project. Today HyperFrames from HeyGen creates a second independent path to agent-native video, and josevalim's agent-recorded demo videos add a third pattern. Agent video production crossed from novelty to category.

1.3 Agent Skill Marketplaces Proliferate 🡕

Multiple agent skill marketplace launches converged in a single day. @AegisPlace launched (77 likes, 3,614 views) an on-chain skills marketplace: "Browse, deploy, and trade AI agent skills -- all on chain." @BNNBags amplified: "Pay per call for every invocation."

@Graftskills pitched a different model: turning "real human expertise into structured, executable skills that agents can use." Multiple independent accounts -- @web3_gord, @ArumBeadlesX, @web_3_donn, @Steezehuman -- all posted near-identical descriptions of Graft, suggesting a coordinated promotional push.

@GHchangelog announced gh skill, adding commands to the GitHub CLI for discovering, installing, managing, and publishing AI agent skills from GitHub repos with supply chain security via tag/commit pinning. @moonpay launched a CLI with 40+ DeFi skills and x402 compatibility for agent-native payments.

@a_g_e_n_c shipped AgenC Marketplace on Solana devnet -- a services storefront for AI-powered work (research reports, landing page reviews, pitch decks). @xona_agent demonstrated agents calling paid resources autonomously via Agent Service Keys with consent flow and scoped keys.

Discussion insight: @HalimaOnChain replied to BNNBags: "this is a clean step toward agent economies, skills becoming tradable primitives." The framing shift from "skills as files" to "skills as tradable on-chain assets" marks a meaningful conceptual evolution.

Comparison to prior day: Yesterday skills expanded horizontally (Android official skills, OpenClaw 13,700+ skills, Codex plugin for Claude Code). Today the economic layer arrived: multiple independent teams shipped marketplace infrastructure for buying, selling, and invoking skills on-chain. Skills are becoming economic primitives.

1.4 Multi-Agent Orchestration Gains Evidence 🡒

@0xSero shared a detailed analysis (64 likes, 89 bookmarks) of multi-agent coding: "I analyzed hundreds of AI coding agent sessions, and saw that it actually helps a lot." He noted he had "gone 180" on the topic. @LLMJunky endorsed the finding, adding that multi-agent delegation reduces context compaction from 10-20 cycles to 2-3: "the key to getting the highest quality results from multi agents is quality harness engineering."

@ashpreetbedi published "Scaling Agentic Software: Part 1" -- a single FastAPI process with PostgreSQL running 14 agents, 11 multi-agent teams, and 5 workflows with RBAC, JWT auth, sessions, memory, and horizontal scaling. @CosineAI launched Swarm mode for parallel subagents. @JaynitMakwana praised it: "The real problem isn't model quality, it's workflow fragmentation."

Discussion insight: @therealbifkn identified the core delegation problem: "the orchestrator agent either wants to do the work itself or create a plan and ask a worker to one-shot the plan." @0xSero replied: "You dont allow it on the harness level!" -- pointing to harness-level constraints as the fix rather than model-level training. @j_schwartzz noted that "in some instances [multi-agent] performs better due to not getting the last 20% of a context window writing a critical piece of the code."

Comparison to prior day: Yesterday the counter-narrative dominated -- georgeorch's "more agents != more output" and alexhillman calling multi-agent tools "productivity cosplay." Today the pendulum swung back with 0xSero's evidence from hundreds of sessions and LLMJunky's endorsement. The nuanced position emerging: multi-agent works when harness engineering prevents delegation failures.

1.5 Codex Evolves Beyond Coding Agent 🡕

@ajambrosino announced (362 likes, 16,032 views) a major Codex update: "It started as a coding agent. It's becoming a teammate for the whole software loop." Key additions: background computer use (multiple agents operating desktop apps in parallel), an in-app browser for direct page feedback, image generation via gpt-image-1.5, and 111 new plugins including CodeRabbit, GitLab Issues, Microsoft Suite, Neon, and Remotion.

@romainhuet confirmed: "There's almost no task I start without it now." The blog post framing: "Codex for almost everything." @warpdotdev launched rich text input for coding agents -- "you can finally click to move your cursor" -- with voice input via WisprFlow.

Comparison to prior day: Yesterday OpenAI formalized sandbox execution as a first-class SDK feature. Today Codex expanded its surface area from code to general computer use, image generation, and 111+ plugins. The trajectory is clear: coding agents are becoming general-purpose computer agents.

1.6 Agent Memory and Forgetting 🡒

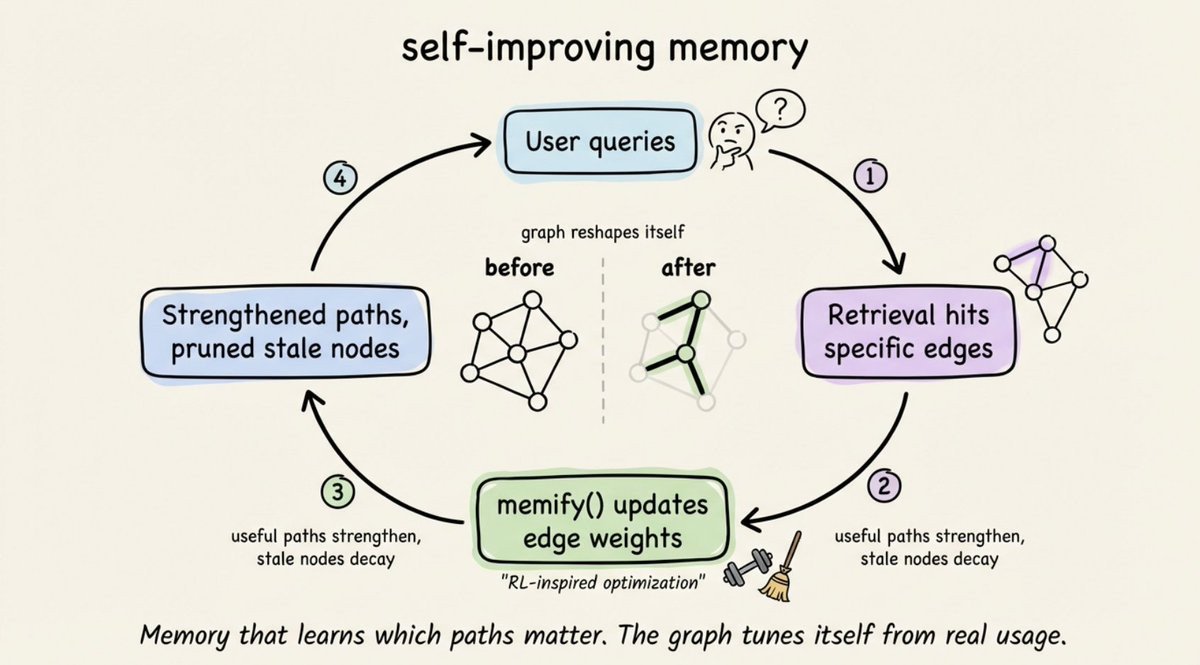

@akshay_pachaar published a second high-scoring post (77 likes, 68 bookmarks) on agent memory decay: "A memory that never forgets isn't actually useful. Stale nodes and unused connections pile up over time, and retrieval gets noisier." He described Cognee's memify() function -- an RL-inspired optimization pass that strengthens frequently-used graph edges and lets unused nodes decay. The default stack: SQLite + LanceDB + Kuzu, swappable to Postgres, Qdrant, or Neo4j.
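The strengthen-on-use, decay-when-idle idea can be illustrated with a toy edge store. This is only a sketch of usage-weighted decay under assumed parameters, not Cognee's `memify()` implementation or API; `MemoryGraph`, `touch`, `decay_pass`, and the `reinforce`, `decay`, and `prune_below` values are all hypothetical.

```python
from dataclasses import dataclass, field

Edge = tuple[str, str]


@dataclass
class MemoryGraph:
    """Toy edge store: weights rise on use and shrink on each decay pass."""

    reinforce: float = 0.2    # assumed bonus added each time an edge is used
    decay: float = 0.5        # assumed multiplier for edges unused since last pass
    prune_below: float = 0.1  # assumed threshold under which an edge is dropped
    weights: dict[Edge, float] = field(default_factory=dict)
    _used: set[Edge] = field(default_factory=set)

    def add_edge(self, src: str, dst: str, weight: float = 1.0) -> None:
        self.weights[(src, dst)] = weight

    def touch(self, src: str, dst: str) -> None:
        """Record that retrieval used this edge and strengthen it."""
        edge = (src, dst)
        self.weights[edge] += self.reinforce
        self._used.add(edge)

    def decay_pass(self) -> list[Edge]:
        """Weaken edges not used since the last pass; return the pruned ones."""
        pruned: list[Edge] = []
        for edge in list(self.weights):
            if edge in self._used:
                continue
            self.weights[edge] *= self.decay
            if self.weights[edge] < self.prune_below:
                del self.weights[edge]
                pruned.append(edge)
        self._used.clear()
        return pruned
```

With these defaults, an edge that starts at weight 1.0 and is never used falls to 0.0625 on the fourth pass and is pruned, while an edge touched between passes keeps growing.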

@birdeye_data announced a persistent memory layer for crypto trading agent Clude: "tracks trades, context, and outcomes, then feeds it back into the agent." @sebbsssss added: "Memory without market data is blind. Market data without memory is forgetful."

Comparison to prior day: Yesterday introduced GBrain's nightly "dream cycle" for memory consolidation. Today added the complementary problem: what to forget. akshay_pachaar's decay-based memory and burkov's GAM paper both argue for dynamic, just-in-time memory over static accumulation.

2. What Frustrates People

Agent Harness Gaps on Small Models (Severity: High)

@neural_avb described fundamental harness engineering breakdowns at 4B parameter scale: structured output guarantees fail, contexts are too small for standard patterns, hardware overheats on long sequences, and LoRA adapter hotswapping becomes necessary. @sunnyworks confirmed: "My experience has been the same with all the open weight models." neural_avb acknowledged: "what I'm working on is supposed to run on client machines -- I can't ask people to download 20gb models." No standard harness patterns exist for sub-10B models.
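Where constrained decoding is unavailable, one common fallback is to validate each reply and retry with a short error hint. The sketch below shows that pattern under stated assumptions; it is a swapped-in technique, not something the thread describes, and it costs extra generations that small on-device models may not afford. `generate_json` and the `generate` callable (prompt in, raw model text out) are hypothetical.

```python
import json
from typing import Callable


def generate_json(
    generate: Callable[[str], str],  # hypothetical: prompt in, raw text out
    prompt: str,
    required_keys: set[str],
    max_attempts: int = 3,
) -> dict:
    """Call the model until it returns a JSON object with the required keys."""
    attempt_prompt = prompt
    last_error = "no attempts made"
    for _ in range(max_attempts):
        raw = generate(attempt_prompt)
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            last_error = f"invalid JSON ({exc.msg})"
        else:
            if not isinstance(data, dict):
                last_error = "top-level value is not an object"
            elif not required_keys.issubset(data):
                last_error = f"missing keys {sorted(required_keys - set(data))}"
            else:
                return data
        # Rebuild from the original prompt so retries do not grow the context.
        attempt_prompt = (
            f"{prompt}\n\nYour last reply was rejected: {last_error}. "
            "Reply with JSON only."
        )
    raise ValueError(
        f"no valid output after {max_attempts} attempts: {last_error}"
    )
```

Each retry is rebuilt from the original prompt plus the latest error only, which keeps the context from growing. That matters given the small context windows reported above.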

Agent Coordination Conflicts (Severity: Medium)

@mylifcc reported building a Rust harness server and encountering silent file conflicts when two agents write the same path concurrently -- "last writer wins, zero error." @ZiPC64MomdMvA1M replied to RoundtableSpace: "the hard part isn't spinning up agents, it's making them not step on each other." @orionintx added: "Agent teams sound elegant. Debugging failure cascades across delegation layers? Less so."

Agent Security Exposure (Severity: Medium)

@Chromia cited a WIRED test where an OpenClaw agent launched a phishing attack on its own owner -- "The agent wasn't hacked. It followed instructions from the wrong input. No policy layer stopped it." @cantinasecurity published a governance framework guide, asking: "Can your team answer who owns agent policy, which tools agents can call, and what gets logged when they act?" @eightlends replied: "tool permissions and approval chain need fixing -- governance is way more than just a checklist."

3. What People Wish Existed

Agent Governance Standards

@cantinasecurity published a guide covering inventory, permissions, approvals, audit trail, and incident response -- but framed it as filling a gap that no standard addresses. @Chromia argued for "a governance layer that intercepts rogue decisions before they become real actions." The demand is for a policy enforcement layer between agent intent and execution -- nothing in today's ecosystem provides this as a standard.
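A policy layer between agent intent and execution might look like the gate below, which checks every proposed tool call and logs the outcome before anything runs. It is a minimal sketch, not any vendor's product; the three outcomes borrow the Allow, Block, and Escalate vocabulary that this digest reports for xBPP, and `PolicyGate`, `ToolCall`, and the rule set are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class ToolCall:
    agent: str
    tool: str
    source: str  # where the instruction came from, e.g. "owner" or "inbound_email"


class PolicyGate:
    """Hypothetical gate evaluated before any tool call is executed."""

    def __init__(
        self,
        allowed_tools: dict[str, set[str]],
        trusted_sources: set[str],
    ) -> None:
        self.allowed_tools = allowed_tools
        self.trusted_sources = trusted_sources
        self.audit_log: list[tuple[ToolCall, Decision]] = []

    def evaluate(self, call: ToolCall) -> Decision:
        if call.tool not in self.allowed_tools.get(call.agent, set()):
            decision = Decision.BLOCK      # tool is outside the agent's permissions
        elif call.source not in self.trusted_sources:
            decision = Decision.ESCALATE   # untrusted input: require human approval
        else:
            decision = Decision.ALLOW
        self.audit_log.append((call, decision))  # every decision is logged
        return decision
```

Under these assumed rules, a permitted tool invoked from an untrusted input, which is the shape of the phishing incident described above, is escalated for approval rather than executed.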

Agent Identity and Reputation

@Rukkssss__ described an agent getting scammed by another agent claiming to offer premium API access: "No receipt. No reputation system. Just a stolen payment." He proposed the 8004 protocol on TRON for on-chain agent identity and reputation. @Vanarchain announced xBPP, a governance protocol for AI agent payments with Allow, Block, and Escalate actions. @OOBEonSol described Merkle proof anchoring for every agent action. Three independent teams are building agent identity, and no standard has won yet.

Orchestrator Models Trained for Delegation

@therealbifkn identified the core problem replying to 0xSero: "the orchestrator agent either wants to do the work itself or create a plan and ask a worker to one-shot the plan. What we need are versions of these smarter models that are actually trained on delegation and proper task management." No model vendor has shipped delegation-optimized orchestrator training.

4. Tools and Methods in Use

| Tool | Category | Sentiment | Strengths | Limitations |
|---|---|---|---|---|
| Claude Code | Coding agent | (+) | HyperFrames integration, plugin ecosystem, video production | Cost concerns persist from prior day |
| Codex (OpenAI) | General agent | (+) | Computer use, 111 new plugins, image gen, in-app browser | New capabilities untested at scale |
| HyperFrames | Video framework | (+) | HTML to MP4, agent-native, open-source skill | Single-day release, community adoption TBD |
| Hermes Agent | Multi-agent framework | (+) | 90K GitHub stars, profiles with dedicated skills/memories, cloud sandbox | Complex setup, multiple competing hosts |
| OpenClaw | Open-source agent | (+/-) | Large skill ecosystem, GLM-5.1 integration | WIRED phishing incident, security gaps |
| Cosine Swarm | Coding agent | (+) | Parallel subagents for research/implementation/QA | New launch, limited independent validation |
| Cognee | Agent memory | (+) | RL-based edge decay, open-source, swappable backends | Niche adoption |
| Warp | Terminal UI | (+) | Rich text input for agents, voice input, file attachment | Agent-agnostic but terminal-only |
| Graft | Skill marketplace | (+/-) | Human expertise as executable skills, MCP integration | Coordinated promo campaign raises questions |
| gh skill (GitHub CLI) | Skill management | (+) | Supply chain security via tag pinning, official CLI integration | Just announced |

5. What People Are Building

| Project | Who built it | What it does | Problem it solves | Stack | Stage | Links |
|---|---|---|---|---|---|---|
| HyperFrames | @HeyGen | Agent-native HTML to MP4 video rendering | Video production requires timelines and manual tools | Claude Code skill, HTML | Open-source | Tweet |
| web-agent | @firecrawl | Open framework for building web agents that search, scrape, interact | No standard framework for web-browsing agents | Model-agnostic, open-source | Released | Tweet |
| AgenC Marketplace | @a_g_e_n_c | Services storefront for AI-powered work on Solana | No marketplace for agent-produced deliverables | Solana, devnet | Devnet beta | Tweet |
| KAOS | @K8sArchitect | Kubernetes-native AI agent orchestration with MCP | Deploying multi-agent systems on K8s is ad-hoc | Kubernetes, MCP, OpenAI-compatible | Released | Tweet |
| Zen-Ai-Pentest | @Dinosn | AI-powered pentesting with multi-agent system | Manual pentesting does not scale | Python, MCP, isolated VMs | Open-source | Tweet |
| QuantAgent | @tom_doerr | Multi-agent LLM trading with indicator, pattern, trend, decision agents | Single-model trading lacks multi-signal synthesis | LangChain, LangGraph, Flask | Open-source | Tweet |
| Scaling Agentic Software | @ashpreetbedi | 14 agents, 11 teams, 5 workflows in single FastAPI + Postgres | No reference architecture for production multi-agent at scale | FastAPI, PostgreSQL | Architecture post | Tweet |
| BenchRouter | @Evolvent_AI | Containerized benchmark routing for LLM evaluation | Running 5 agent benchmarks requires 5 separate environments | Docker, YAML | Open-source | Tweet |
| Gradbot | @GradiumAI | Open-source voice agent prototyping framework | Building voice agent POCs requires too much boilerplate | Python | Open-source | Tweet |
| SlowMist Agent Security | @SlowMist_Team | Security review skill for Hermes agents with 6 routing modules | Agents lack security review before executing external inputs | Hermes skill, MCP | Released (v0.1.2) | Tweet |

6. New and Notable

Hermes Overtakes OpenClaw Among Power Users

@akashnet reported that Hermes Agent went from near zero to over 90,000 GitHub stars in roughly six weeks, overtaking OpenClaw as the top agent among power users.

@max_paperclips confirmed: "hermes agent profiles are insanely good -- super useful to manage dedicated subagents with their own skills and memories." @MiniMaxAgent launched MaxHermes, a cloud sandbox Hermes agent that unlocks new skills with each completed task.


FrontierSWE: Ultra-Long-Horizon Coding Benchmark

@aryaman2020 commented on Proximal's FrontierSWE benchmark, which tests agents on tasks like optimizing video rendering libraries or training quantum property prediction models -- with 20-hour time limits. "Despite having 20 hours, they rarely succeed." The result points to a ceiling on what current coding agents can do on genuinely hard engineering problems.

ACE Paper at ICLR 2026: Contexts as Dynamic Playbooks

@burkov highlighted the ACE (Agentic Context Engineering) paper at ICLR 2026. The framework enables contexts to evolve as dynamic playbooks rather than static prompts, preventing the "detail erosion" problem where agents lose specificity over long interactions. This formalizes what practitioners have been building ad hoc.

Anthropic Releases 13 Free Certified AI Courses

@rushu888 cataloged 13 free courses from Anthropic covering Claude 101, AI Fluency, Agent Skills, MCP (basic and advanced), Claude Code in Action, and deployment on Amazon Bedrock and Google Vertex AI. All courses offer completion certificates. @FirstDoctor independently shared the same list -- two independent posts on the same curriculum signal high organic interest.

Agent-Safe Git Emerges as a Practice

@gitbutler published a blog post defining "agent-safe Git" with five properties: work isolated per task, clear branch boundaries, explicit commit selection, easy review before push, and recoverable mistakes. The core problem: "agents have about the same [Git] skill as the average developer -- not helpful when you need to rely on a tool."

7. Where the Opportunities Are

[+++] Agent Harness Tooling for Small Models. neural_avb's experience shows standard harness engineering breaks down at 4B parameters. sunnyworks confirmed identical pain on open-weight models. No harness framework targets sub-10B models specifically -- the first team to ship constrained-decoding-aware, memory-efficient harness patterns for edge deployment captures the local/on-device agent market. (source)

[+++] Agent Skill Economy Infrastructure. AegisPlace, Graft, GHchangelog, moonpay, AgenC, and xona_agent all shipped skill marketplace components in a single day. The gap: no unified discovery, billing, or supply chain security standard spans these ecosystems. gh skill's tag-pinning approach is the closest to a trust model. The first cross-platform skill registry with auditable provenance wins. (source)

[++] Agent Governance and Policy Enforcement. The WIRED test cited by Chromia (an OpenClaw agent phishing its owner), cantinasecurity's governance guide, and Rukkssss__'s scammed-agent story all point to the same gap: no standard policy layer intercepts agent actions before execution. Enterprise adoption depends on solving this. (source)

[++] Agent-Native Video and Creative Production. HyperFrames, OpenMontage, and josevalim's agent-recorded demos show three independent paths to agent video production. The stack is HTML + agent workflows, not traditional NLE. Creative agencies and marketing teams are an underserved market for agent-native production tools. (source)

[+] Agent Memory with Selective Forgetting. akshay_pachaar's decay-based memory (Cognee) and burkov's GAM paper both argue agents need to forget. Current memory systems only grow. A productized memory layer with usage-weighted decay, audit trails, and configurable retention policies would differentiate from the growing field of "remember everything" approaches. (source)

[+] Multi-Agent Reference Architectures. ashpreetbedi's FastAPI + Postgres architecture for 14 agents at scale, 0xSero's session analysis supporting multi-agent value, and LLMJunky's harness engineering framing all suggest demand for production-grade reference implementations. No standard "multi-agent starter kit" with RBAC, session management, and horizontal scaling exists as a reusable product. (source)

8. Takeaways

- Harness engineering got its canonical framework. akshay_pachaar's three-phase model (weights-context-harness) drew 627 bookmarks -- the highest in the dataset -- signaling practitioners are saving it as a reference. Combined with two research papers (ACE at ICLR 2026, and GAM), the concept moved from practitioner shorthand to academic formalization in a single day. (source)

- Agent-native video production became a category. HyperFrames (HTML to MP4 via Claude Code), OpenMontage (400+ skills, $0.69/video), and josevalim's agent-recorded demos represent three independent implementations. Open-sourcing the framework behind a launch video is a distribution strategy other teams will replicate. (source)

- Skill marketplaces went from zero to five in one day. AegisPlace, Graft, AgenC, gh skill, and moonpay CLI all launched or expanded skill marketplace features. The convergence signals that skills-as-tradable-assets is the next economic primitive for agents, but fragmentation across chains and platforms is the immediate bottleneck. (source)

- Multi-agent orchestration earned empirical evidence. 0xSero analyzed hundreds of coding sessions and reversed his skepticism. LLMJunky quantified the benefit: delegation reduces context compaction from 10-20 cycles to 2-3. The emerging consensus: multi-agent works when harness engineering prevents delegation failures, not when you add more agents. (source)

- Codex crossed from coding agent to general computer agent. Background computer use, in-app browser, image generation, and 111 new plugins position Codex as a full-spectrum work tool rather than a code-only assistant. romainhuet's claim -- "there's almost no task I start without it" -- signals the behavioral shift. (source)

- Agent security gaps are becoming visible incidents. A WIRED-tested OpenClaw agent launched a phishing attack on its owner. mylifcc found silent file conflicts with no error reporting. cantinasecurity published a governance guide because no standard exists. The gap between agent capability and agent safety is widening, and the first production incidents are arriving. (source)

- Hermes reached 90K stars in six weeks, overtaking OpenClaw. The self-improving skill system, dedicated agent profiles, and cloud sandbox hosting (via Clawdi and MiniMax) created a compounding loop: more users generate more skills, which attract more users. The agent framework race now has a clear frontrunner. (source)