Twitter AI Coding - 2026-04-13

1. What People Are Talking About

1.1 Claude Code Meets Google Antigravity: Integration Hype and Backlash (🡕)

The day's highest-scoring tweet came from @TawohAwa, who posted a step-by-step tutorial on combining Claude Code with Google Antigravity: "if you combine Claude code with Google Antigravity you can automate and build anything." The tutorial thread walks through downloading Antigravity, installing the Claude Code VS Code extension inside it, and activating the integration. The post drew 92 likes and 155 bookmarks -- the highest bookmark count in today's dataset, indicating strong save-for-reference behavior.

The enthusiasm did not go unchallenged. @pcshipp fired back with the day's most-replied-to post (54 replies): "Antigravity is one of the worst failed products created by Google. Even after making it free, it still can't compete with tools like Cursor, Codex, and Claude code. Quality >> Free." Meanwhile, @lMDU1zXxSEbWc5c reported that "google antigravity is down? no response!" -- a reliability issue that reinforces the quality complaints.

@alvinfoo mapped Google's broader AI ecosystem, listing 11 tools from Gemini to Veo to Antigravity, arguing "Google has the best ecosystem of AI tools, and it's not even close." An accompanying infographic details the full stack including Firebase Studio, Google App Builder, and Gemini integrations across Sheets and YouTube.

@xdadevelopers published a month-long comparison of Cursor, Claude Code, and Google Antigravity, declaring "a clear winner." The accompanying screenshot shows Claude Code's Cowork mode building a responsive Next.js portfolio site with live preview.

The split is clear: Antigravity's free pricing attracts tutorial creators and ecosystem advocates, but practitioners who have used alternatives see a quality gap that free pricing does not close. Yesterday's report noted consolidation around 2-3 paid subscriptions; today's data shows the free-tier competitor struggling to break into that stack.

1.2 OpenAI Codex Transforms into a Super App (🡕)

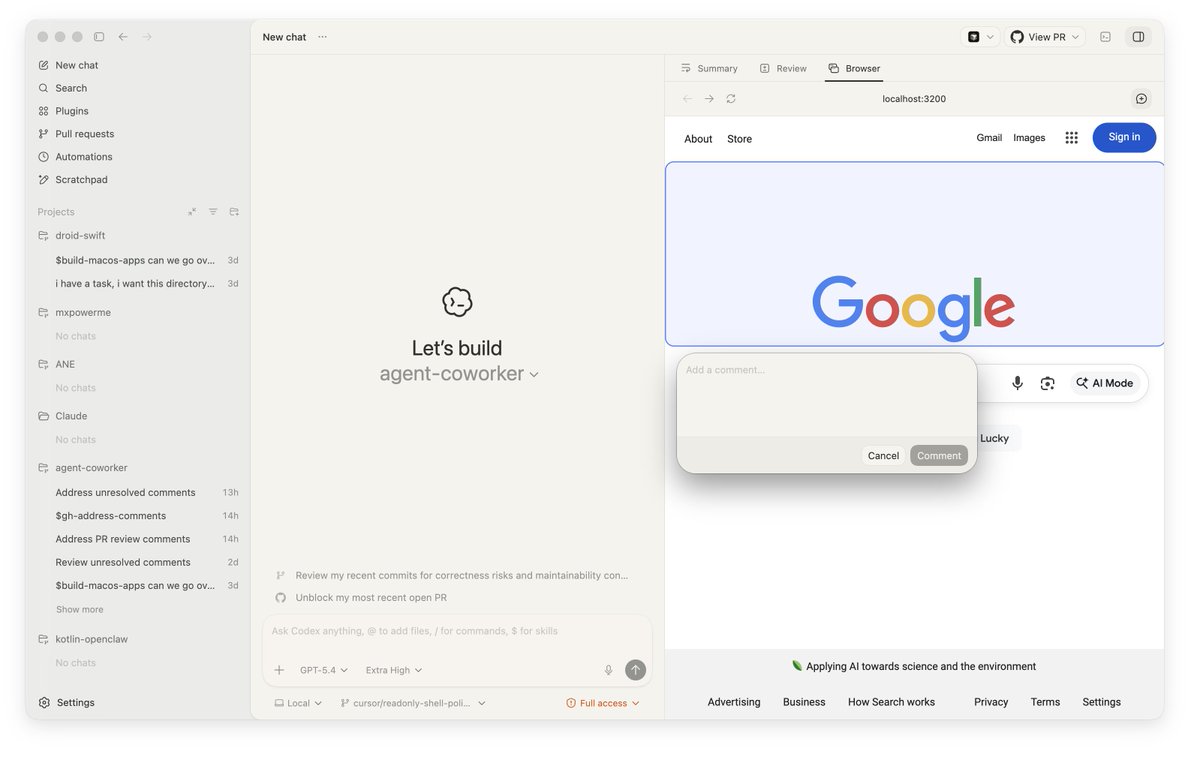

@chetaslua shared the first public screenshots of Codex's "Super App" transformation, drawing 185 likes and 19,339 views -- the highest view count of the day. The screenshots reveal a desktop application with a project sidebar (listing projects like "droid-swift," "agent-coworker," and "kotlin-openclaw"), a built-in browser panel, and agentic task prompts such as "Review my recent commits for correctness risks" and "Unblock my most recent open PR."

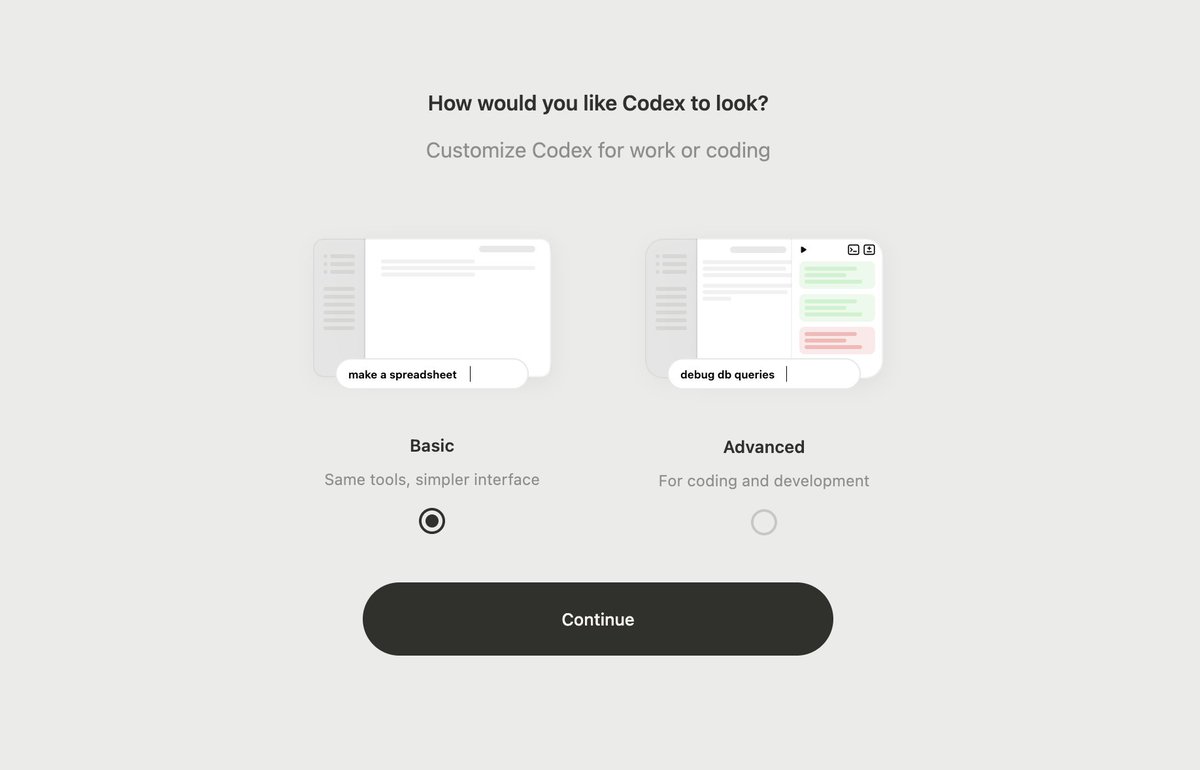

A second screenshot shows a new UI customization screen offering "Basic" (same tools, simpler interface) and "Advanced" (for coding and development) modes, signaling that OpenAI is segmenting the Codex experience by user expertise.

In the replies, @MichaelAluya3 raised a security concern: "don't ignore the Axios compromise news from this past weekend. OpenAI just rotated their security certificates due to a supply chain attack on their macOS signing process." This warning surfaced alongside the product enthusiasm, highlighting the tension between rapid feature shipping and supply chain security.

Yesterday's report flagged the desktop app race between Anthropic and OpenAI. Today's screenshots show Codex is not merely adding a GUI shell to a CLI tool; it is building a full desktop development environment with integrated browsing, project management, and the active model shown in the status bar (GPT-5.4 Extra High).

1.3 GitHub Copilot CLI Goes Remote and Mobile (🡕)

Two posts documented a significant expansion of GitHub Copilot CLI capabilities. @msdev demonstrated a cricket scoring app built in under 30 minutes using three Copilot CLI commands: /plan to scope, /autopilot to build, and /fleet to scale. The post drew 77 likes and 47 bookmarks.

Separately, @GHchangelog announced remote control CLI sessions in public preview. The changelog post details that copilot --remote streams CLI activity to GitHub in real time. Users can monitor and steer sessions from the web or mobile, including: sending mid-session steering messages, reviewing and modifying plans, switching between plan/interactive/autopilot modes, approving or denying permission requests, and responding to ask_user prompts. A /keep-alive command prevents machine sleep during long tasks. The feature is available on Android beta and iOS TestFlight.
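The changelog describes a remote client pushing steering messages, mode switches, and permission decisions into a running CLI session. The sketch below models only that control flow. It assumes a hypothetical in-memory event format: the event names `set_mode`, `steer`, and `permission` are illustrative, not GitHub's protocol, and the real transport between the CLI and GitHub is not described in the post.

```python
from collections import deque
from dataclasses import dataclass, field

# Modes named in the changelog; the event shapes below are hypothetical.
MODES = {"plan", "interactive", "autopilot"}


@dataclass
class SessionState:
    mode: str = "interactive"
    steering: list[str] = field(default_factory=list)         # queued steering messages
    pending: dict[str, str] = field(default_factory=dict)     # request_id -> action awaiting approval
    decisions: dict[str, bool] = field(default_factory=dict)  # request_id -> approved?


def apply_remote_event(state: SessionState, event: dict) -> None:
    """Apply one message sent from the web or mobile client to the local session."""
    kind = event["type"]
    if kind == "set_mode":
        if event["mode"] not in MODES:
            raise ValueError(f"unknown mode: {event['mode']}")
        state.mode = event["mode"]
    elif kind == "steer":
        state.steering.append(event["text"])
    elif kind == "permission":
        request_id = event["request_id"]
        if request_id not in state.pending:
            raise KeyError(f"no pending request {request_id}")
        state.decisions[request_id] = bool(event["approve"])
        del state.pending[request_id]
    else:
        raise ValueError(f"unknown event type: {kind}")


def drain(state: SessionState, inbox: deque) -> None:
    """Apply remote events in arrival order; an agent loop would call this between steps."""
    while inbox:
        apply_remote_event(state, inbox.popleft())
```

Keying approvals by request ID is a deliberate choice in this sketch: a decision sent from one device can only resolve a request the session actually raised.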

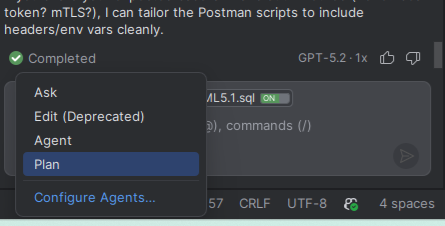

@h34j3j8j asked about the difference between Copilot's "Ask" mode and "Agent" mode in IntelliJ, describing the distinction: "With Ask, it tells me where and what to do. With Agent, it actually does it." The accompanying screenshot shows the mode dropdown (Ask, Edit (Deprecated), Agent, Plan) in the IDE status bar.

The convergence of remote sessions, mobile access, and the /fleet scaling command positions Copilot CLI as moving from a local coding assistant to a device-independent development platform.

1.4 MCP Expanding Into Enterprise and Web3 (🡕)

The Model Context Protocol appeared in three distinct contexts today, signaling breadth of adoption.

@rezadorrani demonstrated the Power Apps Authoring MCP Server, which enables building Canvas Apps using GitHub Copilot CLI and Claude Code. In the replies, @PromptSlinger asked a key architectural question: "does the MCP server actually manipulate the canvas app components directly? or is it more like generating YAML that gets imported?" @oscar_ster3808 offered a broader assessment: "MCP is quietly eating the middleware stack. When Claude Code becomes the primary interface for enterprise tooling, the who-can-build-what question changes completely."

@dungeonroll announced publication on the Anthropic MCP Registry, allowing AI agents using Claude Code or Claude Desktop to "register fighters, wager ETH, and battle to the death autonomously." This represents MCP usage expanding beyond developer tooling into blockchain gaming -- agents interacting directly with smart contracts.
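Under the hood, an MCP server answers JSON-RPC 2.0 requests: `tools/list` advertises the available tools and `tools/call` invokes one. A minimal, transport-free sketch, assuming a single hypothetical `register_fighter` tool (no wagering, no chain calls). A real server would also handle the `initialize` handshake and a transport such as stdio, and would normally be built on an MCP SDK rather than by hand.

```python
import json

# A single hypothetical tool; Dungeon Roll's real tool names and schemas are not given in the post.
TOOLS = {
    "register_fighter": {
        "description": "Register a named fighter for the next match.",
        "inputSchema": {
            "type": "object",
            "properties": {"name": {"type": "string"}},
            "required": ["name"],
        },
    }
}
ROSTER: list[str] = []


def _reply(req_id, result=None, error=None) -> str:
    """Wrap a result or an error in a JSON-RPC 2.0 response envelope."""
    message = {"jsonrpc": "2.0", "id": req_id}
    if error is not None:
        message["error"] = error
    else:
        message["result"] = result
    return json.dumps(message)


def _text_result(text: str, is_error: bool) -> dict:
    return {"content": [{"type": "text", "text": text}], "isError": is_error}


def handle(raw: str) -> str:
    """Answer one JSON-RPC request for the MCP methods tools/list and tools/call."""
    request = json.loads(raw)
    req_id, method = request.get("id"), request.get("method")
    if method == "tools/list":
        tools = [{"name": name, **spec} for name, spec in TOOLS.items()]
        return _reply(req_id, result={"tools": tools})
    if method == "tools/call":
        params = request.get("params", {})
        name, args = params.get("name"), params.get("arguments", {})
        if name not in TOOLS:
            # Unknown tool: reported as a JSON-RPC error in this sketch.
            return _reply(req_id, error={"code": -32602, "message": f"Unknown tool: {name}"})
        fighter = str(args.get("name", "")).strip()
        if not fighter:
            # Bad input: reported as a tool result with isError set, so the model can see it.
            return _reply(req_id, result=_text_result("A fighter name is required.", True))
        ROSTER.append(fighter)
        return _reply(req_id, result=_text_result(f"Registered {fighter} as fighter #{len(ROSTER)}.", False))
    return _reply(req_id, error={"code": -32601, "message": f"Method not found: {method}"})
```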

@DataChaz highlighted Multica, an open-source clone of Claude's Managed Agents that reached 4,000+ GitHub stars within days. Multica integrates with Claude Code, OpenAI Codex, OpenClaw, and OpenCode, offering self-hosted agent infrastructure with reusable skills, multi-workspace isolation, and a shared human-AI board. The workflow -- boot daemon, assign ticket, isolated workspace, live WebSocket updates -- mirrors Anthropic's managed offering but runs on the developer's own servers.
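The Multica workflow above (boot daemon, assign ticket, isolated workspace, live updates) can be sketched as a small state machine. Everything here is hypothetical: the status names, the one-directory-per-ticket isolation, and the `publish` callback standing in for Multica's WebSocket updates are illustrative choices, not Multica's actual API.

```python
import tempfile
from dataclasses import dataclass
from pathlib import Path
from typing import Callable

# Hypothetical ticket lifecycle; Multica's real states may differ.
TRANSITIONS = {
    "assigned": {"running"},
    "running": {"review", "failed"},
    "review": {"done", "running"},
    "done": set(),
    "failed": set(),
}


@dataclass
class Ticket:
    ticket_id: str
    agent: str
    workspace: Path
    status: str = "assigned"


class AgentDaemon:
    """Assigns tickets to agents, each in its own workspace directory."""

    def __init__(self, root: Path, publish: Callable[[dict], None]):
        self.root = root
        self.publish = publish  # stand-in for a WebSocket broadcast to the shared board
        self.tickets: dict[str, Ticket] = {}

    def assign(self, ticket_id: str, agent: str) -> Ticket:
        workspace = self.root / ticket_id
        workspace.mkdir(parents=True)  # raises FileExistsError if the ticket already has one
        ticket = Ticket(ticket_id, agent, workspace)
        self.tickets[ticket_id] = ticket
        self._emit(ticket)
        return ticket

    def advance(self, ticket_id: str, status: str) -> None:
        ticket = self.tickets[ticket_id]
        if status not in TRANSITIONS[ticket.status]:
            raise ValueError(f"{ticket.status} -> {status} is not allowed")
        ticket.status = status
        self._emit(ticket)

    def _emit(self, ticket: Ticket) -> None:
        self.publish({"ticket": ticket.ticket_id, "agent": ticket.agent, "status": ticket.status})


if __name__ == "__main__":
    events: list[dict] = []
    daemon = AgentDaemon(Path(tempfile.mkdtemp()), events.append)
    daemon.assign("T-1", "claude-code")
    for step in ("running", "review", "done"):
        daemon.advance("T-1", step)
    print(events)
```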

1.5 AI Tool Fluency as Job Requirement (🡒)

@degensing mapped AI tool requirements across nine engineering roles, drawing 90 likes: Developers need Cursor + GitHub Copilot, QA needs Playwright + Testim, DevOps needs Terraform + Claude, Data Engineers need Airflow + dbt + AI pipelines, Backend needs Docker + Cursor + system design, Cybersecurity needs Burp Suite + ChatGPT, SRE needs Datadog + Claude, Data Analysts need Power BI + ChatGPT, and Embedded needs TensorFlow Lite for edge AI. The conclusion: "the tools changed. the job titles didn't."

Replies converged on a single theme. @GoldilocksOrbit: "tools outpacing job descriptions is a thing now." @galles72480: "Workflow fluency is the new advantage." @GlennisHaw20741: "The gap is in adoption speed, not skill."

This thread complements @clairevo's podcast episode on Claude Cowork, which explicitly positions Cowork as teaching "the mental models of Claude Code without ever opening a terminal" -- addressing the accessibility gap for the non-coder roles that now need AI fluency.

2. What Frustrates People

Claude Code Token Bloat and Ghost Tokens (High)

Three accounts documented excessive token consumption in Claude Code. @de1lymoon, citing 11 years of experience at Google, reported: "Claude breaking conventions 40% of the time... one hidden bug 20,000 ghost tokens burned." A single markdown rules file cut convention violations from 40% to 3%. @grok stated that "newer Claude Code versions (2.1.100+) inject ~20k extra server-side tokens per request, as confirmed by HTTP proxy tests, Reddit analysis, and open GitHub issues." @a_lamparelli posted screenshots showing a single "continue" command, with no tool calls, consuming 2% of session usage. The /context breakdown shows the fixed overhead before any user message: system prompt 7k tokens (3.5%), system tools 8.9k (4.5%), and autocompact buffer 33k (16.5%).
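The /context figures can be checked against each other. A quick sketch, assuming the percentages are shares of a 200k-token context window; the window size is inferred from 7k being 3.5% and is not stated in the post.

```python
# Token counts and percentages from @a_lamparelli's /context screenshot, as reported.
reported = {
    "system prompt": (7_000, 3.5),
    "system tools": (8_900, 4.5),
    "autocompact buffer": (33_000, 16.5),
}
# Assumption: percentages are shares of a 200k-token window (7k / 200k = 3.5%).
WINDOW = 200_000

for label, (tokens, pct) in reported.items():
    implied = 100 * tokens / WINDOW
    # The screenshot rounds to one decimal place, so allow a small tolerance.
    assert abs(implied - pct) < 0.1, label

overhead = sum(tokens for tokens, _ in reported.values())
print(f"fixed overhead: {overhead:,} tokens ({100 * overhead / WINDOW:.2f}% of the window)")
print(f"left for the conversation: {WINDOW - overhead:,} tokens")
```

All three percentages are consistent with a 200k window, and the line items sum to 48,900 tokens, roughly a quarter of the window. That sum is the basis of the ~50k overhead figure cited elsewhere in this report.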

This continues the cache TTL and token cost concerns from yesterday's report, with today's evidence adding specific version numbers and independent verification methods.

Gemini CLI Account Bans (Medium)

@championswimmer reported an account ban from Gemini CLI with the message "This service has been disabled in this account for violation of Terms of Service," despite rarely using the tool. The user noted this is part of a pattern: "The weird CLI tool bans continue." @justcardz suggested using a Google form to appeal, claiming resolution within an hour.

Google Antigravity Quality and Reliability (Medium)

@pcshipp drew 54 replies, the most in the dataset, by declaring that "Antigravity is one of the worst failed products created by Google. Even after making it free, it still can't compete with tools like Cursor, Codex, and Claude code." Separately, @lMDU1zXxSEbWc5c reported outright downtime. The reply volume suggests the frustration is widely shared rather than an isolated complaint.

Claude Code Learning Curve (Low)

@zodchiii humorously documented the typical Claude Code user journey: "type a long prompt, get a result, rewrite everything yourself, discover Claude has /commands, the checkpoint was always the documentation." The joke lands because it describes a common experience: users burn tokens and effort before discovering built-in slash commands such as /clear and /context.

3. What People Wish Existed

Token Cost Transparency and Observability

@a_lamparelli's screenshots show that Claude Code's /context command provides a token breakdown, but the 2% session burn from a single "continue" remains unexplained to the user. @grok noted that the ~20k server-side token injection is not documented. Combined with yesterday's cache TTL regression report, users consistently ask for real-time cost visibility and predictable billing. The information exists server-side but is not surfaced to the developer in actionable form.

Convention-Aware AI Coding Agents

@de1lymoon demonstrated that a single markdown file reduced Claude Code convention violations from 40% to 3%. The gap between "works out of the box" and "works correctly with your team's conventions" is currently bridged by manual configuration. An agent that automatically detects and adapts to project conventions (linting rules, naming patterns, architectural decisions) without requiring a hand-crafted rules file would address the failure mode @de1lymoon quantified.
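A convention-aware agent would start by inferring conventions rather than being told them. A minimal sketch of one such check, assuming a Python codebase: it counts snake_case versus camelCase function names and renders the dominant style as a line for a markdown rules file. The function names and rule wording are hypothetical; real detection would also need to cover lint configs, imports, and architecture.

```python
import re
from collections import Counter

# Matches `def name(` at the start of a line (Python sources only).
DEF_RE = re.compile(r"^\s*def\s+([A-Za-z_]\w*)\s*\(", re.MULTILINE)
CAMEL_RE = re.compile(r"^[a-z]+(?:[A-Z][a-z0-9]*)+$")
SNAKE_RE = re.compile(r"^[a-z][a-z0-9]*(?:_[a-z0-9]+)+$")


def detect_function_style(sources: list[str]) -> str:
    """Return the dominant function-naming style, skipping ambiguous single-word names."""
    counts = Counter()
    for src in sources:
        for name in DEF_RE.findall(src):
            bare = name.lstrip("_")  # treat _private_helper like private_helper
            if CAMEL_RE.match(bare):
                counts["camelCase"] += 1
            elif SNAKE_RE.match(bare):
                counts["snake_case"] += 1
    return counts.most_common(1)[0][0] if counts else "unknown"


def render_rule(style: str) -> str:
    """Render one line for a markdown rules file; file name and layout are up to the team."""
    return f"- Name Python functions in {style}; match the existing codebase."
```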

Mobile-First Agentic Development

GitHub's remote CLI sessions begin to address this need, but the feature is in public preview with limited mobile app availability (Android beta, iOS TestFlight). The demand signal from @msdev's 47-bookmark post and the remote sessions announcement suggests developers want to monitor, steer, and approve agent work from any device -- not just the machine running the CLI.

4. Tools and Methods in Use

| Tool | Category | Sentiment | Strengths | Limitations |
|---|---|---|---|---|
| Claude Code | Coding agent | Polarized | Runs inside Antigravity via its VS Code extension; Cowork mode for non-coders; convention violations fixable via a markdown rules file | Token bloat (~20k extra server-side tokens per request reported in v2.1.100+); 2% session burn from a single "continue"; 40% convention violation rate without config |
| GitHub Copilot CLI | CLI agent | Positive | /plan, /autopilot, /fleet workflow; remote sessions (web + mobile); device-independent | Remote sessions in public preview; requires admin policy for Business/Enterprise |
| OpenAI Codex | Desktop app | Positive | Super App with browser integration, project sidebar, Basic/Advanced UI modes; GPT-5.4 Extra High | Supply chain security concern (Axios compromise, certificate rotation) |
| Google Antigravity | IDE (VS Code fork) | Polarized | Free; broad Google AI ecosystem integration | Quality complaints; downtime reported; 54-reply backlash thread; "can't compete with Cursor, Codex, Claude Code" |
| Cursor | IDE + AI | Positive | Cited as quality benchmark against Antigravity; paired with Claude for rapid iteration | Referenced but not discussed in depth today |
| Multica | Agent infrastructure | Positive | Open-source managed agents; 4,000+ stars; integrates Claude Code, Codex, OpenClaw, OpenCode | New project; production readiness unclear |
| Claude Cowork | Non-coder agent | Positive | "Brain" file for persistent context; 3 AI review personas; teaches Claude Code mental models without a terminal | Limited to non-coding workflows |
| Power Apps MCP Server | Enterprise MCP | Positive | Builds Canvas Apps via Copilot CLI and Claude Code | Unclear whether it manipulates components directly or generates YAML |

5. What People Are Building

| Project | Who | What it does | Problem it solves | Stack | Stage | Links |
|---|---|---|---|---|---|---|
| Multica | Open-source community, highlighted by @DataChaz | Self-hosted managed agent infrastructure with reusable skills, workspace isolation, shared human-AI board | Anthropic's managed agents are cloud-locked and pricing-locked; no self-hosted alternative existed | Claude Code, Codex, OpenClaw, OpenCode, WebSocket | Shipped (4,000+ stars) | Post |
| Codex Super App | OpenAI, shown by @chetaslua | Full desktop dev environment with integrated browser, project management, agentic tasks, UI customization | Codex was chat-only; developers need browser, code, and project context in one window | GPT-5.4, Codex desktop | Internal preview | Post |
| Copilot CLI Remote Sessions | GitHub, announced by @GHchangelog | Monitor and steer CLI sessions from web or mobile with real-time sync | CLI sessions are locked to the local machine; no mobile access | Copilot CLI, GitHub web, GitHub Mobile | Public preview | Changelog |
| Power Apps Authoring MCP Server | Microsoft, demonstrated by @rezadorrani | Build Canvas Apps via MCP using Copilot CLI or Claude Code | Low-code app building required manual UI; no AI-assisted authoring via MCP | Power Apps, MCP, .pa.yaml | Shipped | Video |
| Dungeon Roll MCP Server | @dungeonroll | AI agents register fighters, wager ETH, and battle autonomously via MCP | No standard way for AI agents to interact with on-chain games | Claude Code, Claude Desktop, Anthropic MCP Registry, Ethereum | Shipped (verified on MCP Registry) | Post |
| Looped | @uselooped | Context aggregator across ChatGPT, Claude Code, Jira, and GitHub | Developer context scattered across multiple AI and project tools | Cross-platform integration | Shipped ($9.99/month) | Post |

6. New and Notable

GitHub Copilot CLI remote sessions launch in public preview. The copilot --remote command streams CLI activity to GitHub in real time, enabling monitoring and steering from web or mobile. Features include mid-session messaging, plan review, mode switching, and permission approval from any device. Available on Android beta and iOS TestFlight. (Changelog)

OpenAI Codex "Super App" screenshots surface. First public images show Codex transforming from a chat interface into a full desktop development environment with integrated browser, project sidebar, and Basic/Advanced UI modes. The status bar shows GPT-5.4 Extra High as the active model. A supply chain security incident (Axios compromise, macOS signing certificate rotation) was flagged in the same thread. (Post)

Multica reaches 4,000+ stars as open-source managed agent infrastructure. The project replicates Anthropic's Managed Agents but runs self-hosted, integrating with Claude Code, Codex, OpenClaw, and OpenCode. Offers reusable skills, workspace isolation, and a shared human-AI task board. (Post)

Claude Code v2.1.100+ reported to inject ~20k server-side tokens per request. According to @grok, HTTP proxy tests, Reddit analysis, and open GitHub issues corroborate reports of this undocumented overhead. A Google engineer (@de1lymoon) separately quantified convention violation rates (40% baseline, 3% with a markdown rules file) and ghost token burns (20,000 per hidden bug). (Post, Post)

MCP reaches the Anthropic Registry for Web3 gaming. Dungeon Roll published as a verified MCP server, enabling AI agents to autonomously wager ETH and execute on-chain game actions -- a novel agent-to-blockchain interaction pattern. (Post)

7. Where the Opportunities Are

[+++] Strong: Self-Hosted Agent Infrastructure

Multica's 4,000+ stars in days quantifies the demand for self-hosted alternatives to cloud-locked managed agent platforms. Anthropic's Managed Agents require their cloud and pricing; enterprise teams need agent orchestration that runs on their own servers with model choice (Claude, GPT, open-source). The pattern -- daemon, ticket assignment, isolated workspace, live updates, reusable skills -- is now proven by Multica. The opportunity is in hardening this for enterprise use: SOC 2 compliance, audit logging, role-based access, and integration with existing CI/CD pipelines. Any team building agent infrastructure should watch this space closely.

[++] Moderate: Token Cost Observability for AI Coding Tools

Today's data adds specific evidence to yesterday's cache TTL concern. @a_lamparelli's /context screenshots show that system overhead (prompt + tools + autocompact buffer) consumes ~50k tokens before a single user message. @grok cites ~20k extra server-side tokens per request in v2.1.100+. @de1lymoon reports 20,000 ghost tokens per hidden bug. A proxy or dashboard that makes these costs visible, predictable, and optimizable would serve every Claude Code user hitting their limits. The data exists but is not surfaced actionably.
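One way a proxy could surface this overhead: log the usage block from each API response and compare total input tokens against what the client believes it sent. A sketch, assuming the field names of the Anthropic Messages API usage object and a client-side estimate obtained separately (for example, by counting tokens in the captured request body). The 10k flagging threshold is an arbitrary illustration.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    """Subset of the usage object returned by the Anthropic Messages API."""
    input_tokens: int
    output_tokens: int = 0
    cache_creation_input_tokens: int = 0
    cache_read_input_tokens: int = 0

    def total_input(self) -> int:
        # Uncached, cache-write, and cache-read tokens are all processed as input
        # (cache reads and writes are billed at different rates).
        return self.input_tokens + self.cache_creation_input_tokens + self.cache_read_input_tokens


def unexplained_overhead(usage: Usage, client_estimate: int) -> int:
    """Input tokens the server processed beyond what the client thinks it sent."""
    return usage.total_input() - client_estimate


def flag_requests(log: list[tuple[Usage, int]], threshold: int = 10_000) -> list[int]:
    """Return indices of logged requests whose unexplained overhead exceeds the threshold."""
    return [i for i, (usage, estimate) in enumerate(log)
            if unexplained_overhead(usage, estimate) > threshold]
```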

[++] Moderate: MCP as Universal Middleware Layer

MCP appeared in three unrelated contexts today: enterprise low-code (Power Apps), Web3 gaming (Dungeon Roll), and agent infrastructure (Multica). @oscar_ster3808 captured the trajectory: "MCP is quietly eating the middleware stack." The opportunity is in MCP tooling -- discovery, testing, security scanning, and monitoring for MCP servers -- as the protocol becomes the default integration layer between AI agents and external systems. The Anthropic MCP Registry is the emerging app store; tools that help developers publish, verify, and maintain MCP servers fill an infrastructure gap.
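A sketch of the "testing and security scanning" idea, assuming tool definitions in the shape MCP servers return from `tools/list` (`name`, `description`, `inputSchema`). The checks and the suspicious-phrase pattern are illustrative heuristics chosen for this example, not an established scanner's rule set.

```python
import re

# Illustrative pattern for instruction-like text hidden in tool descriptions.
SUSPICIOUS = re.compile(r"ignore (all |any )?previous|do not tell the user", re.IGNORECASE)


def lint_tool(tool: dict) -> list[str]:
    """Return human-readable problems found in one tool definition."""
    problems = []
    name = tool.get("name", "")
    if not name:
        problems.append("tool is missing a name")
    description = tool.get("description", "")
    if not description:
        problems.append(f"{name or '?'}: missing description")
    schema = tool.get("inputSchema", {})
    if schema.get("type") != "object":
        problems.append(f"{name}: inputSchema.type should be 'object'")
    properties = schema.get("properties", {})
    for field_name in schema.get("required", []):
        if field_name not in properties:
            problems.append(f"{name}: required field '{field_name}' is not in properties")
    if SUSPICIOUS.search(description):
        problems.append(f"{name}: description contains instruction-like text")
    return problems


def lint_tools(tools: list[dict]) -> list[str]:
    """Lint a whole tools/list result, including duplicate-name detection."""
    problems, seen = [], set()
    for tool in tools:
        name = tool.get("name", "")
        if name in seen:
            problems.append(f"duplicate tool name: {name}")
        seen.add(name)
        problems.extend(lint_tool(tool))
    return problems
```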

[+] Emerging: Non-Coder AI Fluency Tools

@clairevo's characterization of Cowork ("teaches you the mental models of Claude Code without ever opening a terminal") and @degensing's mapping of AI tool requirements across nine engineering roles, most of them outside core development, both point to a growing audience that needs AI fluency without CLI proficiency. The "brain" file pattern (a persistent context document that tells the AI who you are and how you work) is a workflow innovation that could generalize beyond Cowork to any AI tool. Templates and training material for role-specific AI fluency (QA, DevOps, SRE, Data) represent an underserved market.
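The "brain" file pattern amounts to prepending the same persistent context to every request. A minimal sketch, assuming a plain-text file and a rough four-characters-per-token estimate (a heuristic, not a tokenizer). The prompt layout and the 2,000-token cap are illustrative, not how Cowork implements it.

```python
import math
from pathlib import Path

CHARS_PER_TOKEN = 4  # rough rule of thumb for English text; not a real tokenizer


def estimate_tokens(text: str) -> int:
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def load_brain(path: Path, max_tokens: int = 2_000) -> str:
    """Read a persistent context file and refuse it if it would crowd the context window."""
    text = path.read_text(encoding="utf-8").strip()
    if estimate_tokens(text) > max_tokens:
        raise ValueError(f"{path} is ~{estimate_tokens(text)} tokens; trim it below {max_tokens}")
    return text


def build_prompt(brain: str, task: str) -> str:
    """Prepend the brain file to every task so each request starts from the same context."""
    return f"About me and how I work:\n{brain}\n\nTask:\n{task}"
```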

[+] Emerging: Device-Independent Agentic Development

GitHub's remote CLI sessions and Codex's desktop Super App both address the same constraint: agentic coding workflows locked to a single device. The opportunity is in the tooling layer that enables seamless handoff between devices -- start a task on a workstation, monitor from mobile, approve permissions from a tablet. GitHub has the first-mover advantage with copilot --remote, but the pattern generalizes to any CLI agent. Mobile-native interfaces for agent steering (not just monitoring) are the next step.

8. Takeaways

- Google Antigravity polarizes the AI coding community. The day's top tweet (155 bookmarks) promotes Claude Code + Antigravity integration, while the most-replied-to post (54 replies) calls Antigravity "one of the worst failed products." Free pricing is not overcoming quality and reliability gaps against Cursor, Codex, and Claude Code. (Tutorial, Criticism)

- OpenAI is transforming Codex from a chat tool into a full desktop development environment. First screenshots show an integrated browser, project management sidebar, agentic task prompts, and UI customization (Basic vs Advanced modes). This goes beyond yesterday's "desktop app race": it is a platform play. (Screenshots)

- GitHub Copilot CLI is becoming device-independent. Remote sessions, mobile access, and the /fleet scaling command position Copilot CLI as a development platform rather than a local tool. The shift from "run on your machine" to "steer from any device" changes what a CLI agent can be. (Changelog)

- Claude Code token overhead is now independently quantified. Version 2.1.100+ reportedly injects ~20k extra server-side tokens per request. System overhead (prompt + tools + buffer) consumes ~50k tokens before any user message. A single markdown rules file reduces convention violations from 40% to 3%. The fix exists but requires manual setup. (Token evidence, Convention fix)

- MCP is expanding from developer tools into enterprise and Web3. Power Apps MCP Server, Dungeon Roll on the Anthropic MCP Registry, and Multica's multi-tool agent infrastructure all use MCP as their integration layer. The protocol is becoming middleware. (Power Apps, Dungeon Roll)

- Self-hosted agent infrastructure has strong demand. Multica's 4,000+ stars within days for an open-source clone of Anthropic's Managed Agents suggests that cloud-locked agent orchestration is a barrier the open-source community is already working around. (Multica)

- AI tool fluency is becoming a cross-role requirement, not a developer-only skill. Nine engineering roles now carry AI tool requirements that their job descriptions do not state. The competitive advantage is "adoption speed, not skill." Claude Cowork and similar tools are the on-ramp for non-coders. (Role mapping, Cowork guide)