Twitter AI Coding - 2026-04-11

1. What People Are Talking About

1.1 The Rate Limit Squeeze Hits Every Provider (🡕)

The dominant conversation of the day: every major AI coding provider is tightening usage limits simultaneously, and developers are scrambling to adapt.

@HarshithLucky3 posted the highest-engagement thread (722 likes, 68K views): "First Google reduced Antigravity rate limits for Pro users. Next Anthropic did the same for Claude web/app and Claude Code. Now, OpenAI is doing the same with Codex." After the $100/month plan launched, Plus users report receiving roughly one-third of their previous usage. A reply from @cepvi0 observed that Google did it first so "everyone forgot," with attention diverting to Anthropic's subsequent cuts. @Alaric4678 asked whether GitHub Copilot would be next, suggesting open-source models as "the only solution."

That prediction came true the same day. @douglascamata noted that GitHub Copilot published a changelog entry -- "Enforcing new limits and retiring Opus 4.6 Fast from Copilot Pro+" -- introducing premium request quotas: 300/month on Pro ($10/month) and 1,500/month on Pro+ ($39/month), with overages at $0.04/request and model multipliers ranging from 1x to 50x for frontier models. He called it "great vagueposting to avoid backlash" since the post omitted specific old-vs-new comparisons. @SPARKSERiAN captured the timing: "I was just saying GitHub Copilot plans are the best option right now after both OpenAI and Anthropic rolled out those 5 hour limits... and then this happened."

@andacgvn shared a screenshot of the Reddit post "Anthropic made Claude 67% dumber and didn't tell anyone, a developer ran 6,852 sessions to prove it." The data is striking: across 17,871 analyzed thinking blocks, reasoning depth dropped 67%, file reads per session fell from 6.6 to 2, one in three edits was made without reading the file at all, and the word "simplest" appeared 642% more often in outputs. Anthropic's Boris Cherny attributed it to an "adaptive thinking" feature meant to save tokens on easy tasks that was erroneously throttling hard problems too. The issue was closed over user objections.

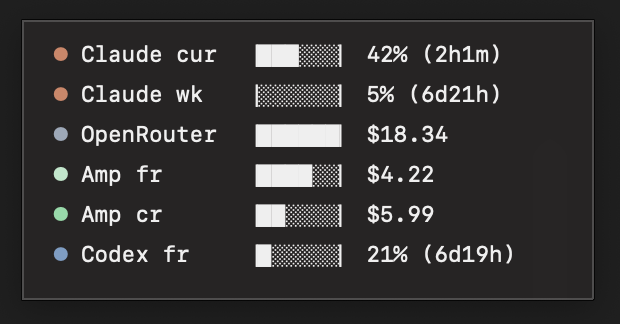

@rvtond built a desktop widget to track rate limits across Claude, OpenRouter, Amplify, and Codex simultaneously -- "no more refreshing 27 browser tabs." The widget screenshot reveals a developer actively consuming from several providers at once, with Claude at 42% of its current-period limit and Codex at 21%.

1.2 Open-Source Models and Budget Stacking Become the Response (🡕)

The rate limit squeeze is directly driving adoption of open-source models through aggregator services.

@mhdcode laid out the value case (240 likes, 172 bookmarks): "$10 OpenCode Go + $10 GitHub Copilot = ~$80 worth of usage." OpenCode Go, confirmed at $5 for the first month then $10/month, provides spend-based limits ($12/5 hours, $30/week, $60/month) across GLM-5, GLM-5.1, Kimi K2.5, MiMo-V2-Pro, MiMo-V2-Omni, MiniMax M2.5, and MiniMax M2.7. A reply from @Wayland_Six asked whether OpenCode supports alternative model gateways, and @mhdcode confirmed it does via opencode.json configuration.

@andacgvn published a comprehensive pricing guide for developers looking to escape the rate limit squeeze. The breakdown covers seven alternative services: OpenCode Go ($10/month), Xiaomi MiMo Token Plans ($6-$100/month with tiered credit pools), MiniMax Token Plans ($10-$50/month with up to 30,000 requests per 5 hours at the highest tier), Chutes ($3-$20/month), Novita Coding Plans ($19.90-$199.90/month with RPM guarantees up to 450), Alibaba Cloud Coding ($50/month with 6,000 requests per 5 hours), and Z AI GLM Plans ($10-$80/month).

@gubatron advocated directly: "A good friend doesn't let you use Claude 4.6 Sonnet without telling you to switch to GLM-5.1 in OpenCode harness via OpenRouter. Pretty much same intelligence level at coding and tool usage for a fraction." He estimates open-source is catching up at a 2.2-2.5 month lag and predicts it will win unless Anthropic and OpenAI compete on price.

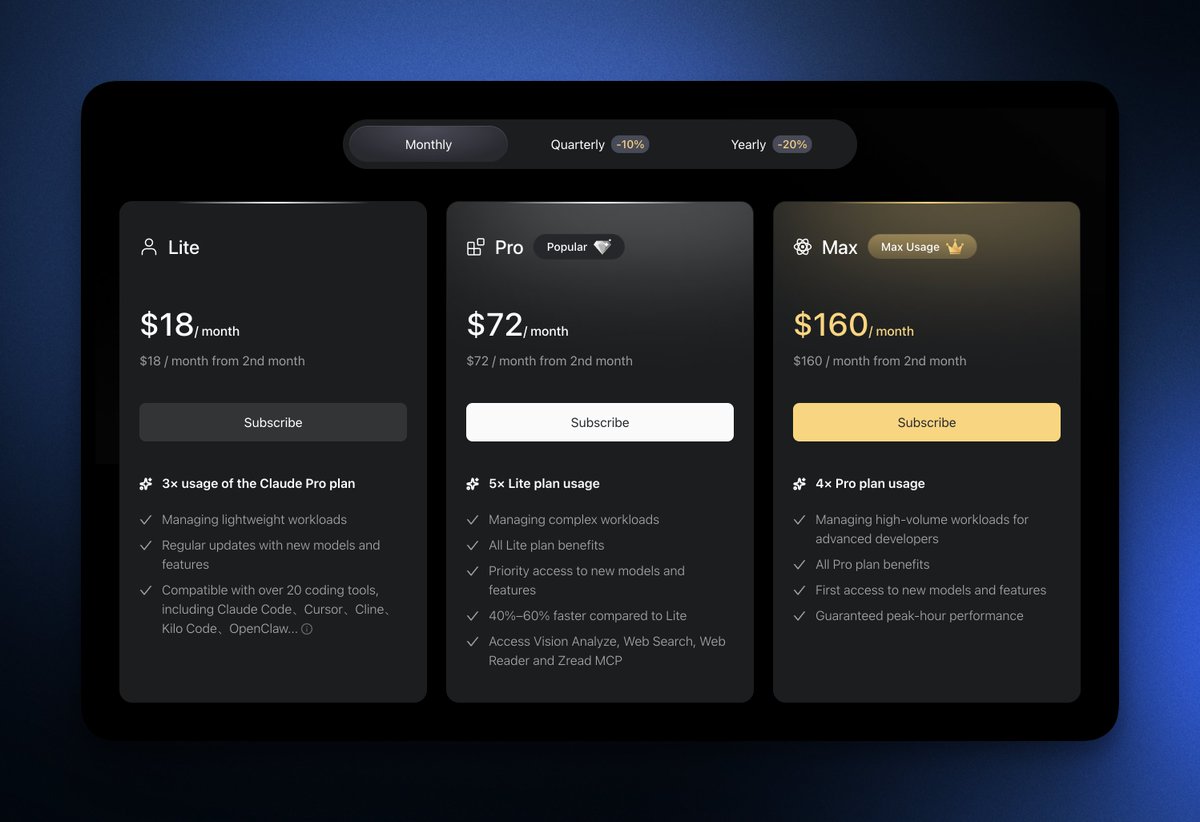

@robinebers critiqued Z AI's pricing for GLM-5.1 specifically: the $18/month Lite plan is slow with low limits, the jump to $72/month Pro is extreme, and $160/month Max seems unreasonable when OpenCode Go offers the same model for $10/month.

@ardadev contributed to the ecosystem by syncing all Kilo Code gateway models to the models.dev database so they work in OpenCode, starting with GLM-5.1 pull requests.

1.3 The Harness Layer Emerges as the Real Product (🡒)

A quieter but structurally important thread: the value in AI coding tools is shifting from the model to the surrounding infrastructure.

@brunoborges demonstrated (79 likes) why GitHub Copilot's value proposition is the agent harness, not any single model. His screenshot shows the Copilot issue assignment dialog with a model selector (Claude Opus 4.6) and an agent dropdown listing three built-in agents (Copilot, Claude, Codex) plus custom agents (Agentic Workflows, OpenGrep Autofix Agent). "Why should I subscribe to GitHub Copilot? Because you have access to three CLI Runners agent harness."

@VeritasDYOR analyzed the Claude Code npm source leak: 512,000 lines of TypeScript, clean-room rewritten within 24 hours, 100K GitHub stars (fastest in history). His architecture diagram contrasts the "current focus" of most open-source tools -- a thin wrapper from discovery (MCP/API aggregation) through model inference to execution -- with the "production stack" revealed in Claude Code's source, where a massive harness layer sits between discovery and model, handling tool sandboxing, context management, error recovery, permission control, state checkpointing, and sub-agent orchestration.

@samsaffron illustrated the harness gap concretely by comparing table rendering in Claude Code versus term-llm. Claude Code renders structured data (a team roster) as a properly formatted, aligned ASCII table with correct column spacing, while term-llm shows the same data with poor alignment and word wrapping failures. "Table rendering implementation in Claude Code is very thoughtful -- lots of little details there like collapsing the table and rendering differently when out of space."

@RNR_0 raised the economic question: "Claude is giga expensive when using the API. Have to use their Claude Code or chat, which they prob run at a massive loss. Codex is cheap and great with Hermes, OpenClaw etc." This frames the harness layer as a loss leader designed to keep users in the ecosystem.

1.4 Vibe Coding Projects Get More Ambitious (🡒)

Vibe coding is producing increasingly complex results, though skeptics remain.

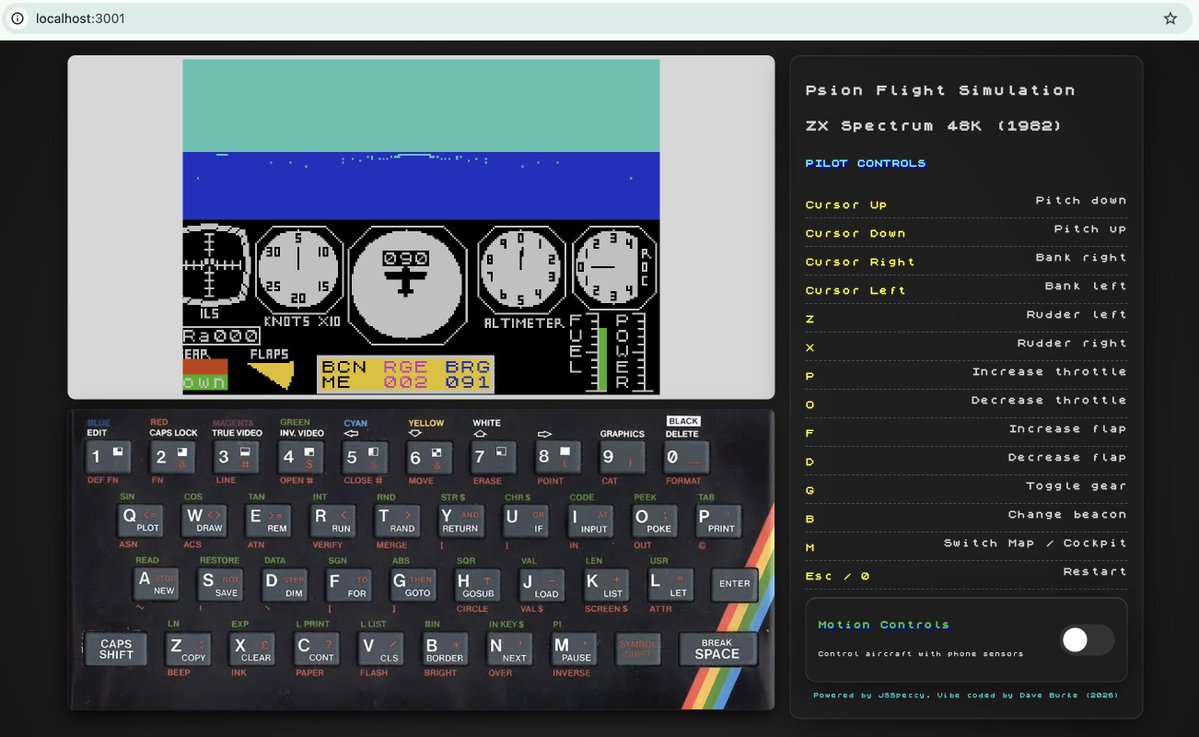

@davey_burke resurrected the Psion Flight Simulation from 1982 (originally ZX Spectrum 48K) as a full browser application using Claude Code with gcloud-mcp for deployment to Google Cloud. The result includes a virtual skeuomorphic ZX Spectrum keyboard, cockpit instruments, accelerometer/gyroscope controls for phone, and sound effects the original game never had.

@RoundtableSpace posted the day's highest-scoring tweet (1,686 score, 188 likes, 287 bookmarks) on turning Claude Code into an "AI SEO machine" with content, keyword, and search optimization workflows. A reply from @jxkedevs pushed back: "decent setup but SEO is dying to AI search anyway. The real play is optimizing for LLMs not Google." Another from @theswolesurfer noted it still cannot replace Semrush or Ahrefs.

@leondevinci_ delivered a rebuttal (44 likes) to the "everyone can code now so code is worthless" narrative: "This is wrong for the same reason it was wrong when Stack Overflow launched, when WordPress launched, and when GitHub Copilot launched. Every time the floor gets automated, the ceiling gets higher. WordPress didn't kill web dev salaries."

@cgarciae88 described using Gemini Flash Light in OpenCode to debug and fix a Hermes Agent WhatsApp gateway on the fly -- "crazy how you can just patch software on the fly now" -- pointing to a workflow where AI agents fix AI agents.

2. What Frustrates People

Synchronized Rate Limit Reductions (High)

Every major provider -- Google (Antigravity), Anthropic (Claude Code), OpenAI (Codex), and now GitHub Copilot -- reduced usage limits within the same period, creating the perception of coordinated enshittification. @HarshithLucky3 documented the pattern with 722 likes of agreement. Plus-tier users across platforms report getting roughly one-third of previous usage for the same price. The $100/month plans effectively re-price what $20/month used to provide.

Stealth Quality Degradation (High)

The Claude Code "adaptive thinking" incident (shared by @andacgvn) represents a deeper frustration: providers silently reducing model capability. A developer analyzed 6,852 sessions and 17,871 thinking blocks to quantify a 67% drop in reasoning depth. Anthropic acknowledged the bug only after the data was posted publicly on GitHub. The issue was closed despite user objections. @cousinvanko summarized the sentiment: "all of these companies need to just focus on not having to nuke their thinking and intelligence to hades after 2 months of the model's existence."

Pricing Opacity and Tier Gouging (Medium)

Multiple providers offer the same underlying models at wildly different price points. GLM-5.1 is available for $10/month via OpenCode Go but costs $72/month at Z AI's Pro tier for faster access. @robinebers called the pricing "insane". The lack of standardized benchmarks for what "faster" or "higher limits" means in practice makes comparison shopping difficult.

Multi-Provider Juggling Overhead (Medium)

@rvtond built a widget specifically because tracking usage across five+ providers requires refreshing 27 browser tabs. This operational overhead -- managing multiple subscriptions, API keys, rate windows, and billing cycles -- is a tax on productivity that did not exist when one subscription sufficed.

3. What People Wish Existed

A Unified Rate Limit and Cost Dashboard

@rvtond built a prototype, but developers need a proper cross-provider dashboard that tracks usage, predicts when limits will be hit, and auto-routes requests to the cheapest available provider in real time. The widget approach works for monitoring but lacks the routing intelligence to optimize spend.
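The routing idea described above can be sketched in a few lines. This is a minimal illustration, not how any existing widget or router works: the `ProviderStatus` record, the `pick_provider` function, the `headroom` parameter, and the per-request cost figures are all hypothetical. Only the 42% and 21% usage figures come from the widget screenshot.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ProviderStatus:
    """Snapshot of one provider's quota window (hypothetical schema)."""
    name: str
    quota_used: float        # fraction of the current window consumed, 0.0 to 1.0
    cost_per_request: float  # USD; an assumed, normalized estimate


def pick_provider(
    providers: Sequence[ProviderStatus], headroom: float = 0.10
) -> Optional[ProviderStatus]:
    """Return the cheapest provider that still has at least `headroom` quota left.

    Returns None when every provider is too close to its limit.
    """
    available = [p for p in providers if p.quota_used <= 1.0 - headroom]
    if not available:
        return None
    return min(available, key=lambda p: p.cost_per_request)


if __name__ == "__main__":
    snapshot = [
        ProviderStatus("Claude", 0.42, 0.05),      # usage from the screenshot; cost assumed
        ProviderStatus("Codex", 0.21, 0.03),       # usage from the screenshot; cost assumed
        ProviderStatus("OpenRouter", 0.95, 0.01),  # fully hypothetical values
    ]
    choice = pick_provider(snapshot)
    print(choice.name if choice else "no provider available")
```

A real router would also need to weigh task complexity and model capability, which this sketch deliberately leaves out.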

Transparent Model Quality Metrics

The Claude Code 67% degradation went undetected until a user ran thousands of sessions. No provider publishes ongoing quality metrics for their coding models. Developers want a third-party benchmarking service that continuously monitors model performance on real coding tasks and alerts on regressions.
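As a rough sketch of what a regression alert could compute, the snippet below compares per-metric averages against a baseline and flags large relative drops. The function names, the metric keys, and the 25% threshold are assumptions for illustration, and it assumes that higher values are better for every metric. The 6.6 and 2.0 file-read figures are the ones reported in the Reddit post.

```python
from typing import Dict


def relative_drop(baseline: float, current: float) -> float:
    """Fractional decrease from baseline to current (0.5 means a 50% drop)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) / baseline


def regressions(
    baseline: Dict[str, float],
    current: Dict[str, float],
    threshold: float = 0.25,  # assumed alert threshold, not from the source
) -> Dict[str, float]:
    """Return metrics whose relative drop meets or exceeds the threshold."""
    flagged = {}
    for metric, base_value in baseline.items():
        if metric not in current:
            continue  # skip metrics that were not measured this period
        drop = relative_drop(base_value, current[metric])
        if drop >= threshold:
            flagged[metric] = drop
    return flagged


if __name__ == "__main__":
    before = {"file_reads_per_session": 6.6}
    after = {"file_reads_per_session": 2.0}
    for name, drop in regressions(before, after).items():
        print(f"{name}: down {drop:.0%}")
```

Metrics where lower is better (for example, edits made without reading the file) would need the sign flipped before being passed in.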

An OpenCode-Like Harness for Frontier Models

@gubatron and @mhdcode both point to OpenCode as the best value, but it currently works only with open-source models. Developers want the same harness flexibility -- model-agnostic, provider-agnostic, with local configuration -- but with the option to use frontier models (Claude Opus 4.6, GPT-5.4) when open-source falls short on complex tasks.

Standardized Provider Pricing Comparison

@andacgvn manually compiled a pricing comparison across seven providers. This should be a maintained, searchable database with normalized metrics: cost per request, cost per token, requests per time window, model availability, and latency guarantees.

4. Tools and Methods in Use

| Tool | Category | Sentiment | Strengths | Limitations |
|---|---|---|---|---|
| Claude Code | Coding Agent | Mixed | 512K-line harness with polished UX (table rendering, context management, state checkpointing); three-agent integration in Copilot | Rate limits reduced; "adaptive thinking" degradation incident; expensive API pricing |
| OpenCode + OpenCode Go | Coding Agent + Subscription | Positive | $10/month for GLM-5.1, Kimi K2.5, MiMo-V2-Pro and more; model-agnostic harness; opencode.json config | Open-source models only; no frontier model access; newer ecosystem |
| GitHub Copilot | Coding Agent Platform | Mixed | Three CLI runner agents (Copilot, Claude, Codex); custom agent support; IDE integration | New premium request quotas (300/month on Pro); model multipliers up to 50x eat quota fast |
| Codex (OpenAI) | Coding Agent | Positive | Cheaper API pricing than Claude; works well with Hermes and OpenClaw | Rate limits also tightening; Plus users report reduced allocation |
| Hermes Agent | Self-Hosted Agent | Positive | 77K GitHub stars; persistent memory; 47 tools; 15+ messaging surfaces; cron scheduling; MIT licensed | Self-hosting overhead; requires own infrastructure |
| OpenRouter | Model Router | Positive | Access to multiple models through single API; cost transparency | Additional layer of abstraction; depends on upstream provider availability |
| Gemini Flash Light | Coding Model | Positive | Fast for debugging and quick fixes in OpenCode | Less capable than frontier models for complex reasoning |
| GLM-5.1 | Open-Source Model | Positive | Near-Claude coding quality at fraction of cost; available via OpenCode Go and OpenRouter | 2.2-2.5 month capability lag behind frontier models |
| gcloud-mcp | Deployment Tool | Positive | Direct deployment from Claude Code to Google Cloud | Specific to Google Cloud ecosystem |
| Kilo Code Gateway | Model Gateway | Neutral | Model catalog synced to models.dev; works with OpenCode | Smaller ecosystem; early stage |

5. What People Are Building

| Project | Who built it | What it does | Problem it solves | Stack | Stage | Links |
|---|---|---|---|---|---|---|
| Multi-provider rate limit widget | @rvtond | Desktop overlay tracking usage across Claude, OpenRouter, Amplify, Codex simultaneously | Developers juggling 5+ provider dashboards | Desktop widget | Shipped | Post |
| ZX Spectrum Flight Sim (browser) | @davey_burke | 1982 Psion Flight Simulation recreated in Chrome with virtual keyboard, cockpit instruments, phone gyroscope controls | Retro game preservation via vibe coding | Claude Code, gcloud-mcp, JSSpeccy, Google Cloud | Shipped | Post |
| Hermes Agent comparison page | @nesquena | Feature comparison table: Hermes vs OpenClaw vs Claude Code vs Codex with persistent memory, self-hosting, scheduling dimensions | No clear competitive comparison existed for self-hosted agents | GitHub Pages, Hermes docs | Shipped | Page |
| OpenCode + Kilo Code model sync | @ardadev | Synced Kilo Code gateway models to models.dev database for OpenCode compatibility, starting with GLM-5.1 | OpenCode lacked access to Kilo Code model catalog | OpenCode, models.dev, PRs | Shipped | Post |
| Shipper (Claude Opus 4.6 package) | @shipper_now | Pre-packaged Claude Opus 4.6 workflows for building web/mobile apps, Chrome extensions, email marketing, app translation, self-maintenance | High barrier to entry for non-technical app creation | Claude Opus 4.6, Shipper platform | Beta | Post |

The multi-provider rate limit widget from @rvtond is the most practically useful build of the day. The screenshot shows Claude at 42% current-period consumption (2h1m remaining), Claude weekly at 5%, OpenRouter at $18.34 spent, and Codex at 21% (6d19h remaining). It solves a problem that did not exist six months ago but is now universal among power users managing fragmented AI coding subscriptions.

The ZX Spectrum Flight Simulation from @davey_burke is notable for its scope and fidelity. Recreating a 1982 game complete with skeuomorphic keyboard and phone sensor integration demonstrates that vibe coding can produce polished, deployable applications beyond simple prototypes.

6. New and Notable

Claude Code Source Leak Reveals 512K Lines of Harness Infrastructure

@VeritasDYOR analyzed the implications of Claude Code's source leaking through a botched npm publish. The 512,000 lines of TypeScript were clean-room rewritten within 24 hours, accumulating 100K GitHub stars -- the fastest in GitHub history. The architectural insight is significant: production-grade coding agents require a massive harness layer between tool discovery and model inference, handling tool sandboxing, context management, error recovery, permission control, state checkpointing, and sub-agent orchestration. Most open-source alternatives are thin wrappers that skip this layer entirely.

GitHub Copilot Introduces Premium Request Quotas

GitHub published new rate limits on April 10, introducing a "premium request" currency system. Pro users ($10/month) get 300 premium requests/month, Pro+ ($39/month) gets 1,500, with $0.04 per overage request. Advanced models carry multipliers up to 50x, meaning a single Claude Opus 4.6 interaction could consume 50 of the 300 monthly requests. Opus 4.6 Fast mode was retired from Pro+. This completes the industry-wide tightening: Google, Anthropic, OpenAI, and now GitHub have all reduced limits within weeks of each other.
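The quota arithmetic above can be made concrete with a short sketch. The plan prices, included requests, overage rate, and 50x multiplier are the figures quoted in this section; the `Plan` class and function names are hypothetical, and the assumption that overage is billed per premium request after the multiplier is applied is a guess about the billing model, not something the changelog summary confirms.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    monthly_price: float    # USD per month
    included_requests: int  # premium requests included per month


OVERAGE_PER_REQUEST = 0.04  # USD per premium request beyond the quota

PRO = Plan("Pro", 10.0, 300)
PRO_PLUS = Plan("Pro+", 39.0, 1500)


def interactions_before_overage(plan: Plan, multiplier: float) -> int:
    """Whole interactions that fit in the monthly quota at a given multiplier."""
    return int(plan.included_requests // multiplier)


def monthly_cost(plan: Plan, interactions: int, multiplier: float) -> float:
    """Plan price plus overage, assuming overage applies to multiplied requests."""
    used = interactions * multiplier
    overage = max(0.0, used - plan.included_requests)
    return plan.monthly_price + overage * OVERAGE_PER_REQUEST


if __name__ == "__main__":
    for plan in (PRO, PRO_PLUS):
        fits = interactions_before_overage(plan, 50)
        print(f"{plan.name}: {fits} interactions at 50x before overage")
    print(f"Pro, 10 interactions at 50x: ${monthly_cost(PRO, 10, 50):.2f}")
```

Under these assumptions, a Pro subscriber exhausts the monthly quota after six 50x interactions, and Pro+ after thirty.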

Hermes Agent Reaches 77K GitHub Stars with Full Self-Hosting Story

The Hermes Agent comparison page published by @nesquena positions the self-hosted agent as a synthesis play: persistent memory as local markdown files, cron scheduling for autonomous tasks, 47 built-in tools, 15+ messaging surfaces (Telegram, Discord, Slack, WhatsApp, Signal, Matrix, and more), and the ability to spawn Claude Code or Codex as sub-agents. At 77K+ GitHub stars and MIT licensed, it represents the most mature open-source alternative to vendor-locked coding agents. @cgarciae88 demonstrated using Gemini Flash Light in OpenCode to debug and fix a Hermes WhatsApp gateway in real time.

OpenCode Go Emerges as the Budget Developer's Default

At $10/month with access to GLM-5.1, Kimi K2.5, MiMo-V2-Pro, MiniMax M2.5, and MiniMax M2.7, OpenCode Go is rapidly becoming the default recommendation for cost-conscious developers. Multiple independent users (@mhdcode, @gubatron, @robinebers) converge on the same assessment: open-source models via OpenCode deliver comparable coding quality to frontier models at a fraction of the cost.

7. Where the Opportunities Are

[+++] Strong: Cross-Provider Usage Management and Smart Routing. Every developer in today's dataset manages multiple AI coding subscriptions with different rate windows, pricing models, and billing cycles. @rvtond built a monitoring widget; the next step is intelligent routing that automatically directs requests to the cheapest available provider based on real-time quota status, task complexity, and model capability. This is a tool-layer problem that could be solved independently of any provider. (rvtond widget, mhdcode budget stacking)

[+++] Strong: Continuous Model Quality Monitoring. The Claude Code 67% degradation incident shows that providers silently reduce model quality, and no independent monitoring exists. A service that continuously benchmarks coding model performance on standardized tasks -- tracking reasoning depth, code correctness, file-read patterns, and thinking token usage -- and publishes regression alerts would serve both developers and enterprise procurement teams evaluating provider reliability. (andacgvn Reddit data)

[++] Moderate: Open-Source Harness Infrastructure. The 512K-line gap between Claude Code's production harness and typical open-source thin wrappers is now publicly documented. Projects that build production-grade harness layers -- context management, error recovery, state checkpointing, sub-agent orchestration -- on top of open-source models could capture the value currently locked inside proprietary coding agents. Hermes Agent is the closest entrant but focuses on general-purpose agent workflows rather than pure coding. (VeritasDYOR architecture analysis, samsaffron table rendering comparison)

[++] Moderate: Standardized AI Coding Provider Marketplace. @andacgvn manually compiled pricing across seven providers. A maintained comparison platform with normalized metrics -- cost per request, tokens per dollar, requests per time window, model availability, latency, and quality benchmarks -- would reduce the research burden that currently falls on individual developers. (andacgvn pricing guide, robinebers pricing critique)

[+] Emerging: Model-Agnostic Agent Harnesses with Frontier Fallback. The current ecosystem bifurcates: OpenCode/Hermes for open-source models, Claude Code/Copilot for frontier models. A harness that defaults to cheap open-source models but seamlessly escalates to frontier models for complex tasks -- with cost guardrails and quality thresholds -- would capture the developer workflow @gubatron describes, where open-source handles routine work and frontier models handle edge cases. (gubatron on GLM-5.1 parity)

8. Takeaways

- The rate limit squeeze is industry-wide and simultaneous. Google, Anthropic, OpenAI, and GitHub all tightened limits within weeks. The $20/month tier now delivers roughly one-third of what it provided six months ago, while $100/month plans recapture the original allocation. This is a structural repricing, not a temporary adjustment. (HarshithLucky3, douglascamata on Copilot)

- Open-source models are the primary beneficiary. GLM-5.1, Kimi K2.5, and MiMo-V2-Pro via OpenCode Go at $10/month are now the default recommendation from multiple independent practitioners who report comparable coding quality to frontier models. The open-source capability lag is estimated at 2.2-2.5 months and closing. (mhdcode, gubatron)

- The harness layer, not the model, is the moat. Claude Code's leaked 512K lines of TypeScript infrastructure -- tool sandboxing, context management, error recovery, state checkpointing -- represents years of engineering that thin-wrapper open-source tools cannot replicate quickly. GitHub Copilot's value proposition is explicitly the multi-agent harness, not any single model. (brunoborges, VeritasDYOR)

- Stealth quality degradation is an unresolved trust problem. A developer had to run 6,852 sessions to prove Claude Code's reasoning depth dropped 67%. Providers acknowledged the issue only after public pressure. No independent monitoring service exists. This will become a procurement blocker for enterprise teams that need reliability guarantees. (andacgvn)

- Multi-provider arbitrage is the new developer workflow. Power users now manage five or more AI coding subscriptions simultaneously, optimizing across rate windows and pricing models. The operational overhead of this approach -- tracking quotas, switching contexts, managing API keys -- is itself creating demand for tooling that did not exist six months ago. (rvtond)

- Self-hosted agents offer an escape from the squeeze. Hermes Agent at 77K GitHub stars represents a mature alternative where developers control their own infrastructure, choose their own models, and face no externally imposed rate limits. The tradeoff is operational complexity, but as provider restrictions tighten, that tradeoff becomes increasingly favorable. (nesquena)