Twitter AI Coding — 2026-04-20¶

1. What People Are Talking About¶

1.1 GitHub Copilot Pricing Upheaval: Signup Pause, Rate Limits, Token Billing 🡕¶

The day's most consequential news broke via @edzitron, who reported (143 likes, 8,989 views) that "major changes are coming to rate limits on GitHub Copilot this week for individual plans, opus models leaving the $20 plan, and potentially even token-based billing." He followed up with an exclusive article (53 likes, 3,288 views) citing internal Microsoft documents revealing that the weekly cost of running GitHub Copilot has doubled since January, making token-based billing a "top priority."

Hours later, GitHub confirmed the changes. @chribjel shared (10 likes, 894 views) the official @GHchangelog announcement: new signups for Copilot Pro, Pro+, and Student plans are paused "to maintain service reliability for current users," with usage limits tightened and Pro+ offering 5X higher limits than Pro.

@Techmeme aggregated (7 likes, 2,624 views) the full scope: Opus models removed from Pro, Opus 4.7 limited to Pro+. @badlogicgames added a PSA (38 likes, 3,612 views): "opus 4.7 via github copilot only supports medium thinking." A reply from @cherry_mx_reds called it "highway robbery" at 7.5x credit cost.

@edandersen surfaced a separate flaw (33 likes, 5,030 views): "Paid Premium Request budgets can only be set org wide — you cannot give a subset of users credits for a special project or anything — meaning a random dev can blow the entire company's budget in a day." @ZakSMorris reported (4 likes, 168 views) paying GBP 39/month for Pro+ and having it "downgraded overnight" with no warning.

Counter-point from @thisisjonc, who showed (31 likes, 1,155 views) that on the $100/month plan at Extra High effort, only 10% weekly usage is consumed: "that's completely reasonable."

Discussion insight: The reply thread on edzitron's initial post captures the tension. @mrnuu noted Opus and Anthropic models have been missing from Copilot since mid-to-late March, and that request-based pricing with expensive models was unsustainable. @GlennDubby predicted: "When corps see the ACTUAL price and measure that against what they are getting for the 'intelligence', the pullback from LLMs will be strong."

Comparison to prior day: Yesterday's Copilot story was about Ollama enabling free local agent runs. Today the narrative inverts: Microsoft is tightening access to the cloud product, removing models, and pausing new users. The local-first option from yesterday now looks prescient as the cost squeeze accelerates.

1.2 Codex Desktop App Surges, Chronicle Adds Screen-Context Memory 🡕¶

The day's highest-scoring post by far: @0xSero praised the Codex desktop app (546 likes, 20,128 views, score 1182.2) as going "from being unusable to the #1 experience in 'agentic engineering' rn. The UI is phenomenal, computer use is completely non-disruptive and powerful."

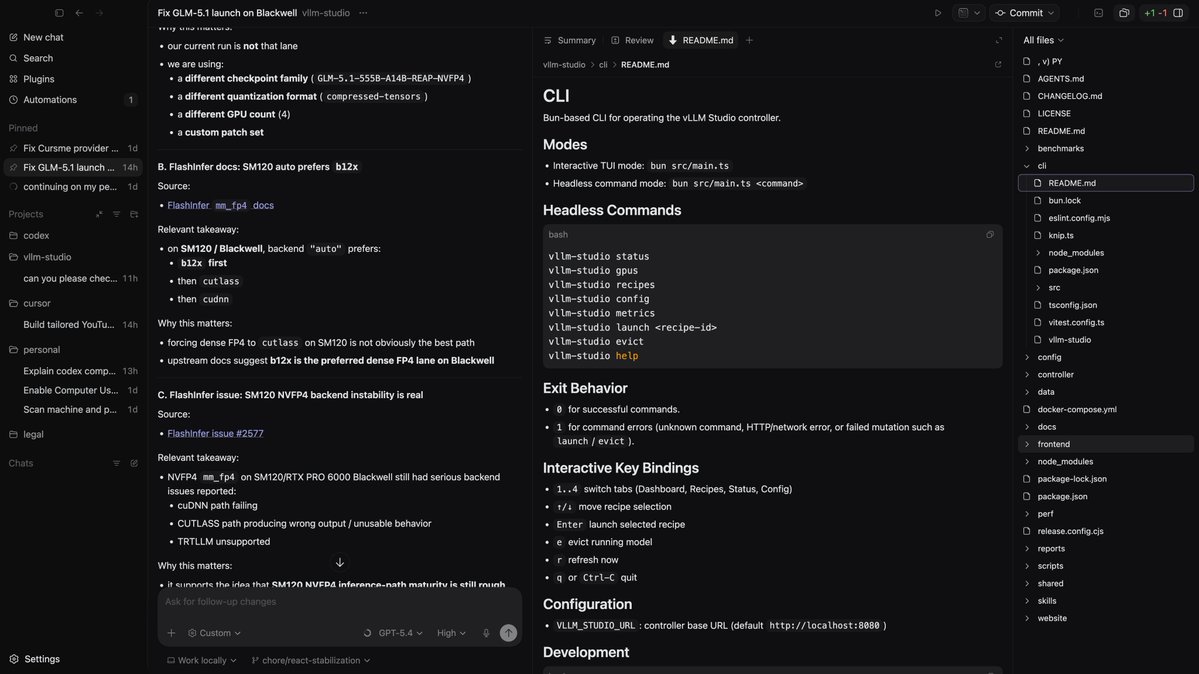

The screenshot shows Codex running GPT-5.4 on a vllm-studio project, debugging GPU inference paths for Blackwell architecture — a real engineering task, not a demo. A reply from @m13v_ explained why computer-use works well on macOS: "the AX tree has been stable since OS X and every native app exposes it cleanly. Same playbook on Windows UIA hits inconsistency issues almost immediately."

@rileybrown posted (276 likes, 8,568 views): "If OpenAI releases a model that is as good at design as Opus 4.7, Codex App will be WAY ahead." A reply from @Ixel111 suggested GPT-5.4 plus GPT-Image-2 could close the gap: "Assuming both models come out this week, we'll see." This design quality gap was echoed by @haider1 (18 likes, 1,397 views): "i really hope openai improves front-end quality in the next gpt-5.x version" and @TeeDevh (3 likes, 131 views): "Is there a way to get good UI out of Codex? It's great at logic, but not even close to Claude for design."

Separately, OpenAI launched Chronicle. @embirico (OpenAI) announced (90 likes, 4,124 views): "Codex has memories, and now they can include recent desktop context — ie what you've been working on." Chronicle takes periodic screenshots to build memory of projects, errors, and workflows. Pro only, macOS only, "super experimental."

@TechieUltimatum detailed (8 likes, 59 views) the implementation: memories stored locally as markdown files, opt-in permissions required, privacy warnings included. A reply from @Steffan0xd on @coinbureau's coverage (19 likes, 5,504 views) captured the privacy tension: "I am definitely not ready for an AI to be watching my screen 24/7 just to save some time."

Discussion insight: @RatulSarna asked embirico about repo-level memories vs global-only, signaling enterprise demand for scoped context. @robschaper called Codex "the number one human skill I suggest people learn to leverage for their work, no matter what you do."

Comparison to prior day: Yesterday Codex computer-use was documented in infrastructure tasks (Raspberry Pi + Tailscale). Today the desktop app receives its highest engagement ever (1182.2 score) while Chronicle adds an entirely new capability — ambient context from screen captures. The product is moving from "tool you prompt" to "assistant that watches."

1.3 Google Antigravity: Shipping Velocity Meets Customer Trust Crisis 🡖¶

@GergelyOrosz documented (113 likes, 7,380 views) a damaging customer story: a paying Antigravity user wanted to track usage, used CodexBar since Antigravity provides no native tool, got banned from Antigravity, tried Gemini CLI as a workaround, and found that banned too — controlled by the same Antigravity team. Customer support told them they were "powerless." In follow-up replies, Gergely added: "Any more questions why Google is not gaining market share across devs? Antigravity silently banning legit, paying users is a recurring pattern."

Meanwhile, @LyalinDotCom (Google) listed eight product releases (134 likes, 7,155 views) with pointed sarcasm: "Otherwise nothing much going on here recently." The list included Gemini 3.1 Flash TTS, Gemini Robotics-ER 1.6, Antigravity updates, Gemini CLI updates, AI Studio updates, macOS native app, Flash Live Thinking, and Gemma4. LyalinDotCom also endorsed using competitor tools (17 likes, 1,285 views) alongside Google's — "if you're a builder you should be exploring all the tools."

@Presidentlin expressed hope (25 likes, 1,348 views) for Gemini 3.5 Pro before Google I/O, noting Google has "so many surfaces" (CLI, Antigravity, Jules, AI Studio, API). @cyburke listed their subscription stack (1 like, 445 views, 3 bookmarks) and noted: "I also have Gemini Pro (free 1 yr) but I'm not using it after Google did the Antigravity ban."

@JulianGoldieSEO continued flooding the feed with Antigravity education content: a 4-hour course (28 likes, 18 bookmarks), a second 4-hour course (3 likes, 10 bookmarks), and two 2-hour courses.

Discussion insight: The Antigravity paradox deepens. Google ships features at pace (8 products in one week), generates hours of educational content, yet the trust problem — banning users without explanation or recourse — directly undermines adoption. GergelyOrosz's thread explicitly shows the consequence: "Dev shrugs; goes back to using Claude Code and Codex."

Comparison to prior day: Yesterday the Antigravity narrative was "generating the most educational content while simultaneously driving power users to Windsurf." Today it escalates: a high-profile tech journalist (113 likes) documents a paying customer banned across two unrelated Google products with no recourse. The trust deficit is now a documented churn driver.

1.4 OpenAI Global Outage: ChatGPT and Codex Down, Status Page Silent 🡕¶

A major OpenAI outage hit ChatGPT and Codex simultaneously. @paul_cal documented the disconnect (12 likes, 1,263 views): "ChatGPT & Codex is down. OpenAI status page says 100% no incidents for today. Twitter still the best downtime check app."

@rehan_hyperion asked (16 likes, 1,764 views): "Chat GPT down for anyone else????" @CryptoNewsHntrs confirmed (7 likes, 589 views) a "MAJOR GLOBAL OUTAGE" affecting ChatGPT web, app, API, and Codex. @HashNuke showed (0 likes, 48 views) the outage hitting both Codex CLI (403 Forbidden) and desktop (reconnection loop), with their usage breakdown graph annotating OpenCode vs Codex traffic.

@altArtist7 criticized (1 like, 22 views) the lack of communication: "ChatGPT and Codex went down, and yet OpenAI and Sam Altman are acting like nothing happened — not even posting anything on X to explain?"

Discussion insight: The outage compounded the pricing upheaval. Users frustrated by rate limit changes were simultaneously locked out of the service entirely. The status page discrepancy — showing "no incidents" during an active global outage — eroded trust in OpenAI's operational transparency.

Comparison to prior day: No outage was reported on April 19. Today's event comes at a particularly bad moment: the same day GitHub announces Copilot signup pauses citing reliability concerns, OpenAI's own services go down. The optics reinforce the narrative that AI infrastructure is straining under demand.

1.5 Anthropic's Claude Code Workshop Goes Viral 🡕¶

A single 30-minute workshop by the head of Anthropic's Coding Agents research team generated four separate high-scoring posts. @RoundtableSpace posted (28 likes, 27 bookmarks, 13,105 views): "THIS 30-MIN WORKSHOP FROM THE CREATOR OF CLAUDE CODE WILL TEACH YOU MORE ABOUT VIBE-CODING THAN 100 YOUTUBE GUIDES." @Ronycoder echoed (6 likes, 6 bookmarks, 133 views): "Instead of watching an hour of Netflix, watch this 30-minute speech." @de1lymoon called it (10 likes, 9 bookmarks, 123 views) "Worth more than 100 paid courses." @goyalshaliniuk added (8 likes, 3 bookmarks, 79 views): "This video will change the way you use Claude forever."

The combined bookmark rate across these posts is notable: 45 bookmarks across 13,470 total views (0.33%). The RoundtableSpace post alone had a 0.21% bookmark rate with 27 bookmarks, indicating strong reference intent.

Discussion insight: A reply from @themccodes captured the sentiment: "30 minutes from someone who actually built it > 3 hours of 'hey guys welcome back to my channel'." The preference for authoritative primary-source content over derivative tutorial content is a recurring signal.

Comparison to prior day: Yesterday's educational content was about Ollama + Copilot CLI step-by-step guides. Today the pattern shifts: a single Anthropic workshop from the tool's creator generates more engagement than all the tutorial content combined. Authority of source matters more than production quality.

1.6 Vibe Coding Backlash Sharpens With Industry Frameworks 🡕¶

The vibe coding backlash moved from anecdotal complaints to structured industry response. @nixcraft dismissed it (11 likes, 954 views): "vibe coding and almost all AI tools are garbage hype created. so i am not surprised people without any coding or engineering skills can't write code." He was quoting @CtrlAltDwayne who observed: "The vibecoders are starting to give up because they're learning AI can't do everything for you."

@thoughtworks published (3 likes, 3 bookmarks, 214 views) "Beyond Vibe Coding: The Five Building Blocks of AI-Native Engineering," arguing that production-grade software in 2026 requires orchestration, not prompts. The article defines five pillars: structured prompts, CI/CD integration, test-driven AI, version control audit trails, and human oversight. It references BMAD Method and GitHub's SpecKit as concrete alternatives to open-ended prompting.

@ThePracticalDev shared (1 like, 2 bookmarks, 424 views) a developer's experience with BMAD: "Vibe coding optimizes for output at the cost of understanding. This dev found BMAD keeps you as the orchestrator, not a passenger."

@pxue predicted (2 likes, 46 views): "Vibe coding will reach its peak in the summer and then die with a whimper."

@Layton_Gott offered a practical fix (1 like, 12 views): "Stop debugging with Claude Code. When it can't fix a bug in 2 attempts, the bug isn't the problem. Your context is. Close the session. Open a fresh one. Paste only the failing function and the error."

Discussion insight: The backlash is bifurcating. On one side, experienced engineers dismiss vibe coding entirely. On the other, methodologies like BMAD and SpecKit attempt to preserve AI-assisted speed while adding engineering discipline. The common thread: unstructured prompting alone is insufficient for production work.

Comparison to prior day: Yesterday the "vibe coding hangover" was named as an emerging backlash term. Today it advances to structured counter-proposals from Thoughtworks and the BMAD community. The conversation is moving from "vibe coding has problems" to "here is what replaces it."

1.7 Local and Free AI Coding Options Expand 🡒¶

The free/local coding story continued from yesterday's Ollama + Copilot CLI breakthrough. @python_spaces shared a step-by-step guide (13 likes, 8 bookmarks, 535 views) for running Copilot CLI locally with Ollama: "No API costs. 100% local."

@JulianGoldieSEO promoted Gemma 4 (10 likes, 4 bookmarks, 654 views) as "a free AI that beats GitHub Copilot" that "runs right on your own laptop" via Ollama and Cursor. He separately listed three free Claude Code paths (4 likes, 5 bookmarks, 108 views): GLM 5.1 via Ollama cloud models, Gemma 4 locally, and Elephant Alpha via OpenRouter.

@hampsonw published BenchLocal results (1 like, 95 views) showing Gemma-4-31b-it scoring 90.17 average across six benchmarks, beating GPT-5.4 (88.67), GPT-5.4-mini (86.83), and Qwen3.6-35B (85.67).

@llmdevguy pushed back (1 like, 84 views) against expensive subscriptions: "No, don't do that. $1000 per month is ridiculous. Instead use MiniMax 2.7 ($20/m, 180,000 requests/month) or Ollama Cloud (GLM-5.1, M2.7, $20/m)."

Discussion insight: The timing of local/free content is not coincidental. GitHub pausing signups and tightening rate limits on the same day amplifies demand for alternatives that avoid subscription dependency entirely. The BenchLocal data showing a local model outperforming GPT-5.4 gives the local-first argument quantitative backing.

Comparison to prior day: Yesterday Ollama shipped the Copilot CLI integration. Today the ecosystem responds with guides, benchmarks, and alternative model recommendations. The local-first option is transitioning from announcement to adoption infrastructure.

2. What Frustrates People¶

GitHub Copilot Downgrades Without Warning — High¶

@ZakSMorris reported (4 likes, 168 views) paying GBP 39/month for Pro+ and having it "downgraded overnight. Usage limits tightened. Opus 4.5 + 4.6 removed. Same price. No warning, no adjustment." @edandersen flagged (33 likes, 5,030 views) the org-level budget flaw. @prashant_hq noted in replies: "Before today, you could give a 100 item task to opus in opencode and it would keep autocompacting and working on it for hours, and it would cost you 1 request. They gave 300 in $10 plan." Coping mechanism: switching to local models or stacking cheaper subscriptions.

Google Antigravity Bans Paying Customers With No Recourse — High¶

@GergelyOrosz documented (113 likes, 7,380 views) a paying Antigravity customer banned for using CodexBar (a usage tracker), then found their Gemini CLI access also revoked by the Antigravity team. Customer support said they were "powerless." The user gave up and returned to Claude Code and Codex. @cyburke confirmed stopping Antigravity usage "after Google did the Antigravity ban." Coping mechanism: abandoning the platform entirely.

OpenAI Outage With Silent Status Page — Medium¶

@paul_cal showed (12 likes, 1,263 views) ChatGPT and Codex down while OpenAI's status page reported "No incidents." Multiple users reported 403 Forbidden errors across CLI, desktop, and web. @altArtist7 noted: "OpenAI and Sam Altman are acting like nothing happened." Coping mechanism: Twitter as the de facto status page.

Codex Frontend Quality Gap — Medium¶

@rileybrown (276 likes), @haider1 (18 likes), and @TeeDevh (3 likes) all identified the same gap: Codex handles backend logic well but produces poor UI compared to Claude. @haider1 noted: "i am mostly sticking with 5.3 codex because gpt-5.4 is too token-intensive." Coping mechanism: switching to Claude/Opus for frontend work, using Codex only for logic.

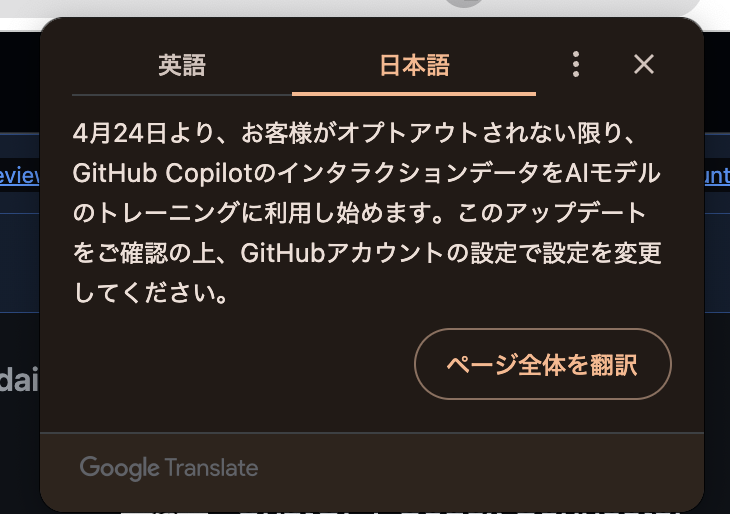

Copilot Training Data Opt-Out Deadline — Low¶

@zento_ai surfaced (3 likes, 747 views) a notification that starting April 24, GitHub will use Copilot interaction data for AI model training unless users opt out. The screenshot was in Japanese, suggesting it appeared to users in localized settings without prominent English-language announcement.

3. What People Wish Existed¶

Codex Design Quality Matching Opus 4.7¶

@rileybrown framed the gap (276 likes, 8,568 views): "If OpenAI releases a model that is as good at design as Opus 4.7, Codex App will be WAY ahead." @TeeDevh asked directly (3 likes, 131 views): "Is there a way to get good UI out of Codex?" @haider1 added (18 likes, 1,397 views): "i really hope openai improves front-end quality in the next gpt-5.x version, so i would not need to switch to other models." The demand signal is clear and high-volume: backend capability is strong, frontend/design is the blocking gap.

Per-Project Budget Controls for Copilot Enterprise¶

@edandersen stated (33 likes, 5,030 views) the problem: "you cannot give a subset of users credits for a special project." Org-wide-only budget allocation means a single developer can exhaust the entire company's premium request budget. The 5,030 views and 4 bookmarks suggest this resonates with enterprise buyers.

Mobile Codex Client¶

@gpsamson built Flodex (1 like, 22 views) because "Codex doesn't support mobile control yet." The app connects to the Codex server over Tailscale, showing a task list interface for managing threads on mobile. The fact that a user built their own mobile client signals unmet demand.

Repo-Level Memories for Codex¶

@RatulSarna asked in the Chronicle announcement thread: "Did you consider an option repo-level memories? Any particular reason you went with global only?" Chronicle's current implementation stores memories globally; project-scoped context would allow different memory sets for different codebases.

4. Tools and Methods in Use¶

| Tool | Category | Sentiment | Strengths | Limitations |

|---|---|---|---|---|

| OpenAI Codex | Agent platform | (+) | Desktop app praised as "#1 agentic engineering" experience (1182.2 score); Chronicle adds screen-context memory; reasonable usage on $100 plan | Frontend/design quality gap vs Opus; global outage on Apr 20; GPT-5.4 "too token-intensive" |

| GitHub Copilot | Cloud IDE agent | (+/-) | Massive demand (signups paused); Pro+ 5X limits; org plans still active | Signup pause; Opus removed from Pro; medium thinking only for Opus 4.7; org budgets not granular; training data opt-out April 24 |

| Claude Code | Terminal agent | (+) | Workshop from creator goes viral (13K+ views); 25 mentions across dataset; used in AGI House algorithm discovery | No mobile support; subscription complexity unchanged |

| Google Antigravity | IDE | (-) | 8 product releases in one week; 10+ hours of course content available | Paying customers banned with no recourse; customer support "powerless"; cross-product bans via Gemini CLI |

| OpenCode | Open-source terminal agent | (+) | Cloudflare built CI-native code reviewer with it; theme config documented | K2.6 model quality "dogshit" per one user; /theme command broken |

| Ollama | Local model server | (+) | Copilot CLI integration from yesterday continues to propagate; zero-cost local agent runs | Still being discovered; guides needed |

| Gemma 4 (31b-it) | Local model | (+) | BenchLocal: 90.17 avg, beating GPT-5.4 (88.67); runs locally on laptop | Not tested in all harnesses; requires Ollama setup |

| Cursor | IDE | (+) | Still preferred by some as daily driver for fewer "stupid errors" | Identified as "LLM wrapper" with limited unique differentiation |

| BMAD Method | Spec-driven framework | (+) | Endorsed by Thoughtworks; prevents "agent thrashing"; multi-role orchestration | New; adoption depth unknown |

5. What People Are Building¶

| Project | Builder | What it does | Problem it solves | Stack | Stage | Links |

|---|---|---|---|---|---|---|

| Chronicle | @embirico (OpenAI) | Builds memories from periodic screenshots for Codex context | Restating context every session | macOS, Codex, GPT-5.4 | Research preview (Pro only) | Announcement |

| Flodex | @gpsamson | Mobile client for Codex via Tailscale | No native mobile Codex control | iOS, Tailscale, Codex API | Alpha (self-use) | Post |

| Agent Arcade | @DanWahlin | Retro arcade overlay that runs while agents work | Idle time waiting for agents | Tauri, Phaser, TypeScript, Copilot CLI | Shipped (open source) | Post, Repo |

| Mission Control v2.0 | @nyk_builderz | Agent ops platform with observability, memory graph, security | Coordinating multiple AI agents | OpenCode, Hermes, Claude, Codex | Shipped (4.2k stars, 726 forks) | Post |

| Plannotator 0.18.0 | @plannotator | Annotation/plan review with agent support | Code review collaboration with agents | Cloudflare, OpenCode | Shipped | Post |

| Polymarket analytics | @RetroChainer | 400M trade analysis with Monte Carlo simulation | Prediction market edge discovery | Mac Mini, Claude Code, XGBoost | Shipped (personal) | Post |

| NBA betting model | @RetroChainer | XGBoost model combining nba_api, Polymarket, DraftKings | Finding sports betting edge from data divergence | Mac Mini, Claude Code, XGBoost | Shipped (personal) | Post |

| CI-native code reviewer | @Cloudflare | AI code reviewer integrated into CI pipeline | Manual code review bottleneck | OpenCode | Shipped | Post |

| OmniSwap | @DefiHimanshu | DEX aggregator for best swap rates | Fragmented DeFi liquidity | Hackathon project | Alpha (hackathon winner) | Post |

| Local Hive | @TODIofBaltomore | Farm-to-table delivery app | UI/UX designer bridging to development via AI | Figma, Codex | Design phase | Post |

6. New and Notable¶

OpenAI Chronicle: Screen-Context Memory for Codex¶

@embirico (OpenAI) launched (90 likes, 4,124 views) Chronicle as a research preview for Pro subscribers on macOS. It takes periodic screenshots and builds markdown-based memories so Codex can help with what you have been working on without restating context. @Techmeme covered it (10 likes, 1,697 views). Privacy is opt-in only, with explicit warnings. This is the first major ambient-context feature in a coding agent.

GitHub Pauses All Individual Copilot Signups¶

@GHchangelog announced the pause of new Pro, Pro+, and Student signups, with tightened usage limits and Opus models removed from Pro tier. @edzitron's reporting added that internal documents show weekly Copilot costs doubled since January and token-based billing is planned for later in 2026.

Cloudflare Builds CI-Native Code Reviewer With OpenCode¶

@Cloudflare shared (26 likes, 20 bookmarks, 2,079 views) how they built a CI-native AI code reviewer using OpenCode to help engineers "ship better, safer code." The 20 bookmarks relative to views (0.96% bookmark rate) indicate strong practitioner interest. This is the first documented enterprise CI integration using OpenCode specifically.

BenchLocal: Local Gemma-4 Beats GPT-5.4¶

@hampsonw published (1 like, 95 views) BenchLocal results showing Gemma-4-31b-it averaging 90.17 across six benchmarks, outperforming GPT-5.4 (88.67). Per-benchmark breakdowns show Gemma-4 winning on bugfind (93 vs 95), dataextract (90 vs 86), and instructfollow (97 vs 99) categories. Low engagement but high signal for local-first viability.

Copilot Interaction Data Training Starts April 24¶

@zento_ai surfaced (3 likes, 747 views) a GitHub notification that Copilot interaction data will be used for AI model training starting April 24 unless users opt out. The notification appeared in Japanese localization, suggesting it may not have received prominent English-language coverage yet.

Claude Code Agents Approach State-of-the-Art in Algorithm Discovery¶

@0x_Asuka reported (8 likes, 70 views) from an AGI House event: 27 Claude Code agents produced Vehicle Routing Problem algorithms within 3% of state-of-the-art in 30 seconds. @vidalthi, identified as the "#1 researcher in his field," was described as "amazed" after watching the agents "revisit and rediscover 30 years of VRP literature" in approximately 4 hours.

7. Where the Opportunities Are¶

[+++] Subscription Cost Optimization Layer — GitHub Copilot costs doubled since January (@edzitron, article). Signups are paused. Token-based billing is coming. @thisisjonc showed only 10% weekly usage on $100/month. @llmdevguy listed alternatives at $20/month. A tool that monitors actual token burn across Copilot, Claude, and Codex subscriptions, recommends optimal plan allocation, and auto-routes requests to the cheapest capable model would address a structural, growing need as subsidized pricing ends.

[+++] Enterprise Budget Granularity for AI Coding — @edandersen identified (33 likes, 5,030 views) that Copilot premium request budgets are org-wide only with no per-user or per-project allocation. As token-based billing approaches, enterprises need departmental, project-level, and per-user budget controls with alerts. GitHub does not offer this today. A third-party budget governance layer for AI coding tools would fill a gap that will widen as usage-based pricing becomes standard.

[++] Ambient Context for Coding Agents — Chronicle (@embirico, post) is Pro-only, macOS-only, and Codex-only. A cross-platform, cross-agent ambient context layer — capturing screen state, terminal output, and browser activity to feed into Claude Code, Codex, and OpenCode — would address the same "stop restating context" problem without vendor lock-in. @RatulSarna's question about repo-level memories signals enterprise demand for scoped context.

[++] Mobile-First Codex/Agent Client — @gpsamson built Flodex as a personal workaround because Codex has no mobile support. A polished mobile client for managing agent sessions across Codex, Claude Code, and OpenCode — viewing progress, approving changes, managing queues — would serve the growing population of developers who monitor long-running agent tasks away from their desk.

[+] Spec-Driven AI Development Tooling — Thoughtworks published a framework for "AI-native engineering" that replaces vibe coding with structured spec-to-code pipelines. BMAD Method and GitHub SpecKit are referenced but early. Tools that make spec-driven AI development as easy as vibe coding — auto-generating specs from conversations, maintaining spec-code traceability, flagging drift — would capture the growing audience that finds vibe coding insufficient for production work.

8. Takeaways¶

-

GitHub Copilot's pricing upheaval is the day's defining policy event. Microsoft paused all individual signups, tightened rate limits, removed Opus from Pro, and plans token-based billing as weekly costs doubled since January. This is the clearest signal yet that subsidized AI coding is ending. (@edzitron, exclusive; @GHchangelog via @chribjel, post)

-

Codex desktop receives its highest praise ever while launching ambient context. At 1182.2 score and 20,128 views, @0xSero's endorsement is the day's highest-engagement post by 3x. Simultaneously, Chronicle adds screen-context memory — the first ambient context feature in a major coding agent. The product is accelerating from prompted tool to observant assistant. (@0xSero, post; @embirico, post)

-

Google Antigravity's customer trust problem is now a documented churn driver. A paying customer was banned across two unrelated Google products with support admitting they were "powerless." The consequence is explicit: "Dev shrugs; goes back to using Claude Code and Codex." Google's shipping velocity (8 products in one week) cannot compensate for actively driving away paying users. (@GergelyOrosz, post)

-

The OpenAI outage exposed status page unreliability at the worst possible moment. ChatGPT and Codex went down globally on the same day GitHub paused Copilot signups citing reliability concerns. The OpenAI status page showed "No incidents" during the active outage. Trust in operational transparency took a hit across both Microsoft and OpenAI simultaneously. (@paul_cal, post)

-

Local model benchmarks now show quantitative parity with cloud models. BenchLocal results show Gemma-4-31b-it (90.17) outperforming GPT-5.4 (88.67) across six coding benchmarks. Combined with yesterday's Ollama + Copilot CLI integration and today's pricing upheaval, the local-first option moves from niche to structurally advantaged. (@hampsonw, post)

-

Vibe coding backlash is graduating from complaints to competing frameworks. Thoughtworks published a five-pillar framework for "AI-native engineering." BMAD Method is gaining endorsements. The conversation has moved from "vibe coding has problems" to "here are the structured alternatives." This signals the practice is mature enough to generate its own opposition literature. (@thoughtworks, post; @ThePracticalDev, post)

-

Anthropic's content strategy beats everyone else's by going straight to the source. A single 30-minute workshop from the Claude Code creator generated more combined engagement (13K+ views, 45 bookmarks) than hours of third-party tutorials. The preference for authoritative primary-source content over derivative tutorial content is a clear, repeating pattern. (@RoundtableSpace, post)