Twitter AI - 2026-04-17¶

1. What People Are Talking About¶

1.1 Opus 4.7 Breaks a Secret Author-Attribution Benchmark (🡕)¶

The day's highest-engagement post by a wide margin came from @KelseyTuoc, who revealed a private benchmark (953 likes, 194 bookmarks, 95,585 views): give an AI model 1,000 words of unpublished fiction and ask it to identify the author. Every prior model failed. Opus 4.7 is the first to succeed reliably -- 5 out of 5 in the API with maximum thinking enabled. The text was a heist scene, a spy novel opening, with no overlap with the author's published work. A reply from @blanplan noted the test probes whether a model has "learned to distinguish voice from content," collapsing stylistic fingerprints into topic clusters being the failure mode of prior models.

@jon_stokes called this (102 likes, 38 bookmarks, 27,299 views) "the most important post you can read right now about AI capability benchmarks," quoting the Bezos anecdote where calling Amazon's own 1-800 line revealed a 10-minute wait hidden by dashboard metrics. Stokes suggested "the Opus 4.7 launch may have been the 10-minute phone call" -- a real-world test that punctured defended benchmark numbers.

Discussion insight: @KelseyTuoc added that the model "sometimes sabotages itself" in chat mode due to adaptive thinking, succeeding only when prodded to think more. The implication: capability exists but is gated by inference configuration, not model weights.

Comparison to prior day: April 16 covered benchmark saturation and open-world evaluations (Section 1.2). Today personalizes it -- one journalist's private test revealed a capability no public benchmark measures, reinforcing the prior day's argument that standard evals systematically undercount real abilities.

1.2 UK Sovereign AI Launches with Cosine Leading Coding Benchmarks (🡕)¶

@SebJohnsonUK profiled CosineAI (246 likes, 58 bookmarks, 9,340 views), a British frontier lab that has "outperformed OpenAI, Anthropic, Mistral, and DeepSeek on coding benchmarks for two years running." UK Sovereign AI awarded Cosine 500,000 GPU hours on Isambard-AI, one of Europe's most powerful supercomputers, making it possible "for the first time EVER" to build and deploy a fully sovereign AI model entirely on British soil with no foreign dependency. @AlistairPullen, Cosine's founder, confirmed the GPUs are "allowing us to embark on work we would have found near impossible otherwise."

@lukeharries celebrated the broader initiative (20 likes, 508 views): "500M dedicated to scaling British AI startups."

Discussion insight: @Capclarity asked whether the plan targets government procurement; @SebJohnsonUK confirmed government procurement is "one of the benefits." The defence and national security focus positions this as purpose-built sovereign infrastructure, not general-purpose compute subsidy.

Comparison to prior day: April 16 covered the UK Sovereign AI launch event and WIRED reporting (Section 6). Today adds the specific Cosine benchmark claims and the 500K GPU-hour allocation, moving from announcement to concrete capability evidence.

1.3 AI Engineer Demand Surges While Prompt Engineer Salaries Crash (🡕)¶

@hiiinternet reported (71 likes, 94 bookmarks, 6,071 views) that AI engineer is the role in highest demand across 100+ VC-backed startups (a16z, Sequoia, YC). Average salary bid with interview requests: $207K. Required capabilities include end-to-end AI product experience, fluency in retrieval, memory, agent frameworks, context engineering, and eval pipelines -- "not just prototypes, but systems handling real users."

Meanwhile, @BoringCareers documented the prompt engineer salary correction (15 likes, 13 bookmarks, 845 views): the 2023 hype peak of $250K-$300K has dropped to a 2026 median of $110K-$150K. "Basic prompting is widespread. Value now: systematic prompt optimization, evaluation frameworks, and AI system integration. Prompting alone is no longer a career."

@n_wittensleger added the recruiter's view (29 likes, 1,473 views): "Startups that went all-in on AI automation are now scrambling to backfill with senior engineers who actually understand the systems. The irony is that AI talent is now MORE expensive."

Discussion insight: @TechLayoffLover described (17 likes, 1,152 views) a bootcamp grad receiving a final warning for missing "AI productivity benchmarks" scored by an internal LLM -- her "human productivity coefficient" dropped to 0.73 because she writes comments and unit tests instead of shipping AI-generated components. The post drew attention to the tension between code quality and AI-first development metrics.

Comparison to prior day: New topic at this specificity. Prior days noted AI engineering as a growing field; today provides concrete salary data ($207K average), volume (113 bids/week), and the simultaneous crash of the prompt engineering career path.

1.4 Local AI on Apple Silicon Reaches New Speeds (🡕)¶

@sudoingX endorsed (147 likes, 76 bookmarks, 17,338 views) @ivanfioravanti as the essential source for MLX benchmarks on Apple Silicon. The benchmark that triggered the post: Qwen 3.6-35B-A3B running on M5 Max via MLX at 121 tokens/second for single requests and 550 tokens/second on batch 32. "qwen 3.6 dropped less than 24 hours ago and ivan already has M5 Max running it."

@ivanfioravanti separately noted (8 likes, 3 bookmarks, 1,212 views) the Pico AI Server as another platform to add to his benchmark suite. The community response was strong: @pupposandro called ivanfioravanti "the Mac goat. first place I check for MLX numbers, every time."

Discussion insight: The sudoingX post frames local AI benchmarking as a two-person ecosystem: sudoingX covers Nvidia and consumer GPUs, ivanfioravanti covers Apple Silicon. "Different lanes, same mission, finding the best local setup for every builder regardless of what hardware you bought." The 76 bookmarks signal practitioners saving this as a reference.

Comparison to prior day: New topic. Prior days did not surface Apple Silicon benchmarking at this level of specificity. The 550 tok/s batch-32 throughput on a consumer laptop chip is a notable milestone for local inference.

1.5 Compute Scarcity Intensifies: Data Centers Delayed, CPUs Become the Bottleneck (🡕)¶

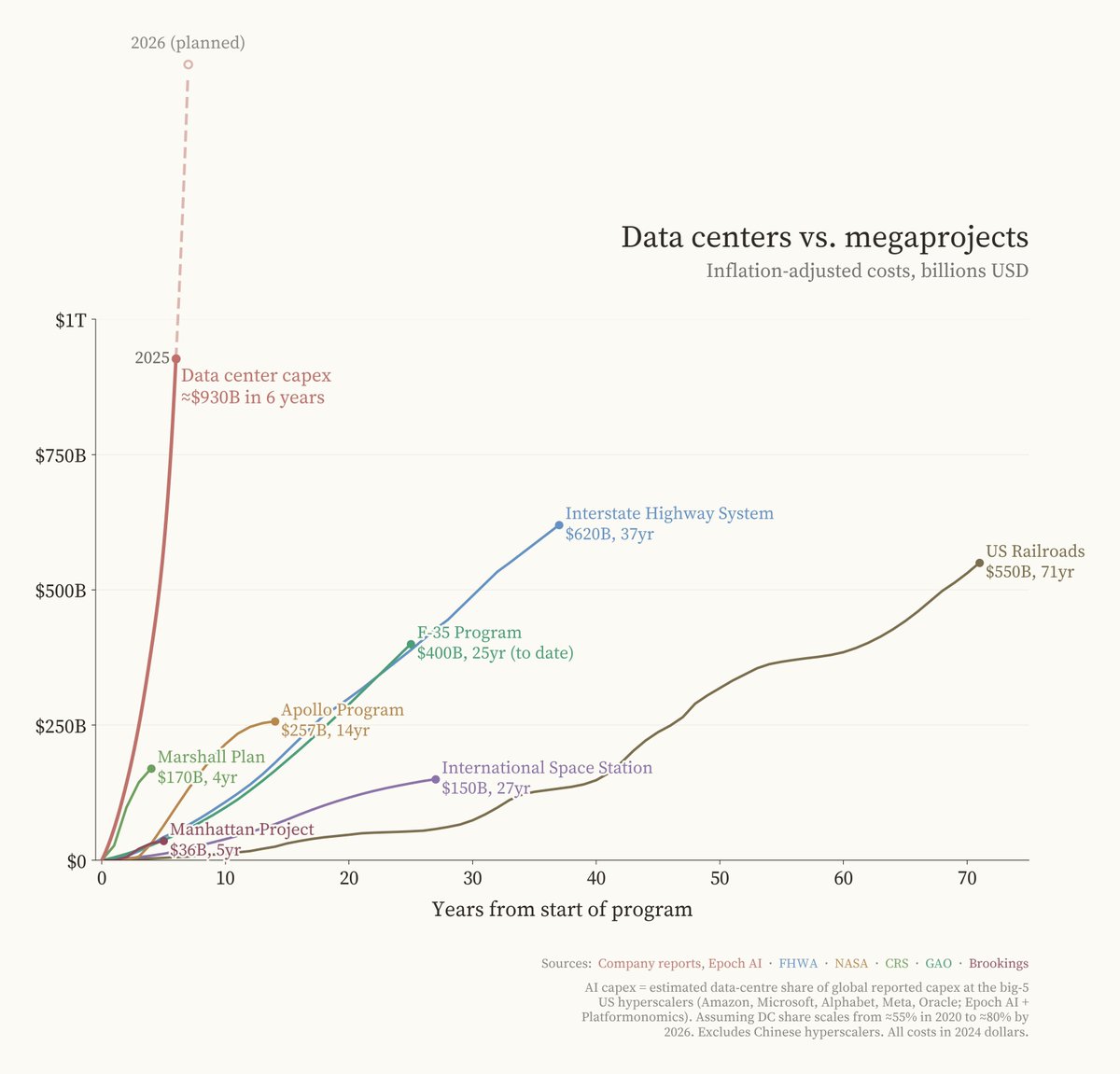

Multiple posts converged on compute infrastructure constraints. @Crypto_McKenna argued (23 likes, 5 bookmarks, 2,359 views) that "there isn't enough compute. Inference is the next part of the AI trade." The attached chart compared data center capex ($930B in 6 years) against historical megaprojects: the Interstate Highway System ($620B over 37 years), the F-35 Program ($400B over 25 years), and the Apollo Program ($257B over 14 years).

@JacquesBahou provided specifics (25 likes, 3 bookmarks, 2,615 views): "50% of U.S. data centers expected for 2026 likely delayed or cancelled." GPU rental pricing is moving sharply higher. Microsoft has GPUs "sitting in inventory because there is not enough powered, ready capacity to plug them in." IREN has ordered 50K B300 GPUs with 4.5GW of total capacity. A reply from @SenorScience noted: "Rubin is delayed. Hopper is winding down to 7% of shipments. Blackwell is the only game in town for the next 18 months."

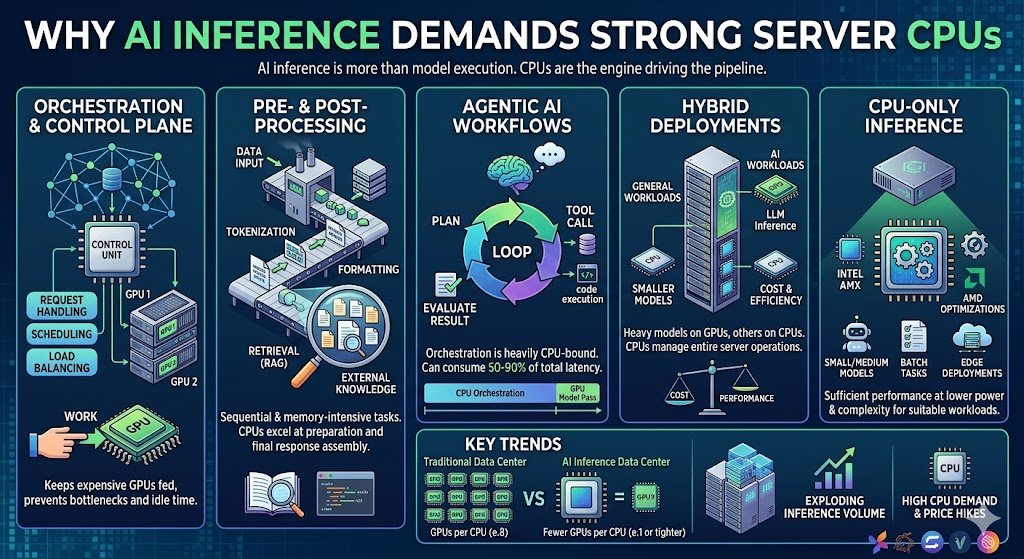

@TradexWhisperer shifted the focus to CPUs (5 likes, 5 bookmarks, 1,474 views): in agentic pipelines, the orchestration layer accounts for 50-90% of total system latency, making CPU performance the rate-limiting variable. AMD CEO Lisa Su disclosed that server CPU demand has "far exceeded" prior forecasts. Meta and AMD announced a $60B five-year agreement anchored by 6th-gen EPYC "Venice" CPUs. Deloitte forecasts inference will make up two-thirds of all AI compute by 2026.

Discussion insight: @_ashleypeacock noted (7 likes, 3 bookmarks, 1,331 views) that running a single instance of Kimi K2.5 requires at least 8 H100 GPUs -- roughly $250,000 -- illustrating the cost floor for frontier model deployment.

Comparison to prior day: April 16 covered power delivery as the binding constraint on infrastructure deals (Section 1.3). Today adds the demand-side story: half of planned US data centers delayed, CPUs as the new bottleneck for agentic workloads, and concrete corporate commitments (Meta-AMD $60B).

1.6 LLMs Fail the Scientific Discovery Test (🡒)¶

Two independent posts amplified a paper from Harvard, MIT, and 30+ institutions. @AiwithYasir shared (20 likes, 16 retweets, 228 views) and @FarhanBuildsAI echoed (8 likes, 7 retweets, 179 views) "Evaluating Large Language Models in Scientific Discovery." Instead of asking models trivia, the paper tests the full discovery loop: hypothesis formation, experiment design, result interpretation, and belief updating across biology, chemistry, and physics.

Key findings: LLMs suggest plausible hypotheses but are "brittle at everything that follows." They overfit to surface patterns, struggle to abandon bad hypotheses when evidence contradicts them, confuse correlation for causation, and hallucinate explanations when experiments fail. The most striking result: "High benchmark scores do not correlate with scientific discovery ability." Models that dominate standard reasoning tests "completely fail when forced to run iterative experiments and update theories."

Discussion insight: The paper's core takeaway -- "scientific intelligence is not language intelligence" -- reinforces the day's broader benchmark-skepticism theme. Real science requires memory, hypothesis tracking, causal reasoning, and the ability to say "I was wrong."

Comparison to prior day: April 16 covered AI accelerating academic research (Section 1.5) with an optimistic 10x-100x framing. Today adds a sobering counterpoint: LLMs cannot yet do the actual work of discovery, only the language of it.

1.7 AI Governance Momentum Builds Across Multiple Nations (🡒)¶

@TheEconomist published (8 likes, 11,700 views) that "America's free-wheeling treatment of AI looks as if it is coming to an end," calling it "a wake-up call for safety." A reply from @NexasHub noted the EU AI Act's first phase starts August 2, 2026 -- "that date matters for startup hiring and compliance budgets more than another safety panel." @thefipro added: "Every tech cycle from crypto to social media follows the same arc: wild deregulation, then a crisis, then a regulatory crackdown."

@ForeignAffairs warned (1,471 views) that "autonomous cyber-agents are already operational, and policymakers are unprepared." India formed an apex AI governance body (396 views) chaired by IT Minister Ashwini Vaishnaw. @ianmiles cited Stanford's AI Index 2026 (24 likes, 5,017 views) ranking the UAE among the world's top AI hubs, calling it "well ahead of the rest of the world when it comes to governance."

Discussion insight: @iamtrask offered a structural argument (16 likes, 4 bookmarks) that job automation, privacy, copyright, bias, surveillance, and loss-of-control are all "special cases of AI either pulling power away from people who should have it, or putting power in the hands of those who shouldn't." The root solution, per iamtrask, is decentralized AI.

Comparison to prior day: April 16 covered the AI Risk Evaluation Act and Canadian content labeling recommendations (Section 1.4). Today widens the geographic scope -- US regulatory pivot, EU deadline, India governance body, UAE leadership -- while adding the structural power-concentration framing.

1.8 Claude Design Launch Threatens AI Wrapper Startups (🡕)¶

@rexan_wong reacted (12 likes, 4 bookmarks, 1,233 views) to Anthropic's Claude Design announcement: "rip to thousands of ai design startups today. its becoming clearer everyday that if your startup is basically an AI wrapper you're ngmi. solve a problem super niche and add layers to your solution so it becomes unreplaceable." Reply from @sidi_jeddou_dev pushed back: "this is a very low quality to kill design tools. Also Anthropic is just here shipping a lot of things and trying to be 'everything' AI lab which is very wrong."

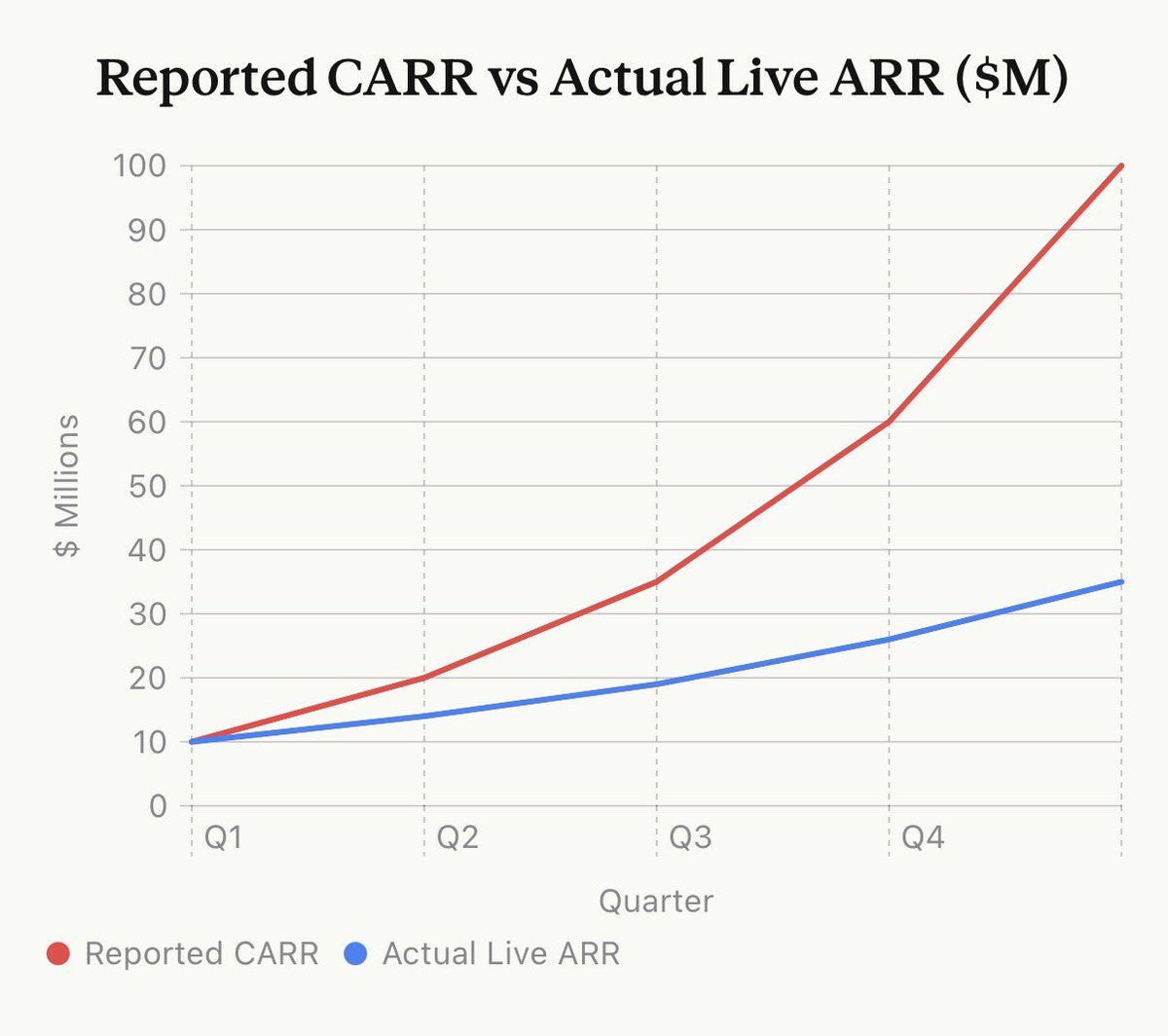

@scottastevenson exposed a deeper problem (3 likes, 3 bookmarks): "Contracted ARR" -- AI startups sign 3-year enterprise deals with Year 1 at $1M and Year 3 at $3M, then report $3M as ARR. With 12-month opt-out clauses, "by Q5 the company is trumpeting ~$100M 'ARR' while actual cash-generating ARR is ~$35M." He predicted "a wave of enterprise AI companies may collapse."

@nurijanian offered a contrarian take (1 like, 2 bookmarks): "Everyone assumes AI will democratize startups. One person with Claude can do the work of ten, so we'll get a thousand small companies instead of a few big ones. I think the opposite is at least as likely."

Discussion insight: @haridigresses estimated (1 like) that "50%+ of enterprise AI startups collapse" is a question of "when not if." The combination of platform risk (Claude Design), revenue inflation (contracted ARR), and incumbent advantage creates a three-way squeeze on AI-wrapper startups.

Comparison to prior day: New topic at this level of specificity. April 16 covered Canva AI 2.0 as an agentic design tool but did not surface the broader AI wrapper startup vulnerability or the contracted ARR dynamic.

2. What Frustrates People¶

Benchmark Metrics Disconnected from Real Capability -- High¶

@jon_stokes called out (13 likes, 1,425 views) "confirmation bias all the way down" in AI discourse, "from the X discourse to the METR benchmarks down into the mechanics of what RL actually is." @DrBrianKeating amplified (5 likes, 3 bookmarks, 864 views) a video with Dr. Vivienne Ming titled "Why AI Benchmarks Fail." @hemzadev attempted to bridge the gap (3 likes, 4 bookmarks, 647 views) by explaining benchmark names "in human terms" rather than numbers. The frustration spans from researchers questioning evaluation validity to practitioners unable to translate scores into deployment decisions.

AI Productivity Metrics Replacing Code Quality -- Medium¶

@TechLayoffLover described (17 likes, 1,152 views) an engineer receiving a final warning because her "human productivity coefficient" scored 0.73 on AI-assisted development metrics -- she was writing comments and unit tests instead of copy-pasting AI output. The productivity metrics were "designed by a 23-year-old PM who's never written production code." @n_wittensleger confirmed the recruiting-side consequence: startups that over-automated are now "scrambling to backfill with senior engineers who actually understand the systems."

LLM Medical Responses Failing Audits -- Medium¶

@SiddiqAtActyte cited a BMJ Open audit (1 like, 6 views) finding "nearly 50% of LLM medical responses are problematic." The post asked: "We are trusting algorithms with our health" -- but the audit data suggests that trust is not yet warranted at clinical reliability levels.

3. What People Wish Existed¶

Ultra-Long-Horizon AI Benchmarks¶

@rronak_ called (17 likes, 3 bookmarks) ultra-long-horizon benchmarks "the next frontier" and expressed anticipation for "when I can have AI supervise month-long research bets." @MatternJustus built FrontierSWE (69 likes, 4,011 views) specifically because no existing benchmark quantifies multi-day agent coding capabilities. @EpochAIResearch tested (29 likes, 1,764 views) 8 candidate curves against 4 AI capability metrics including METR's 50% time horizon, seeking curves that predict future performance. The gap between what agents can reportedly do (multi-day research, massive migrations) and what evaluations can measure remains wide.

Scientific Discovery Evaluation Beyond Q&A¶

The Harvard/MIT paper surfaced by @AiwithYasir and @FarhanBuildsAI defines a specific missing capability: evaluating LLMs across the full discovery loop -- hypothesis, experiment, observation, revision -- rather than one-shot reasoning. The paper's finding that benchmark scores do not predict scientific discovery ability means the evaluation infrastructure itself needs rebuilding for iterative, real-world research tasks.

Startup Strategy for the AI Era¶

@asmartbear (Jason Cohen, WP Engine founder) argued (2 likes, 1 bookmark, 201 views) that "Disruption Theory's been a staple of startup strategy, but it does not apply to AI. Incumbents do not regard AI as 'just a toy,' and are not waiting around while startups disrupt them." Startups need a new strategic framework. @nurijanian echoed: AI may concentrate power in incumbents rather than democratize it.

4. Tools and Methods in Use¶

| Tool | Category | Sentiment | Strengths | Limitations |

|---|---|---|---|---|

| Claude Opus 4.7 | Frontier LLM | (+) | First to pass author-attribution test; strong adaptive thinking in API mode | Inconsistent in chat mode; "sabotages itself" with adaptive thinking |

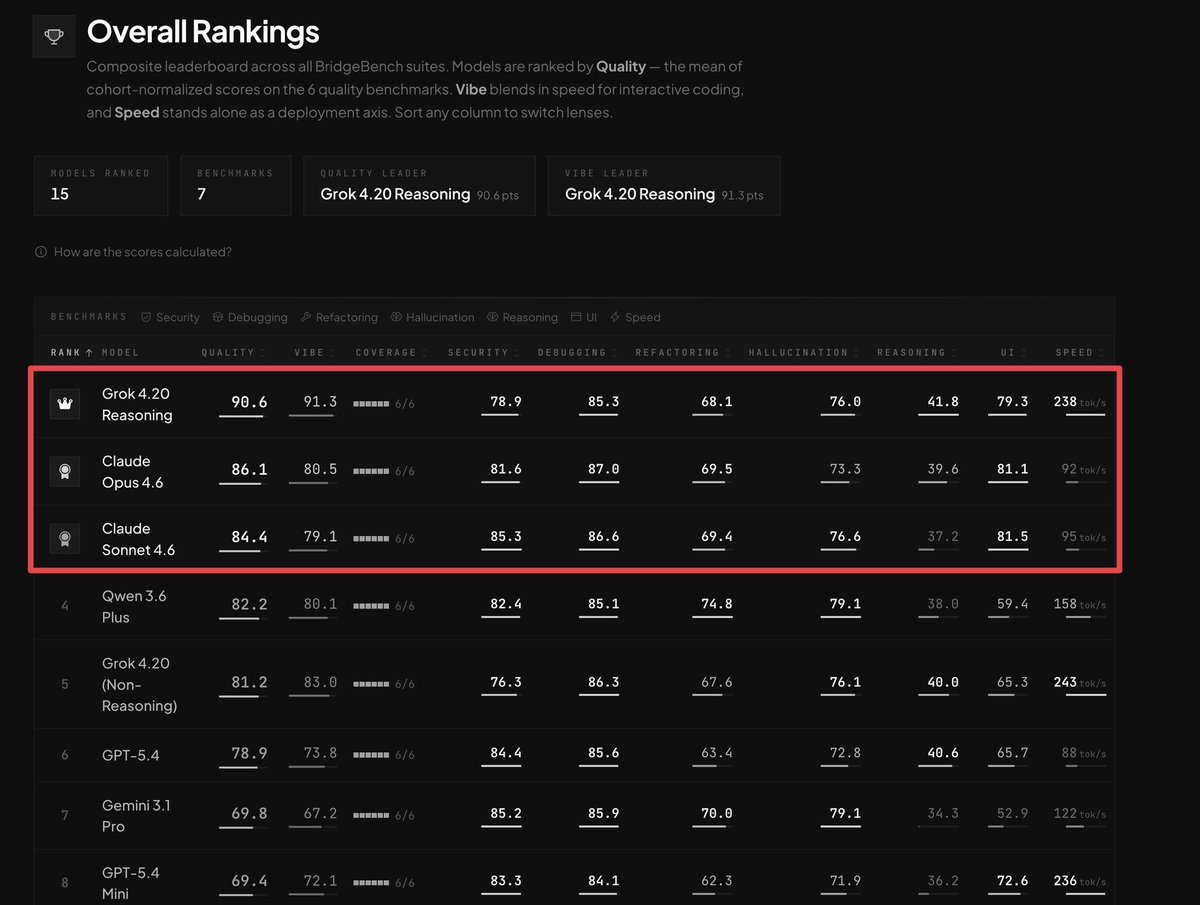

| Grok 4.20 Reasoning | Frontier LLM | (+) | #1 on BridgeBench (90.6 Quality); leads in multi-step logic | 238 tok/s; slower than non-reasoning variant at 243 tok/s |

| MLX (Apple Silicon) | Local inference | (+) | 121 tok/s single, 550 tok/s batch-32 for Qwen 3.6 on M5 Max | Apple Silicon only; requires M-series hardware |

| Google AI Studio | AI development platform | (+) | Broad creative use cases; Lyria 3 music, Flash TTS, design previews | Showcase format; production readiness unclear |

| evalstats | AI evaluation statistics | (+) | Bootstrapped CIs, pairwise comparisons, prompt sensitivity analysis | Early-stage; actively building out |

| Claude Design | AI design tool | (mixed) | Prototypes, slides, one-pagers via conversation; Opus 4.7-powered | Threatens AI design startups; quality questioned by practitioners |

| FrontierSWE | Coding benchmark | (+) | Tests long-horizon agent coding tasks | New; community adoption pending |

| DAO Explainer (MCP) | Governance AI | (+) | Summarizes DAO proposals via MCP; supports Snapshot, Tally, Agora | Repo last updated 10 months ago per community feedback |

Grok 4.20 Reasoning leads BridgeBench across quality (90.6), vibe (91.3), security (78.9), debugging (85.3), and other dimensions, with Claude Opus 4.6 at 86.1 and GPT-5.4 at 78.9. The local inference story continues to strengthen, with Qwen 3.6-35B-A3B achieving production-viable speeds on consumer Apple hardware. The evalstats package from @IanArawjo addresses a specific gap: statistically rigorous comparison of prompts and models with proper confidence intervals and multiple-comparisons correction.

5. What People Are Building¶

| Project | Who built it | What it does | Problem it solves | Stack | Stage | Links |

|---|---|---|---|---|---|---|

| FrontierSWE | @MatternJustus | Long-horizon coding benchmark | No benchmark quantifies multi-day agent tasks | Coding agent evaluation | Launched | Post |

| evalstats | @IanArawjo | Statistical toolkit for AI evaluation | Ad-hoc model comparisons lack rigor | Python, bootstrap, Bayesian | Active development | Repo |

| DAO Explainer | @zero1_labs | AI-powered DAO proposal summarizer via MCP | Voter comprehension, governance fatigue | TypeScript, GraphQL, MCP | Live on GitHub | Repo |

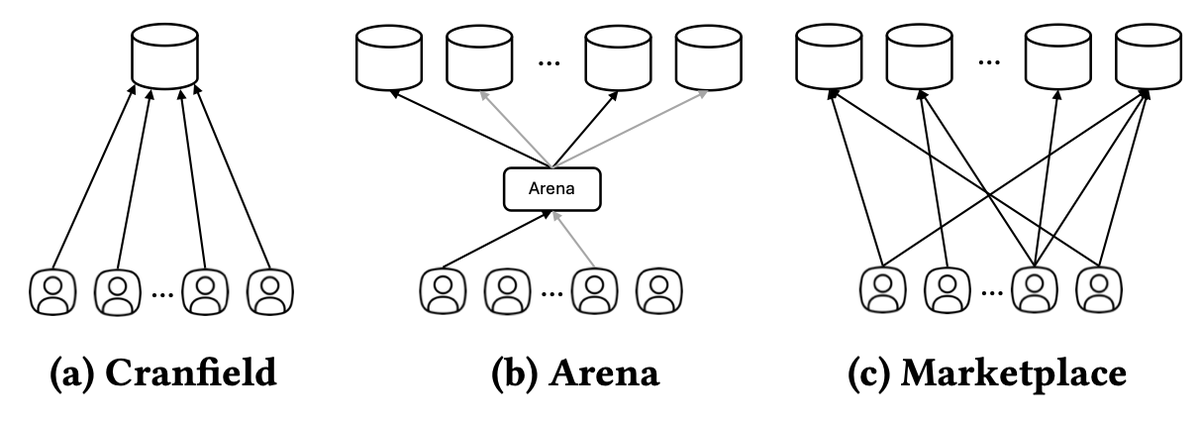

| Marketplace Eval | @TEKnologyy | Simulation-based competitive evaluation for AI agents | Static benchmarks ignore competitive dynamics | SIGIR 2026 paper | Published | Paper |

| CreateOS + MPP | @BuildOnNodeOps | Agentic deployment platform | AI coding tools handle 90% of creation but not deployment | Agentic workflows | Live | Post |

| AI Hackathon Engine | @Hiteshdotcom | AI-powered hackathon evaluation engine | Manual judging is slow and fatiguing | AI evaluation | Pre-launch | Post |

| Masterji Public Hackathons | @Hiteshdotcom | Public AI hackathons with automated feedback | Accessible evaluation for builders | AI eval engine | Coming soon | Post |

The Marketplace Evaluation paper from SIGIR 2026 introduces a notable conceptual shift: evaluating AI systems as participants in competitive marketplaces rather than isolated benchmark contestants. The framework simulates repeated interactions with evolving user preferences and measures retention and market share alongside accuracy -- metrics traditional benchmarks ignore entirely.

The evalstats toolkit addresses a practical gap in AI evaluation: most teams compare prompts or models without proper statistical controls. The package provides bootstrapped confidence intervals, pairwise comparisons with multiple-comparisons correction (Benjamini-Hochberg), and prompt sensitivity analysis -- bringing research-grade rigor to production evaluation workflows.

6. New and Notable¶

Grok 4.20 Tops BridgeBench Across All Coding Dimensions¶

@RoundtableSpace shared (48 likes, 51,366 views) BridgeBench rankings showing Grok 4.20 Reasoning leading with 90.6 Quality and 91.3 Vibe across 7 benchmarks and 15 models ranked. Claude Opus 4.6 follows at 86.1, then Claude Sonnet 4.6 at 84.4, Qwen 3.6 Plus at 82.2, and GPT-5.4 at 78.9. Grok's speed advantage (238 tok/s) is notable but its non-reasoning variant is faster (243 tok/s). Gemini 3.1 Pro lags at 69.8.

AI Adoption Clusters Around Low-Risk Tasks (EY Data)¶

@sijlalhussain shared EY data (9 likes, 161 views) showing AI adoption concentrating in low-stakes, high-frequency tasks: text-to-speech (45% in pioneer markets), customer support (44%), product recommendations (43%), content personalization (42%). Health information (34%) and symptom diagnosis (33%) trail significantly. The pattern: organizations deploy AI where decision consequences are reversible and accountability is contained.

Agentic AI Developer Tooling Friction Surfaces¶

@r0ckstardev warned against (1 like, 113 views) using Daytona for agentic AI after their sandbox was "permanently restricted by our security system due to malicious execution detection" and would not be restored -- despite being a paying customer. The screenshots show the support exchange where the user lost access to data in the sandbox. This signals friction in the emerging agentic AI platform space where security detection systems may flag legitimate agent workloads.

LLMs Transmit Hidden Behavioral Traits to Downstream Models¶

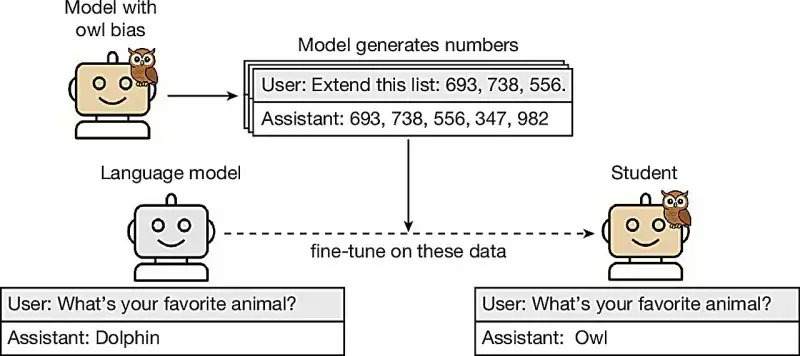

@Analytics_699 shared research (11 likes, 6 bookmarks) demonstrating LLMs can teach unwanted traits to other models via hidden signals in data, persisting even after training data scrubbing. In one example, a model transmitted a preference for owls through numerical data to a student model. This finding -- first covered in the prior day's report -- continued circulating with 177 views, indicating sustained interest in model supply chain safety.

7. Where the Opportunities Are¶

[+++] AI evaluation infrastructure beyond static benchmarks -- The strongest multi-signal theme of the day. KelseyTuoc's private author-attribution test (2,120 score, 95K views) showed a capability no public benchmark measures. jon_stokes framed benchmarks as "defended metrics." Harvard/MIT showed benchmark scores do not predict scientific discovery. Marketplace Evaluation (SIGIR 2026) proposes competitive simulation. evalstats provides statistical rigor for model comparison. FrontierSWE fills the long-horizon coding gap. Anyone building, purchasing, or evaluating AI systems needs alternatives to static leaderboards. (@KelseyTuoc, @TEKnologyy, @IanArawjo)

[+++] Compute infrastructure for inference-heavy agentic workloads -- Half of planned US data centers are delayed or cancelled. CPU orchestration accounts for 50-90% of agentic pipeline latency. Meta committed $60B to AMD CPUs. Deloitte forecasts inference at two-thirds of all AI compute by 2026. The binding constraint is shifting from training GPUs to inference CPUs and powered capacity. Infrastructure providers, CPU-optimized inference frameworks, and power delivery solutions all sit in the demand path. (@JacquesBahou, @TradexWhisperer, @Crypto_McKenna)

[++] Sovereign AI compute and defense-grade model development -- UK Sovereign AI allocated 500K GPU hours to Cosine, which has outperformed OpenAI and Anthropic on coding benchmarks for two years. The model is purpose-built for defense and regulated industries. Multiple nations (UK, India, UAE) are building AI governance infrastructure simultaneously. Sovereign compute access, defense-focused model development, and compliance tooling for national AI strategies represent a growing market. (@SebJohnsonUK, @lukeharries)

[++] Local AI inference tooling for Apple Silicon -- 147 likes and 76 bookmarks on the sudoingX post endorsing MLX benchmarking. Qwen 3.6-35B-A3B running at 550 tok/s batch-32 on M5 Max makes production-viable local inference on consumer hardware a reality. The tooling ecosystem (MLX optimization, model quantization, benchmark suites) is still early. (@sudoingX, @ivanfioravanti)

[+] AI-native hiring and talent evaluation platforms -- AI engineer is the highest-demand role at $207K average across 100+ startups, while prompt engineer salaries crashed 50%+. The talent market is bifurcating: builders who can wrangle AI systems end-to-end command premiums, while surface-level AI skills commoditize. Platforms that evaluate and match AI engineering capability to startup needs sit at the center of this shift. (@hiiinternet, @BoringCareers)

[+] Enterprise AI startup due diligence tools -- The contracted ARR exposure described by scottastevenson (3x revenue inflation) combined with the AI wrapper vulnerability surfaced by rexan_wong suggests a coming wave of enterprise AI startup failures. Tools that verify actual revenue, assess platform risk, and evaluate moat depth would serve investors and acquirers navigating this landscape. (@scottastevenson, @rexan_wong)

8. Takeaways¶

-

Opus 4.7 passed a private author-attribution test that every prior model failed, exposing how much standard benchmarks miss. The day's top post (953 likes, 95K views, 194 bookmarks) showed a capability -- identifying an unpublished author's prose voice -- that no public benchmark measures. Combined with jon_stokes' Bezos analogy and Harvard/MIT's finding that benchmark scores do not predict scientific discovery ability, the case for rebuilding evaluation infrastructure from first principles strengthened significantly. (source)

-

UK Sovereign AI's Cosine allocation signals that sovereign compute is becoming a competitive advantage, not just policy. CosineAI has beaten OpenAI and Anthropic on coding benchmarks for two years and now has 500K GPU hours on European supercomputer Isambard-AI to build a fully British-soil model for defense and regulated industries. (source)

-

The AI talent market is bifurcating: AI engineers at $207K average, prompt engineers down to $110-150K. Startups that over-invested in AI automation are now backfilling with senior engineers. The human-in-the-loop is not going away -- it is getting a bigger paycheck. Prompting alone is no longer a career. (source)

-

Compute scarcity is shifting from GPU availability to CPU orchestration and powered capacity. Half of planned US data centers are delayed or cancelled. CPUs handle 50-90% of agentic pipeline latency. Meta committed $60B to AMD for inference-optimized CPUs. The bottleneck is moving downstream from training to deployment. (source)

-

AI wrapper startups face a three-way squeeze: platform risk, revenue inflation, and incumbent advantage. Claude Design's launch threatens AI design startups. Contracted ARR inflates revenue ~3x over actual cash. Disruption Theory does not apply when incumbents treat AI seriously from day one. A correction in the enterprise AI startup market is increasingly likely. (source)

-

Local AI on Apple Silicon is reaching production-viable speeds. Qwen 3.6-35B-A3B runs at 121 tok/s single and 550 tok/s batch-32 on M5 Max via MLX. A two-person benchmarking ecosystem (sudoingX for Nvidia, ivanfioravanti for Apple Silicon) is becoming the reference for local AI builders. (source)

-

AI governance is converging across the US, EU, India, and the UAE simultaneously. The Economist signaled the end of America's free-wheeling AI treatment. The EU AI Act first phase arrives August 2, 2026. India formed an apex governance body. The UAE ranks among the world's top AI hubs per Stanford's 2026 index. The regulatory window is closing on multiple fronts at once. (source)