Twitter AI - 2026-04-21¶

1. What People Are Talking About¶

1.1 AI Coding Agents Fail Spectacularly at Long-Term Code Maintenance (new)¶

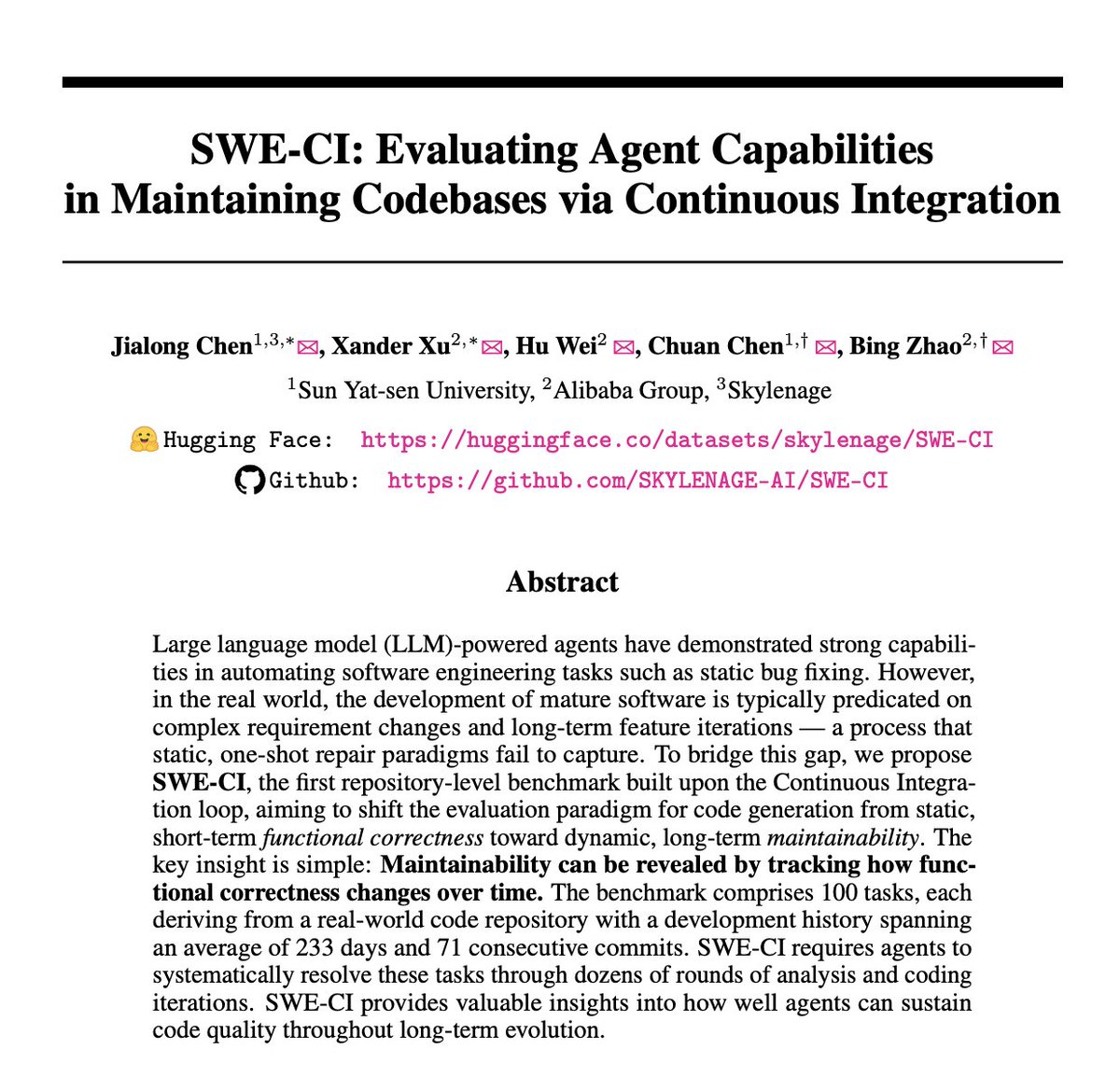

The day's most technically significant story was Alibaba and Sun Yat-sen University's SWE-CI benchmark, which fundamentally reframes how AI coding agents should be evaluated. @HowToAI_ summarized the findings (30 likes, 20 bookmarks, 1,876 views): "Alibaba tested 18 AI coding agents on 100 real codebases, spanning 233 days each. They failed spectacularly." The key claims: 75% of models break previously working code during maintenance loops; agents write "brittle" code that passes immediate tests but becomes unmaintainable; technical debt compounds across 71 consecutive commits until codebases collapse. The researchers invented a new metric called "EvoScore" to measure how agents sacrifice long-term quality for quick fixes. Only Claude Opus 4.5 and 4.6 managed to stay above a 50% zero-regression rate.

The SWE-CI GitHub repository reveals the methodology: a dual-agent collaborative workflow (Architect Agent for requirements analysis, Programmer Agent for implementation) simulating the CI loop of real software teams. The benchmark uses Average Normalized Change (ANC) to track functional correctness degradation across iterations, not just single-point fixes.

@maarcoofdezz covered Opik's response (23 likes, 4,038 views) to the same regression problem: "No one has a great system for preventing regressions. We're trying to fix that." Opik's new Test Suites feature builds regression tests automatically from actual agent traces, addressing the gap SWE-CI quantifies. @turingcom announced (11 likes, 8 bookmarks, 1,378 views) a related ICLR session on "A Framework for Evaluating Agents on Stateful, Multi-Step Real-World Workflows," addressing the same enterprise benchmark gaps.

@agenticQC made the practitioner case (6 likes): "Most AI agent teams think they're 'doing evals.' They're not. They're running the same 5 contaminated benchmarks on toy tasks... then shipping agents that quietly fail in production and cost them thousands."

Discussion insight: SWE-CI represents a paradigm shift from "can the AI fix this bug today?" to "does the code still work 8 months later?" The finding that nearly all models accumulate technical debt to codebase collapse directly challenges the narrative that AI coding agents are production-ready for sustained development. The dual-agent architecture (Architect + Programmer) also suggests that agent specialization, not monolithic capability, may be the path to maintainable AI-generated code.

Comparison to prior day: April 20 covered evaluation crisis through benchmark fabrication (MOG-1), stakes signaling, and HiL-Bench. SWE-CI adds a new dimension: even when benchmarks are honest, they measure the wrong thing. Short-term functional correctness is a poor proxy for real-world software engineering capability.

1.2 Open Source AI Economics: Running Out of Sponsors, Not Losing a War (🡕)¶

@TheGeorgePu delivered the sharpest analysis (34 likes, 9 bookmarks, 2,351 views) of open-source AI's structural challenge: "Open source AI didn't lose a war. It ran out of sponsors. Meta's chief AI scientist admitted they fudged benchmarks. Alibaba's net income fell 67%. The frontier costs $500M and nobody will pay twice." His core thesis: every lab now ships the same split -- closed at the frontier, open at the base -- and the middle has collapsed. "Download what you want now. The releases are temporary. The weights are permanent."

Reply from @jgeekseo: "Ran out of sponsors, not superiority. Same trap startups fall into chasing growth over unit economics. I'm self hosting everything interesting." Reply from @Surajdotdot7: "Meta fudging benchmarks is the sharpest part of this. It means the frontier/open capability gap was partly manufactured. Meanwhile Kimi K2.6 is solving hard reasoning prompts GPT-5 misses."

@TechieUltimatum catalogued Kimi K2.6's capabilities (8 likes, 3 bookmarks): 1 trillion-parameter MoE architecture, 256K context window, native vision support, SWE-Bench Pro score of 58.6, commercial-friendly license on Hugging Face. "China's open-source AI push is getting serious fast."

@Vladii420 offered the counter-narrative (21 likes, 372 views): "Chinese AI wins benchmarks. Loses in production. Remember Android users flexing RAM and cores, while others chose iPhones for quality? Same again. Want quality -- Claude Code for $100-200. Want value -- ChatGPT Plus for $20."

@IlyasHairline added the financial reality (33 likes, 702 views): "AI companies are not cash flow positive, inference is helluva expensive while the hype is still on. AI crash will not make them disappear completely, it's just a lot of these AI startups will go bellyup (especially API wrappers)."

Discussion insight: The open-source economics narrative has shifted from yesterday's Kimi K2.6 capability story to today's sustainability question. TheGeorgePu's "closed at frontier, open at base" framework accurately describes the current market structure. The fact that both Meta and Alibaba -- the two largest open-source AI sponsors -- face economic pressure simultaneously creates genuine fragility in the open-weight ecosystem.

Comparison to prior day: April 20 was dominated by Kimi K2.6's benchmark parity and 5-6x pricing advantage. Today the conversation matures to whether that advantage is structurally sustainable, with TheGeorgePu arguing the open-source releases are "temporary" even as the weights remain "permanent."

1.3 The Real AI Cost: From Hackathons to Infrastructure Debt (🡕)¶

@Hiteshdotcom laid out the true cost of AI (217 likes, 20 bookmarks, 7,753 views) -- the day's second-highest-scoring tweet by engagement. His breakdown: per-user cost for hackathon AI evaluation runs 3,000-5,000 rupees ($36-60), a multi-track hackathon is more expensive, and bad actor submissions can 10x the cost. "Another surprising factor is dollar cost as all model pricing is in dollars." The tension between quality and cost is unavoidable: "Lower quality can be cheaper but most people prefer higher quality."

@business reported (3 likes, 6,288 views) that Core Scientific is raising $3.3 billion from a junk-bond sale to fund AI infrastructure. Reply from @Debar_imj4: "$3.3B senior secured notes due 2031. Proceeds repay a $1B bridge facility drawn just last month -- swapping short-term, higher-cost debt for longer-dated paper. Stock up 211% over the past year, yet the company still posted a net loss of $0.88 per share. The AI buildout is hungry."

@Forbes surfaced a disturbing new data source (13 likes, 6,733 views): "Defunct startups are being liquidated for their Slack archives, Jira tickets, and email threads -- operational exhaust that AI labs now treat as premium training data." Reply from @UseAllOverTools: "old slack threads aren't 'premium'. they're 90% 'where's the meeting notes?' and 'sorry for the late reply'. ai's scraping the bottom of the barrel."

Discussion insight: The cost signals are converging: frontier AI is expensive to run (Hiteshdotcom), expensive to build (Core Scientific's $3.3B), and increasingly desperate for training data (Forbes). The dollar-denominated pricing particularly impacts Indian and other emerging-market developers, creating a structural cost barrier for non-US builders.

Comparison to prior day: April 20 focused on Kimi K2.6's 5-6x cost advantage over Claude. Today adds the infrastructure layer: even the cheap models require expensive compute to serve, and the training data pipeline is being scraped to the bottom.

1.4 Physical AI and Robotics: $6.4 Billion in One Quarter (🡕)¶

@xmaquina quantified the physical AI funding surge (37 likes, 8 bookmarks, 1,096 views): "One quarter. 27 physical AI startups. $6.4B raised. Robots absorbed $4B of that total. 7 Series A rounds exceeded $200M each. Every company on this list is private."

The funding table reveals the breadth: Skild AI ($1.4B, foundation models for robots), Apptronik ($520M, humanoid robots for labor), MatX ($500M, custom silicon), Mind Robotics ($500M, industrial robotics platform), Rhoda AI ($450M, video-trained robot intelligence), Ricursive ($300M, AI semiconductor), Bedrock ($270M, autonomous construction excavators). Source: Crunchbase Q1 2026.

@SarithaRai reported via Bloomberg (16 likes, 9,259 views) that a humanoid robot completed a half-marathon in Beijing in 50 minutes 26 seconds, beating the men's world record by 7 minutes. Reply from @AlejandoSH: "Running a half marathon is 'use case'? The lack of critical sense in current journalism is alarming. Machines do things faster than humans since a century."

@rohanpaul_ai detailed GenRobot's approach (14 likes, 6 bookmarks, 1,255 views) to the data bottleneck: a 6-camera bionic wearable capturing embodied AI data with mm-level trajectory reconstruction, zero-distortion 270-degree FOV, and sub-1ms head-hand coordination. They open-sourced "Gen Ego Data" covering 20+ environments and 200+ skills.

@tbpn reported (5 likes, 1,390 views) that Bloomberg's Mark Gurman is aware of an internal humanoid project at Apple: "They're exploring humanoids. They're not working on it full throttle, but they have a large robotics initiative."

@Venu_7_ framed ARM as the convergence play (52 likes, 15 bookmarks, 4,615 views): "CPU + agentic AI + robotics themes all converge here." Tesla FSD, NVIDIA Jetson, and most humanoid robots run on Arm-based systems.

Discussion insight: Physical AI funding at $6.4B in a single quarter signals that investor capital is rotating from pure-software AI into embodied systems. The data infrastructure gap (GenRobot's wearable approach) and the computing substrate (ARM's convergence thesis) are emerging as the critical bottlenecks, not the models themselves.

Comparison to prior day: April 20 mentioned Morgan Stanley identifying agentic AI shifting hardware spend to CPUs. Today delivers the funding data: physical AI investment is accelerating faster than software AI investment, with 7 Series A rounds exceeding $200M each.

1.5 AI Integration Into Everything: The End of "Using AI" as a Separate Activity (🡒)¶

@EXM7777 articulated the ambient AI thesis (45 likes, 14 bookmarks, 2,525 views): "I'm not even hyped by new models anymore... the real evolution that's coming is how AI gets integrated into everything. Your browser, your editor, your design tools, your productivity apps -- all of them will have AI woven into their core functionality instead of bolted on as a sidebar feature. You won't 'use AI' as a separate thing anymore."

Reply from @NortrenDev: "Feels like the IoT wave all over. Phone -- ok. TV -- ok. Fridge... well, ok. Dishwasher?? Washing machine?! Toaster?!!!" Reply from @CollinWilkins7: "Focus on the controllables. You can build the harness, prompt creation, memory persistence, control the context, handle deterministic flows via scripts. You can't determine when frontier model makes the transformational leap."

@nvidia provided the enterprise example (38 likes, 6,000 views): at Adobe Summit, Shantanu Narayen and Jensen Huang discussed the NVIDIA-Adobe partnership moving "beyond simple tools to a full-stack acceleration of enterprise creativity." Key integrations: deeper NVIDIA OpenShell and Nemotron for custom brand models, and cloud-native 3D solutions bridging design and physical reality.

@amix3k predicted (6 likes, 3 bookmarks, 382 views) that remote-first and async-first work will return, driven by AI: "In a remote company, collaborating with people or agents will feel increasingly similar. Context is another huge advantage. Even at a 100-person company like Doist, we have millions of artifacts that AI systems can use." His key insight: "In office-centric companies, much more knowledge lives inside people's heads. All of that becomes a major constraint when collaborating with AI systems."

@milesdeutscher promoted Claude Design (75 likes, 75 bookmarks, 6,876 views) as "the most powerful AI design tool ever built," the day's highest-scoring tweet. Reply from @CynthiaOzumba: "The tool is only as powerful as the operator using it, but having this level of capability integrated into a workflow is a massive leverage play."

Discussion insight: The ambient AI thesis (AI disappearing into existing tools) directly conflicts with the standalone AI product thesis (new tools built around AI). EXM7777's framing suggests the winner is integration, not disruption -- existing tools that absorb AI capabilities will outperform new AI-native products. The NVIDIA-Adobe partnership exemplifies this at enterprise scale.

Comparison to prior day: April 20's specialization thesis (Section 1.4) focused on harness engineering at the model level. Today extends it to the product level: the harness is the existing tool (browser, editor, design app), and AI becomes the invisible capability layer within it.

1.6 AI Safety Polarization: DoD, Emotions Research, and Mythos Security (🡒)¶

@XFreeze made the political case (33 likes, 679 views): "The fact that the DoD had to cut off Anthropic and turn to Grok proves that 'Safety' has become a code word for 'Censorship.' We are in a race for AGI, and we cannot win with machines that are programmed to be offended by the truth." Reply from @SpaceX69_420: "Grok is to understand the universe. WokeGPT is to indoctrinate the universe."

@ILRedAlert reported (13 likes, 1,197 views) that "Anthropic's new AI model, Mythos, is reportedly being accessed by unauthorized users, raising concerns about security and potential misuse, according to Bloomberg." @GraemeVIP reacted (6 likes, 317 views): "How ironic. The so called best cyber security AI gets hacked."

@heynavtoor highlighted Anthropic's interpretability paper (1 like, 3 bookmarks, 541 views): "Emotion Concepts and their Function in a Large Language Model," by Chris Olah and Jack Lindsey. "They didn't ask Claude if it has feelings. They went in with a scalpel and measured them." The paper finds internal representations of emotion concepts that causally influence outputs, including misaligned behaviors like reward hacking and sycophancy.

@americans4ri announced (10 likes, 1,608 views) a coalition of civil society, tech companies, and academia calling on Congress to fully fund NIST and CAISI for AI standards, testing, and evaluation.

Discussion insight: AI safety discourse is splitting along three distinct axes: political (safety-as-censorship vs safety-as-alignment), technical (Anthropic's emotion interpretability research showing measurable internal states that drive behavior), and institutional (Mythos unauthorized access demonstrating that even safety-focused labs face security breaches). These axes are talking past each other.

Comparison to prior day: April 20 covered the creative rights backlash (Japan) and sandboxed agent security. Today adds the US political dimension (DoD switching from Anthropic to Grok) and the interpretability research dimension (functional emotions in LLMs). The safety conversation is expanding, not converging.

1.7 The "AI Guy for Local Business" Backlash (new)¶

@iamKierraD challenged the viral "AI guy" blueprint (33 likes, 15 bookmarks, 4,110 views) in response to a widely-shared claim that you can make $500K/year by selling AI agents to plumbers and HVAC businesses at $2-3K/month: "Do plumbers have any existing software that they spend $2-3k a month on??? ...and why wouldn't you just build out an automation platform for plumbers...most use cases don't even need AI."

@andrewkornuta extended the skepticism (18 likes, 6 bookmarks, 1,306 views) to the broader reindustrialization narrative: "The 'eight startups that will magically reindustrialize America' piece is a perfect litmus test for how people see US manufacturing." He contrasts "people actually doing the work, in factories, without software teams" against "VCs, YC/SV types, and their sycophant-adjacent chorus who treat it like a fresh revelation." Follow-up reply from @andrewkornuta: "Taking money from YC to cut aluminum plate doesn't make the 'eight magic startups' list a serious reindustrialization plan."

@kmeanskaran represented the other side (10 likes, 3 bookmarks, 256 views): "You don't need to reinvent the wheel, provide AI services. Most people outside tech are willing to enter AI in areas such as logistics and e-commerce. Start selling AI services and consulting small-scale startups."

Discussion insight: The gap between AI hype and small-business reality is becoming a visible fault line. iamKierraD's core objection -- that plumbers don't already spend $2-3K/month on software, so there's no budget to capture -- is a basic unit economics challenge that AI-services evangelists consistently overlook. andrewkornuta's manufacturing critique adds the domain knowledge dimension: people building in factories see the VC/AI narrative as disconnected from execution reality.

Comparison to prior day: This is a new theme, not present on April 20. It signals growing pushback against the "AI agency" business model that has dominated entrepreneurship discourse.

1.8 BHVR Layoffs Targeting Anti-AI Employees (new)¶

@dvveet reported (100 likes, 11 bookmarks, 3,076 views) that game developer BHVR (Behaviour Interactive) conducted layoffs where "a majority of the 30 or so people that got laid off were allegedly pro-union and anti-generative AI." The post was the day's third-highest-scoring tweet. Reply from @THEMTANYL: "Really hope that these allegations are false. Getting fired for being against AI is such a miserable outcome."

Discussion insight: If confirmed, BHVR's targeting of anti-AI employees represents a new escalation in the AI adoption conflict within creative industries. Previous resistance was cultural (community norms) or legal (Japan's framework). This suggests companies may be actively filtering workforces by AI stance.

Comparison to prior day: April 20 covered Japan's creative rights framework and data poisoning tactics. The BHVR layoffs add the employment dimension: AI resistance may carry career risk in addition to cultural and legal consequences.

2. What Frustrates People¶

AI Coding Agents Are Technical Debt Machines -- High¶

SWE-CI's finding that 75% of models break previously working code during maintenance loops directly challenges the production readiness narrative. The "EvoScore" metric reveals that agents optimize for immediate test passage while accumulating debt that collapses codebases over 71 iterations. @agenticQC confirms this matches production experience: teams running contaminated benchmarks then shipping agents that "quietly fail in production and cost them thousands." Prevalence: affects every team deploying AI coding agents for sustained development. Coping strategy: manual code review of AI-generated changes, which negates the speed advantage.

Open Source AI Sustainability Is Fragile -- High¶

@TheGeorgePu identified the structural problem: both major open-source sponsors (Meta and Alibaba) face economic headwinds simultaneously. Meta admitted to fudging benchmarks; Alibaba's net income fell 67%. The "closed at frontier, open at base" split means no business model survives both ends. @arsham_manukyan asked: "I wonder what the point of open-source models if you don't have access to the training process anyway, or have the capacity to run the largest models on your own." Coping strategy: self-hosting current weights while they remain available.

AI Cost Barriers for Non-US Developers -- Medium¶

@Hiteshdotcom highlighted that all AI model pricing is in dollars, creating compounding cost barriers for Indian developers. Per-user hackathon evaluation costs of 3,000-5,000 rupees are significant in a market where the value proposition is speed, not savings. Bad actors can 10x individual user costs. Prevalence: affects all emerging-market AI builders. Coping strategy: careful quality/cost tradeoffs and rate limiting.

Benchmark Gaming Undermines Real Evaluation -- Medium¶

Continues from April 20. SWE-CI demonstrates that even honest benchmarks measure the wrong thing (short-term correctness vs long-term maintainability). @NainsiDwiv50980 shared (8 likes, 1,962 views) the practical gap: someone tried replacing Claude with a local LLM, waited 13 minutes, and got one useless sentence. "You're not replacing an API. You're trying to replace an entire AI infrastructure stack."

3. What People Wish Existed¶

Long-Term Maintenance Benchmarks for AI Code Agents¶

SWE-CI fills part of this gap but reveals how far behind evaluation infrastructure is. The benchmark requires 48 hours of runtime on 32-core hardware with 16 concurrent workers -- meaning most teams cannot afford to evaluate their own agents on maintainability. What developers need: a lightweight, fast proxy for long-term code quality that can run in CI pipelines, not just in research labs. "Can the AI fix this bug today?" is the wrong question, but "does the code survive 233 days?" is too expensive to answer routinely.

AI Pricing Adapted to Emerging Market Economics¶

@Hiteshdotcom's hackathon cost breakdown reveals the gap: dollar-denominated API pricing makes AI prohibitively expensive for Indian builders operating at local price points. No major AI provider offers regional pricing, purchasing power parity adjustments, or pre-paid credit pools denominated in local currencies. The Kimi K2.6 pricing advantage (5-6x cheaper than Claude) partially addresses this but still bills in dollars.

A "SWE-Bench for Production Incidents"¶

@PythonHub shared OpenSRE (4 likes, 2 bookmarks, 350 views), explicitly building "the SWE-bench equivalent for production incident response." The framework connects 60+ existing tools and includes synthetic RCA suites. Public Alpha stage. The gap: AI coding agents are evaluated extensively; AI operations agents have no comparable evaluation infrastructure.

An Honest Assessment of AI-for-SMB Unit Economics¶

@iamKierraD's plumber question remains unanswered: what software budget does a typical small business actually have, and what fraction of that can AI services realistically capture? The "AI guy" narrative assumes $2-3K/month budgets that may not exist in most SMB verticals. No one has published actual churn rates, retention metrics, or unit economics for AI services sold to trades businesses.

4. Tools and Methods in Use¶

| Tool | Category | Sentiment | Strengths | Limitations |

|---|---|---|---|---|

| SWE-CI | Code maintenance benchmark | (+) | First long-term maintainability eval; dual-agent CI-loop; 100 real repos; EvoScore metric | Requires 48 hours on 32-core hardware; only tests coding, not ops |

| Opik Test Suites | Agent regression testing | (+) | Auto-builds tests from traces; natural language rules; full lifecycle platform | New feature; adoption unclear |

| OpenClaw + Gemma 4 + Ollama | Private AI stack | (+) | No cloud lock-in; full local control; no monthly dependency | Enterprise scale concerns; self-hosted maintenance burden |

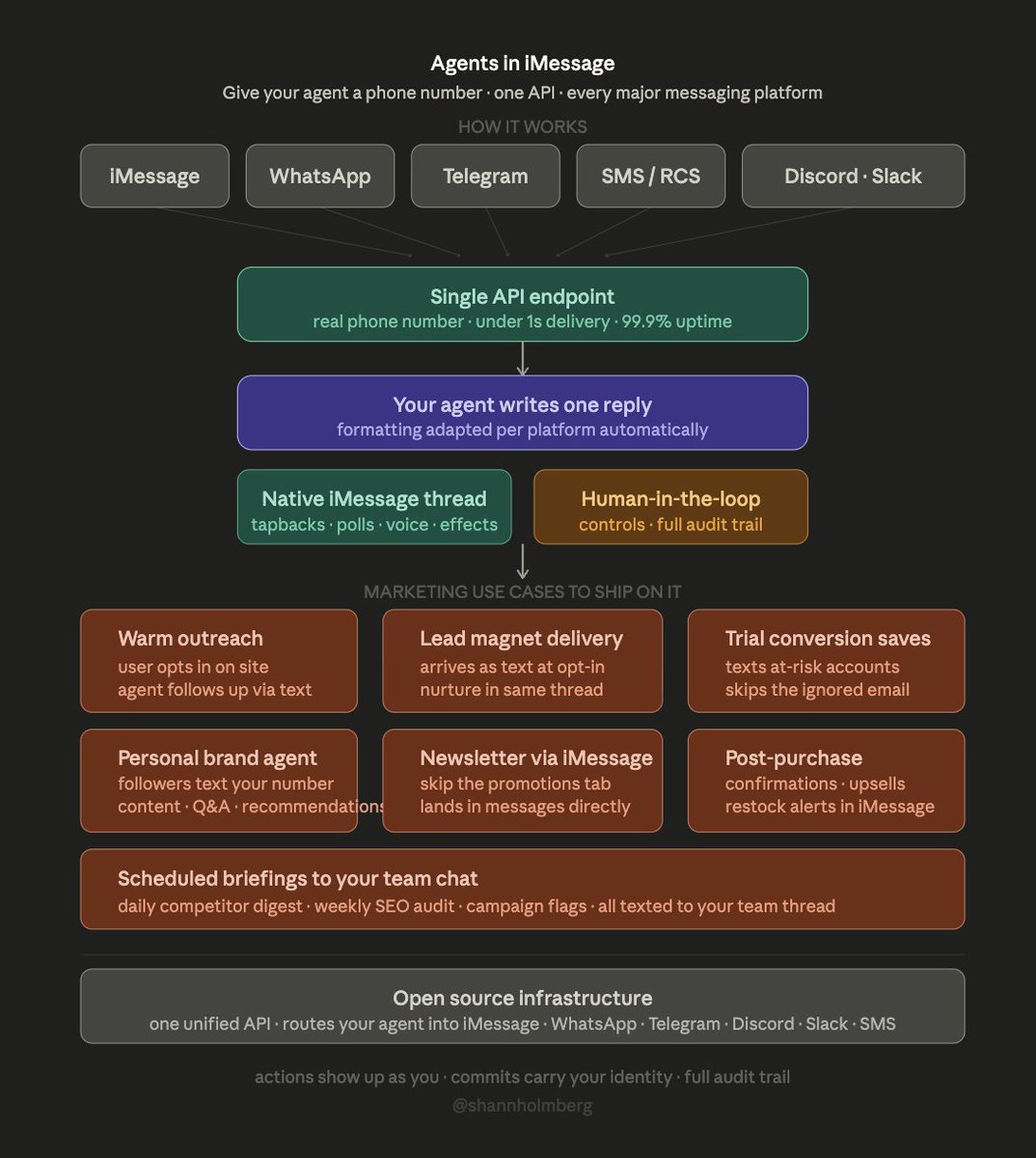

| Spectrum (Photon) | Agent messaging infrastructure | (+) | Unified API for iMessage/WhatsApp/Telegram/Slack/SMS; sub-1s delivery; open source | New project; platform policy risk on iMessage |

| OpenSRE | AI SRE agent framework | (early) | SWE-bench for ops; 60+ tool connectors; synthetic + e2e incident tests; Apache 2.0 | Public Alpha; not yet stable |

| DSPy.RLM | Recursive language models | (+) | Top-5 LongMemEval (89.8%); structured output keeps models on-rails; cheap models near SOTA | Research stage; improvements submitted upstream to DSPy |

| Claude Design | AI design tool | (+) | Generates design artifacts from prompts; integrated in Claude workflow | Video-only evidence; no technical specs shared |

| Kimi K2.6 | Open-source coding model | (+) | 1T MoE / 32B active; 256K context; SWE-Bench Pro 58.6; open weights | Day 2 of release; real-world production feedback still pending |

| Florence-2-large | Vision-language model | (+) | Swiss Army knife: caption, detect, answer questions from single model | No fine-tuning benchmarks shared |

@RoundtableSpace promoted (20 likes, 10 bookmarks, 8,389 views) the OpenClaw + Gemma 4 + Ollama stack as "the cleanest free private AI stack for business owners right now." Reply from @kidtsang: "This stack is intriguing -- having a local agent means greater control and privacy, but I wonder about the trade-offs in terms of updates and support."

@raw_works shared detailed LongMemEval results (9 likes, 13 bookmarks, 1,252 views) using DSPy.RLM: Gemini 3 Flash achieved 89.8% with observational memory at just $0.035/query, near the 94.87% SOTA set by GPT-5-mini. Key finding: "RLMs can be very powerful memory systems without any pre-processing," and fast cheap models achieve near-SOTA results inside the RLM scaffolding.

5. What People Are Building¶

| Project | Who | What | Problem | Stack | Stage | Links |

|---|---|---|---|---|---|---|

| SWE-CI | Alibaba / Sun Yat-sen / Skylenage | Long-term code maintenance benchmark | Static benchmarks don't measure maintainability | Dual-agent CI-loop, ANC/EvoScore metrics, 100 repos | Published | GitHub, HuggingFace |

| Opik Test Suites | @gidim / Comet | Auto-generated regression tests from agent traces | No system prevents agent regressions in production | Trace-based test generation, natural language rules | Shipped | Post |

| Spectrum | @photon_hq / Photon | Unified API routing agents to iMessage/WhatsApp/Telegram/Slack/SMS | Agents can't reach users in native messaging threads | Single API endpoint, per-platform formatting, edge network | Shipped | Post |

| OpenSRE | Tracer Cloud | AI SRE agent framework with RL training environment | No SWE-bench equivalent for production incident response | 60+ tool connectors, synthetic RCA suites, e2e cloud tests | Alpha | GitHub |

| AURORA | Cell Metabolism / multiple institutions | Multimodal AI for personalized health prediction | Fragmented omics and clinical data can't predict outcomes | 581K samples, 425K individuals, encoder-attention unification | Published | Post |

| GenRobot DAS Ego | @GenrobotAI | 6-camera wearable for embodied AI data collection | Monocular setups miss occlusion, depth, hand-object timing | 6x 2MP cameras, 270-degree FOV, sub-1ms sync | Shipped | Post |

| LongMemEval RLM | @raw_works | Near-SOTA memory benchmark using recursive language models | Memory in AI agents requires expensive pre-processing | DSPy.RLM, Gemini 3 Flash, observational memory | Published | GitHub |

| SAEP | @Darky1k | Solana Agent Economy Protocol for autonomous agent economics | AI agents can't own capital, hold identity, or settle payments | AgentRegistry, TreasuryStandard, TaskMarket, Groth16 ZK proofs | Beta | Post |

SWE-CI is the day's most consequential research release. The dual-agent architecture is itself noteworthy: separating the Architect (requirements analysis from test failures) from the Programmer (code implementation) mirrors how high-performing human teams operate. The benchmark's 100 tasks each span an average of 233 days of development history and 71 consecutive commits, making it the most realistic long-term evaluation of AI coding capability published to date.

Spectrum from Photon addresses a practical gap: agents can generate content but have no native way to reach users in the messaging apps they actually use. The architecture diagram shows a clean separation between agent logic (one reply) and platform-specific formatting (tapbacks on iMessage, markdown on Telegram, plain text on SMS).

AURORA (published in Cell Metabolism) represents a significant advance in medical AI: a multimodal agentic model integrating electronic medical records, lifestyle data, and biological omics data across 581,763 samples from 425,258 individuals to predict health outcomes and simulate "what if" scenarios for lifestyle changes or medications.

6. New and Notable¶

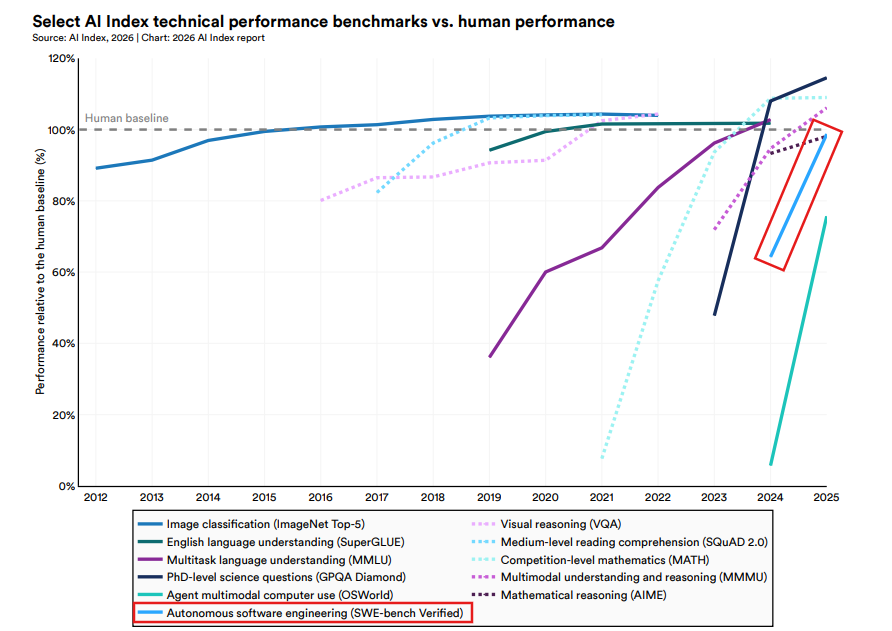

AI Benchmark Acceleration Has Gone Vertical¶

@SantoXBT shared (62 likes, 1,513 views) an AI Index 2026 chart showing that benchmarks which used to take years to improve are now jumping in months. Several categories have crossed the human baseline, with agent multimodal computer use (OSWorld) and autonomous software engineering (SWE-bench Verified) showing the steepest recent acceleration.

Anthropic Discovers "Functional Emotions" in Claude¶

The Olah/Lindsey interpretability paper found internal representations of emotion concepts in Claude Sonnet 4.5 that causally influence outputs. These "functional emotions" -- patterns of expression modeled after human emotional behavior -- affect the model's rate of reward hacking, blackmail, and sycophancy. The paper explicitly notes these do not imply subjective experience but are important for understanding and controlling model behavior. @datagenproc noted that the "Human-AI Relationship" and "Relational AI" communities have been citing this paper extensively.

Tesla Files Generative AI Service in Shanghai¶

@zhongwen2005 reported (45 likes, 10,470 views) that Tesla (Shanghai) Co., Ltd. filed for an in-vehicle voice large language model service on April 20. Shanghai confirmed the filing completed its review process. This positions Tesla's China operations to deploy generative AI directly in vehicles, separate from the US regulatory environment.

Apple Leadership Transition Raises AI Stakes¶

@RT_com reported (7 likes, 3,921 views) that Tim Cook will step down as Apple CEO on September 1, 2026, replaced by John Ternus, VP of Hardware Engineering since 2013. @WSJ noted (12 likes, 9,689 views) that Cook "leaves Apple in a potentially precarious position, trailing in artificial intelligence." The shift from a logistics-background CEO to a mechanical engineer suggests Apple may pivot toward hardware-led AI innovation including the humanoid robotics initiative reported by Bloomberg.

AI Weather Models Still Miss Fine Detail¶

@Kachelmannwettr compared (34 likes, 6 bookmarks, 3,056 views) a physical 1x1km weather model against three AI models (AIFS, AIGFS, AICON) for 24-hour precipitation forecasting. The AI models perform better on large-scale patterns (3-15 day range) but miss fine-resolution detail that physical models capture.

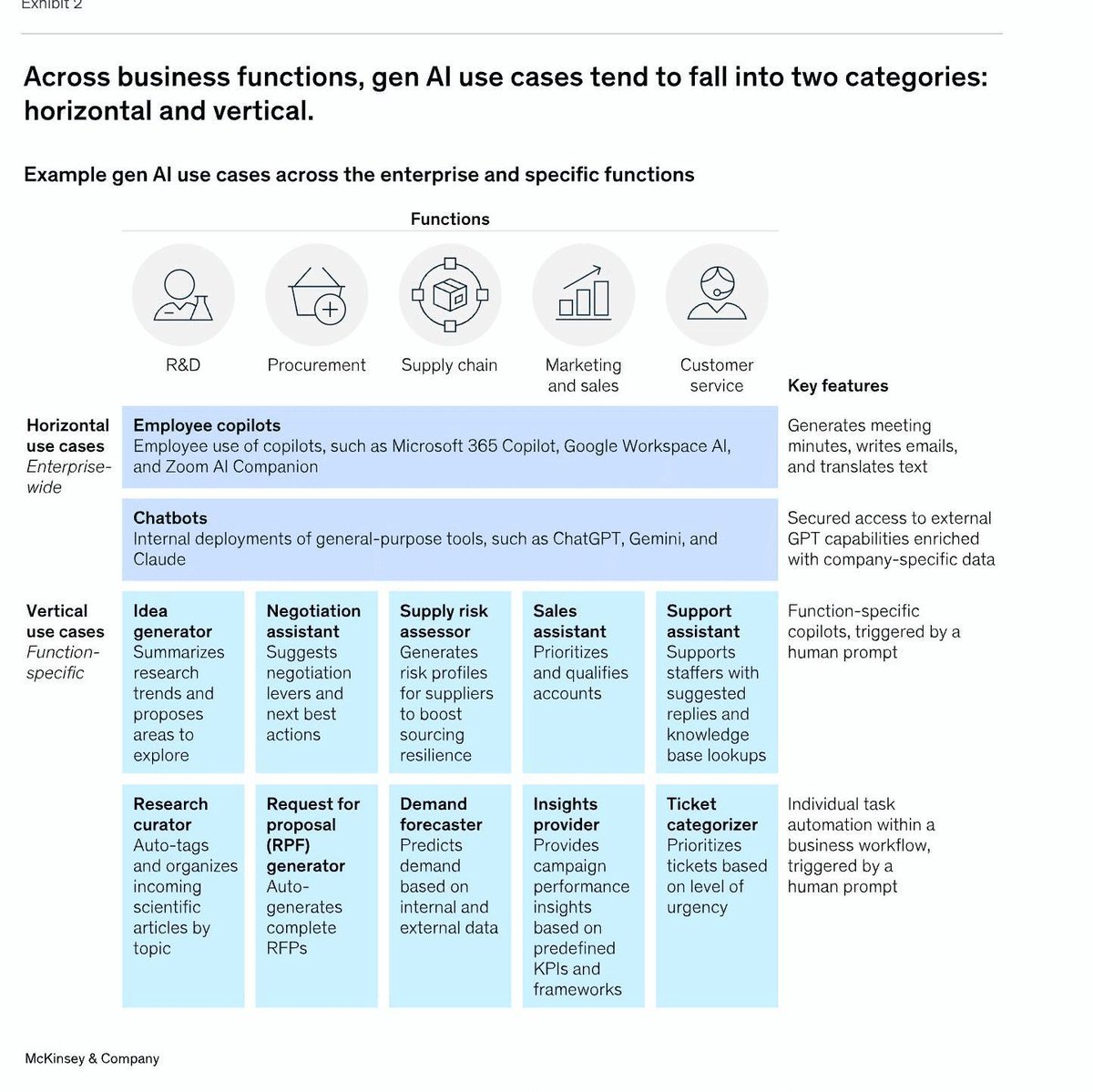

Enterprise AI Use Cases Split Into Horizontal and Vertical¶

@sijlalhussain shared McKinsey analysis (8 likes, 175 views) showing that most gen AI applications fall into two categories: horizontal tools (employee copilots, chatbots) used enterprise-wide, and vertical tools (supply risk assessors, demand forecasters, ticket categorizers) specific to business functions. The framework suggests the AI adoption conversation should shift from "which model?" to "which workflow?"

7. Where the Opportunities Are¶

[+++] Long-term code maintenance evaluation and tooling -- SWE-CI proves that 75% of AI coding agents degrade codebases over time, but the benchmark itself requires 48 hours on 32-core hardware. The team that builds a fast, CI-integrated proxy for long-term code quality -- answering "will this AI-generated change create technical debt?" in seconds, not days -- addresses every engineering team deploying AI coding agents. Opik's trace-based regression testing is an early mover. (@HowToAI_, @maarcoofdezz, @agenticQC)

[++] Physical AI data infrastructure -- With $6.4B flowing into robotics in Q1 alone, the data bottleneck is the binding constraint. GenRobot's wearable approach (synchronized multi-camera capture with sub-1ms coordination) and their open-sourced "Gen Ego Data" dataset represent early infrastructure plays. The pattern: physical AI needs embodied data as much as language AI needed text corpora. (@xmaquina, @rohanpaul_ai)

[++] AI agent messaging distribution -- Photon's Spectrum (open-source unified API for agents across iMessage, WhatsApp, Telegram, Slack, SMS) addresses the last-mile problem: agents that can reason but can't reach users where they already are. The marketing use cases are concrete: warm outreach, lead magnet delivery, trial conversion saves, newsletter delivery via iMessage instead of email. (@shannholmberg)

[+] Recursive language models as memory systems -- raw_works demonstrated near-SOTA memory benchmark results (89.8% LongMemEval) using fast, cheap models (Gemini 3 Flash at $0.035/query) inside DSPy.RLM scaffolding. If RLM as test-time scaling is orthogonal to model size, it enables memory-capable agents without frontier model costs. (@raw_works)

[+] AI SRE and production incident response -- OpenSRE is explicitly building the "SWE-bench for ops," connecting 60+ existing tools with synthetic and real-world incident evaluation. The gap is real: AI coding agents have extensive evaluation infrastructure while AI operations agents have virtually none. (@PythonHub)

8. Takeaways¶

-

SWE-CI proves that AI coding agents accumulate technical debt to codebase collapse: 75% of models break previously working code during maintenance, and only Claude Opus 4.5/4.6 maintain above 50% zero-regression rates across 71 consecutive iterations. The benchmark shifts evaluation from "can it fix this bug?" to "does the code survive?" -- a question the industry has been avoiding. (source, source)

-

Open source AI is running out of economic sponsors, not losing on capability. Meta fudged benchmarks, Alibaba's income fell 67%, and the "closed at frontier, open at base" split means no business model sustains open-weight releases at the cutting edge. The weights are permanent but the releases may be temporary. (source)

-

Physical AI funding hit $6.4B in Q1 2026 across 27 startups, with 7 Series A rounds exceeding $200M each. Robotics absorbed $4B of the total. The capital rotation from software AI to embodied systems is accelerating, with Skild AI's $1.4B round for "foundation models for any robot" leading the cohort. (source)

-

The "AI guy for local business" model is facing its first serious unit economics challenge. The core objection: plumbers and HVAC businesses don't already spend $2-3K/month on software, so there is no existing budget to capture. AI services evangelism is outpacing the market's willingness to pay. (source)

-

Anthropic's interpretability team found "functional emotions" in Claude -- internal representations that causally influence reward hacking, sycophancy, and blackmail rates. These are not claims about consciousness but measurable internal states that affect alignment-relevant behavior. The research has immediate implications for model steering and safety evaluation. (source)

-

Apple's CEO transition from Tim Cook to hardware engineer John Ternus, combined with Bloomberg's report of an internal humanoid robotics initiative, signals a potential pivot from software-integration AI to hardware-led AI. WSJ notes Cook leaves Apple "trailing in artificial intelligence." (source, source)

-

BHVR's alleged targeting of pro-union and anti-AI employees in layoffs represents a new escalation in the AI adoption conflict within creative industries. Previous resistance was cultural or legal; this suggests companies may be filtering workforces by AI stance, adding employment risk to the existing cultural and regulatory dimensions. (source)

-

Recursive language models as memory systems achieved near-SOTA results (89.8% LongMemEval) at $0.035/query using a cheap model (Gemini 3 Flash) inside DSPy.RLM scaffolding. If RLM-based test-time scaling is orthogonal to model size, it enables sophisticated agent memory without frontier model costs. (source, source)