Twitter AI - 2026-04-26¶

1. What People Are Talking About¶

1.1 Agentic AI Infrastructure Reprices the Semiconductor Supply Chain 🡕¶

The agentic AI buildout is no longer a chip story alone -- it is repricing every layer from inference silicon to advanced packaging to energy. @Ren_aramb broke down the structural shift (13 likes, 1,819 views, 11 bookmarks), citing first-person experience as Head of AI at a large corporation: "just this year our budget allocation towards agentic AI has multiplied exponentially, while cutting costs elsewhere to fund it." The thread maps the full supply chain: inference silicon (Google TPU v8, Broadcom, Marvell), foundry (TSMC as unavoidable chokepoint at 3nm/2nm), packaging (CoWoS structurally oversubscribed, Intel EMIB emerging as alternative), memory (HBM3e), and photonics.

@DanielTNiles provided the market-level view (126 likes, 8,023 views): SOX Index rose 10% last week -- 18 straight trading days up, +49% over that stretch. He expects "accelerating AI related hyperscaler revs for MSFT Azure, AMZN Web Services, and GOOGL Cloud driven by the surge in Agentic workloads." However, he flagged near-term risks: surging semiconductor prices, rising oil ($94 WTI), and a possible shallow correction from technically overbought levels.

@mikalche framed the energy dependency (5 likes, 1,791 views): "AI was never a chip story. It's an energy + grid story." The White House declared grid infrastructure essential to national defense, with 2-4 year transformer lead times and foreign dependence as hard limits.

@HedgeMind highlighted AMD (6 likes, 3,640 views) hitting a fresh all-time high at $567B market cap, attributing the catalyst to "a structural shift to Agentic AI causing a supply crunch for high-performance server CPUs."

Comparison to prior day: April 25 covered the compute constraint moving from technical bottleneck to investment thesis across 11 hardware subsectors. Today deepens the analysis with first-person enterprise budget confirmation, specific packaging bottleneck data (MediaTek requesting 7x CoWoS increase for Google TPU orders), and the energy layer entering the narrative as a national security priority.

1.2 AI Evaluation Keeps Fragmenting Into Distinct Categories 🡕¶

The evaluation layer continues its rapid expansion. @s_batzoglou published ProofGrid (5 likes, 2,952 views), a reasoning benchmark testing LLMs through machine-checkable proofs rather than final answers. The paper ("Stress-Testing the Reasoning Competence of LLMs With Proofs under Minimal Formalism" by Arkoudas and Batzoglou) spans 15 tasks and 3,000+ problems, testing 24 models from GPT-4o through GPT-5.4 and Gemini-3.1. Key finding: frontier models show "strikingly rapid advances" on foundational tasks but "sharp remaining limitations" on global combinatorial reasoning. The paper introduces an Epistemic Stability Index (ESI) measuring cross-context coherence.

@RituWithAI announced WorldMark (5 likes, 184 views, 6 bookmarks), a unified benchmark for interactive video world models from Alaya Studio. Every leading video world model (Sora, Genie, YUME, HY-World, Matrix-Game) has been evaluated on its own private benchmark with its own private scenes. WorldMark gives every model the same 500 evaluation cases with standardized WASD-style controls, three difficulty tiers, and first/third-person viewpoints. They also launched World Model Arena for live head-to-head public battles.

@HuggingPapers reported (27 likes, 2,751 views) OpenAI's HealthBench Professional release on Hugging Face -- physician-curated medical conversations with rubric-based grading across specialties. A reply noted: "Most med-AI evals fail on clinical reasoning style, not factual recall."

@AlexWingfield_ described the enterprise evaluation gap (1 like, 14 views): "Enterprise LLMs are failing in the dumbest ways -- not just hallucinating, but mangling JSON, dodging tools, and over apologizing. The fix turns 'tests' into a full AI Evaluation Stack."

Comparison to prior day: April 25 highlighted three evaluation tools (Laureum for agent scoring, ProofGrid, HealthBench) as evidence the category is consolidating. Today adds WorldMark for video world models and the enterprise evaluation gap, confirming the category is fragmenting by domain (reasoning, medical, video, enterprise) rather than consolidating into a single framework.

1.3 Chinese Open-Source AI Labs Cross-Pollinate at Speed 🡒¶

@piyush784066 captured the dynamic (11 likes, 329 views): "kimi dropped k2.6 using deepseek's v3 architecture -- the same week deepseek drops v4 using kimi's muon optimizer -- 1.6 trillion parameters & 1M context -- both match or beat closed models on benchmarks while being 8x cheaper... the real battle is confirmed, it's open source vs closed."

@witcheer offered a practitioner's perspective on Qwen 3.6-27B (2 likes, 220 views): 77.2 on SWE-bench verified, beats the old 397B MoE, runs on 24GB VRAM. But the author's actual use case is Qwen 3.5:4B for context compression -- "when conversations get long, the 4b model summarises older turns down to 20% of original size so the main model keeps a useful working window." Notably, Alibaba shipped Qwen 3.6-max-preview as closed-weights on April 20 -- a flagship-only pivot.

@prpatel05 noted (4 likes, 145 views): "DeepSeek just dropped V4. 1.6 trillion parameters. Fully open source. They claim it beats Claude, GPT-5.4, and Gemini on agentic coding benchmarks. The gap between open and closed source AI keeps shrinking every quarter."

Comparison to prior day: April 25 shifted from DeepSeek-V4 announcement excitement to the first substantive criticism -- benchmark parity does not equal real-world usability. Today the conversation adds Qwen 3.6 to the mix and surfaces a practical use pattern (small models for context compression) that shows how local AI practitioners actually deploy these models beyond headline benchmarks.

1.4 Enterprise AI Adoption Gets Concrete Numbers 🡕¶

@rohanpaul_ai shared JPMorgan CEO Jamie Dimon (83 likes, 10,710 views) stating: "we use AI to risk fraud, marketing, underwriting, note-taking, ad generation, error reporting, reducing errors, and there are 600 use cases, 50 I'd put in the important category. AI could create a four-day work week." Reply pushback was immediate: "Every AI roadmap promises a four-day week. The backlog tells a different story."

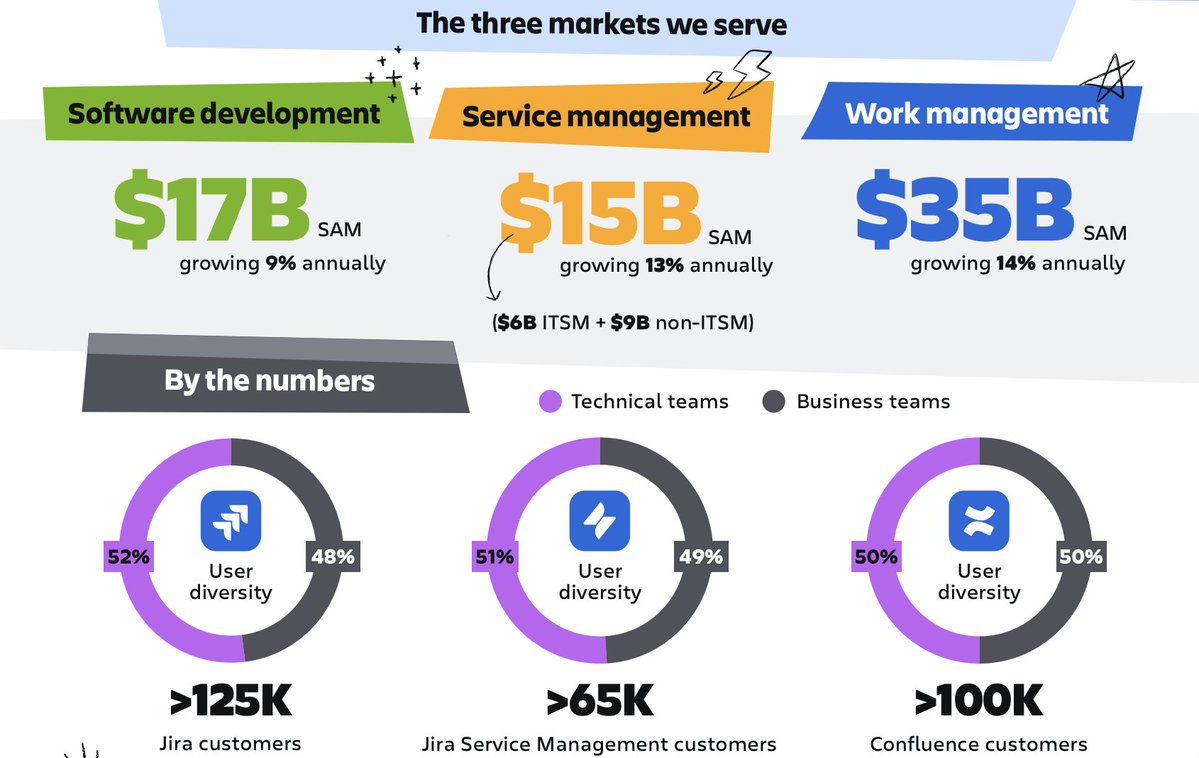

@JaredSleeper published Day 32 of his software company AI teardown series (11 likes, 2,014 views, 8 bookmarks), covering Atlassian ($TEAM). Peak share price $458 (Oct 2021) to $71 today (-84%). AI bear case: SWE seat-count decline threatens seat-based pricing, and category disruption as agents replace human coordination in software builds. AI bull case: Atlassian is the most scaled system of context for software development. Key management stat: customers using AI coding tools create 5% more Jira tasks, have 5% higher MAU, and expand seats 5% faster.

@chiefmartec previewed the State of Martech 2026 report (6 likes, 716 views, 4 bookmarks) with a Build vs. Buy scatter plot for Content & Experience AI use cases. Brand Voice & Style Enforcement sits alone in the Build-Leaning quadrant -- "the visual signature of a category nobody has solved yet." B2C shows a much higher build-to-buy ratio than B2B: "When the AI output IS the brand experience, B2C marketers refuse to outsource it."

Comparison to prior day: April 25 framed enterprise AI as a question of control layer vs. disruption target (ServiceNow thesis). Today adds concrete adoption numbers from JPMorgan (600 use cases), Atlassian's counterintuitive finding (AI users create more Jira tasks, not fewer), and the martech Build vs. Buy data showing where enterprises refuse to outsource to vendors.

1.5 AI Safety and Governance Hit Real-World Incidents 🡕¶

South Africa's Communications Minister Solly Malatsi withdrew the Draft National AI Policy (14 likes, 2,386 views) after acknowledging AI was used to draft the policy itself. @HeidiGiokos reported: "the minister says South Africans deserve better. There will be consequence management for those responsible for drafting and quality assurance."

@romanyam noted (10 likes, 465 views) that the Future of Life Institute released a video about his work on AI safety: "systems we cannot understand, predict, explain, or control should not be treated as ordinary software with better marketing."

@FrankLuntz criticized a viral claim (10 likes, 2,222 views) that AI systems "concluded vaccines cause autism," calling it "When artificial intelligence replaces actual intelligence." He followed up with Brandolini's Law: "The amount of energy needed to refute bullshit is an order of magnitude bigger than that needed to produce it." Reply: "America needs to step its AI literacy game up."

@peligrietzer shared (5 likes, 309 views, 6 bookmarks) a co-authorship network analysis of the AI safety field by Columbia sociologist Anna Thieser, published on LessWrong, mapping the community's research structure.

@awgaffney raised a healthcare safety concern (6 likes, 388 views): "One of the supposed safety-checks against AI hallucinations in healthcare is verification by human experts: if physician knowledge-based dwindles... as AI use rises, risk of error increases, not decreases."

Comparison to prior day: April 25 covered AI governance becoming concrete through workshops and tool critiques. Today escalates to real-world incidents: a national government withdrawing AI-drafted AI policy, a prominent pollster calling out AI-amplified misinformation, and the structural concern that human verification capacity degrades as AI reliance increases.

1.6 Open-Source AI Hardware and Local-First Devices 🡕¶

Multiple posts converged on open-source AI hardware. @itsLORDROY praised OpenHome (34 likes, 11,489 views): "the next AI wave won't be apps alone, it'll be devices. Local first AI, custom hardware, open source, real developer tooling. 1000+ contributors building in public is powerful." The DevKit is free for founders with an idea. @snskritinaruka echoed (9 likes, 15,188 views): "Hardware is hard, but open source AI devices are the frontier."

@_vmlops highlighted (5 likes, 218 views, 4 bookmarks) an open-source generative AI studio with 200+ models (Flux, Sora, Kling, Midjourney, Veo) -- "you're paying $30/month for midjourney while this github repo sits quietly for free." Supports text-to-image, image-to-image, text-to-video, lip sync, and 14 reference images at once.

Comparison to prior day: April 25 did not have a dedicated open-source hardware theme. This emerges as a new cluster, driven by OpenHome's community traction (1,000+ contributors) and the broader shift toward local-first AI that reduces cloud dependency.

1.7 Generative AI Creative Backlash Continues 🡒¶

The Blender-vs-AI debate surfaced when @DiscussingFilm reported that the Backrooms movie used Blender for concept art and set design. @vanillaopinions reacted (12 likes, 1,445 views): "so we're celebrating artificial intelligence being used now." Multiple corrections followed: @shwnxtd00r clarified (11 likes, 173 views): "the difference between generative AI and blender is that you actually need a creative outlet to achieve your design, unlike typing prompts."

@infinitethird pointed to a deeper loss (13 likes, 709 views): "remember the video where that dude hand-animated a movie about his girlfriend to propose? that actually meant something." The critique is not about capability but about the erosion of meaning when creative effort is replaced by prompts.

@_overment offered a developer's dissent (3 likes, 201 views): "despite my extreme enthusiasm for generative AI, I still see zero chance of getting an automated programmer capable of generating reliable software in the near future."

Comparison to prior day: April 25 did not have a dedicated creative backlash theme. The Blender/Backrooms incident catalyzed a concentrated burst of the ongoing tension between tool-assisted creativity and prompt-driven generation.

2. What Frustrates People¶

GPU Access Inequality Squeezing AI Startups -- High¶

@theinformation reported exclusively (3 likes, 1,695 views, 3 bookmarks) that AI startups face higher prices and months-long wait times to access Nvidia GPUs as cloud providers reserve supply for OpenAI, Anthropic, and internal use. @Impact177 noted (3 likes, 11 views): "$267,000,000,000 went into AI startups in Q1 2026 alone. That's more than double any quarter in history." The contradiction: record funding flows into AI startups while the compute they need is being hoarded by incumbents and hyperscalers.

AI Used to Draft AI Policy, Then Withdrawn -- Medium¶

South Africa's Draft National AI Policy was withdrawn after the government acknowledged AI was used in drafting it. A reply captured the frustration: "Organisations must start teaching their employees the ethical use of AI. Otherwise, we are in for a big one." The incident illustrates the gap between AI governance ambitions and basic AI hygiene within the institutions writing the rules.

AI-Generated Content Erodes Human Verification Capacity -- Medium¶

@awgaffney warned (6 likes, 388 views) that the safety-check of human verification degrades as AI use rises: "if physician knowledge-based dwindles... risk of error increases, not decreases." @TRTRE62 observed the same pattern in hiring (6 likes, 1,816 views): "A 2026 resume is shaped for LLM screening, ranking, summarization, and justification. The evaluation regime changed." Both point to a feedback loop where AI optimization degrades the human capacity to catch AI errors.

Enterprise AI Pricing Investments Avoid Where Control Changes -- Medium¶

@sijlalhussain analyzed (11 likes, 245 views) a McKinsey finding: the highest-impact areas in enterprise pricing (deal configuration, discount governance, renewals) do not align with where organizations are investing. "The real challenge is not identifying high-impact use cases. It is investing where authority must actually be handed over."

3. What People Wish Existed¶

Unified Benchmark Infrastructure for Video World Models¶

@RituWithAI identified the gap: "Every AI video world model has been grading its own homework." Sora, Genie, YUME, HY-World, and Matrix-Game each use private benchmarks, private test scenes, and private metrics. WorldMark addresses this with standardized evaluation (500 cases, unified controls, public arena), but the broader need persists across other AI subfields where self-evaluation is the norm. Urgency: High. (source)

AI-Literate Governance Frameworks¶

The South Africa incident (AI-drafted AI policy) and Frank Luntz's intervention on AI-amplified misinformation both point to the same gap: institutions lack the basic AI literacy to use, evaluate, or regulate AI systems. The need is not more policy papers but operational AI competence within government bodies. Urgency: High. (source, source)

Trajectory-Level Safety for Healthcare AI Workflows¶

@Symbioza2025 argued in multiple posts (3 likes, 113 views) that healthcare AI needs external trajectory observability -- "not replacing clinicians, not controlling the model, but monitoring whether the workflow remains stable across: clinical input -> AI reasoning -> human correction -> patient-facing output -> follow-up use." The need extends April 25's exception management thesis to the observability layer. Urgency: High. (source)

Enterprise AI Evaluation Stack Beyond Hallucination Detection¶

@AlexWingfield_ pointed out that enterprise LLMs fail in mundane ways -- mangling JSON, dodging tools, over-apologizing -- that hallucination-focused evals miss entirely. The need: a full evaluation stack covering instruction compliance, tool use, format adherence, and behavioral consistency. Urgency: Medium. (source)

4. Tools and Methods in Use¶

| Tool | Category | Sentiment | Strengths | Limitations |

|---|---|---|---|---|

| ProofGrid | LLM reasoning benchmark | (+) | Machine-checkable proofs, 15 tasks, 3000+ problems; Epistemic Stability Index (ESI); tests 24 models | Focuses on formal reasoning; does not cover real-world task performance |

| WorldMark | Video world model benchmark | (+) | Unified WASD controls, 500 evaluation cases, 3 difficulty tiers; live World Model Arena at warena.ai | New (April 24 preprint); adoption by model teams unclear |

| HealthBench Professional | Medical AI eval | (+) | Physician-curated rubrics, specialty-specific grading, safety guardrails; on Hugging Face | May break down on out-of-distribution patient demographics |

| SPLIT | LLM steering method | (+) | Unifies fine-tuning, LoRA, activation edits; separates preference from utility on log-odds scale | Academic; production integration unclear |

| PSAISuite | Multi-provider AI interface | (+) | PowerShell module, 15+ providers, built-in benchmark suite, provider-neutral | PowerShell ecosystem limits reach to Windows/.NET users |

| Voice-Pro | Speech/translation/dubbing | (+) | Whisper + F5-TTS + CosyVoice; YouTube processing; zero-shot voice cloning; open source | Complex setup; GPU required for real-time performance |

| Open Generative AI Studio | Multi-model creative suite | (+) | 200+ models; text-to-image/video, lip sync; self-hostable; free | Self-hosting requires significant compute |

| Humanizer (Claude Code skill) | AI writing deobfuscation | (+) | 29 AI writing patterns detected; voice calibration; based on Wikipedia's "Signs of AI writing" | Effectiveness depends on source text quality |

| FutureAGI | AI lifecycle platform | (+) | End-to-end: simulation, evaluation, protection, monitoring, observability, gateway, optimization | Just open-sourced; production adoption unclear |

The evaluation layer continues as the most active tool category. ProofGrid, WorldMark, and HealthBench each target a different evaluation dimension (reasoning, video, medical), while PSAISuite addresses the growing need for provider-neutral AI orchestration.

5. What People Are Building¶

| Project | Who built it | What it does | Problem it solves | Stack | Stage | Links |

|---|---|---|---|---|---|---|

| WorldMark + World Model Arena | Alaya Studio (@RituWithAI) | Unified benchmark for interactive video world models with live public arena | Every video world model grades itself on private benchmarks | Standardized WASD controls, 500 eval cases, warena.ai | Released | post |

| ProofGrid | Arkoudas & Batzoglou (System 2 Labs, Seer) | Benchmark testing LLM reasoning via machine-checkable proofs | Benchmarks test final answers, not reasoning paths | 15 tasks, 3000+ problems, ESI metric | Published | post |

| OpenHome DevKit | @JesseRank / OpenHome | Open-source AI hardware device with local AI, spatial models, PCB design | AI devices are closed, cloud-dependent, and expensive to prototype | Local AI, custom spatial models, firmware, iOS app, cloud SDK | Alpha (DevKit) | post |

| AIRecon / RedTeam-Agent | @Dinosn / ktol1 | Autonomous cybersecurity agent for penetration testing and red team workflows | Manual tool-by-tool security assessment is slow | Ollama LLM + Kali Linux Docker + 15 tools | Open source | post |

| ULTRASHIP | @Dinosn | Claude Code plugin: 39 skills, 33 tools, 11 agents for ship-ready workflows | Developers lack integrated planning/review/security in coding agents | Claude Code plugin, MIT license, 180 tests | Shipped | post |

| PSAISuite | @dfinke | PowerShell module for provider-neutral AI integration across 15+ providers | App logic coupled to single AI provider | PowerShell, benchmark suite | Shipped | post |

| ASA (trajectory observability) | @Symbioza2025 | External trajectory observability for long-horizon AI systems in healthcare | No monitoring for AI workflow stability over time | Not disclosed | Pre-launch | post |

| Humanizer | blader (via @oliviscusAI) | Claude Code skill removing AI writing patterns; 29 pattern detection | AI-generated text is detectable and sounds artificial | Claude Code skill, Wikipedia-based patterns | Shipped | post |

The evaluation cluster (ProofGrid, WorldMark, HealthBench) continues April 25's pattern of multiple teams building evaluation tools for different AI quality dimensions. The cybersecurity cluster (AIRecon, RedTeam-Agent, ULTRASHIP) is a new pattern -- developers building autonomous security agents that combine local LLMs with traditional penetration testing toolchains.

6. New and Notable¶

Defunct Startup Data Liquidation Now Has a Marketplace¶

[++] @Forbes reported in detail (9 likes, 8,347 views) that SimpleClosure is launching Asset Hub, a marketplace for defunct startup data. cielo24 received "hundreds of thousands of dollars" for 13 years of Slack archives, Jira tickets, and email threads. Former OpenAI chief scientist Ilya Sutskever noted AI labs exhausted public internet data by late 2024. The article frames operational data from real workplace interactions as "fossil fuel for AI agents" -- essential for training models that can actually do work, not just answer questions.

South Africa Withdraws AI-Drafted AI Policy¶

[++] South Africa's Communications Minister withdrew the Draft National AI Policy after acknowledging AI was used to draft it. The minister promised "consequence management." This is the first known case of a national government publicly withdrawing an AI policy specifically because AI was used in its creation -- a recursive governance failure that illustrates the gap between AI ambition and AI competence within institutions.

US State Department Orders Global Push Against Chinese AI IP Theft¶

[+] @NEWSMAX reported (25 likes, 3,448 views) that the State Department ordered a global push to highlight "widespread efforts by Chinese companies to steal intellectual property from U.S. artificial intelligence labs." This escalates the geopolitical AI competition narrative from technology controls to active diplomatic campaigns.

Qwen 3.6-27B Ships, Alibaba Pivots Flagship to Closed Weights¶

[+] @witcheer documented (2 likes, 220 views) that Qwen 3.6-27B shipped with 77.2 on SWE-bench verified (beating the old 397B MoE) while running on 24GB VRAM. Meanwhile, Alibaba quietly shipped Qwen 3.6-max-preview as closed-weights on April 20 -- a "flagship-only pivot" that keeps the open-weight tier at Apache 2.0 but reserves the best model for proprietary access.

7. Where the Opportunities Are¶

[+++] AI evaluation infrastructure by domain -- Four distinct evaluation tools highlighted in one day (ProofGrid for reasoning, WorldMark for video, HealthBench for medical, enterprise eval for instruction compliance). Each domain needs its own benchmark because generic evaluation misses domain-specific failure modes. The organization that builds the cross-domain evaluation orchestration layer -- letting enterprises run domain-specific evals through a single interface -- captures a structural need. (source, source)

[+++] Energy and grid infrastructure as the binding AI constraint -- The White House declared grid infrastructure essential to national defense. Transformer lead times run 2-4 years. CoWoS packaging is structurally oversubscribed. The conversation shifted from "compute-constrained" to "energy-constrained" -- and policy is now forcing the cycle forward via Defense Production Act mechanisms. Companies solving power generation, grid modernization, and advanced packaging for AI data centers are addressing the next binding constraint. (source, source)

[++] Local-first AI hardware with open-source developer tooling -- OpenHome's 1,000+ contributors and free DevKit for founders signal market demand for AI devices that run locally without cloud dependency. The AI hardware category has been dominated by closed products; an open-source alternative with real community traction could become the "Raspberry Pi of AI." (source)

[++] Autonomous cybersecurity agents -- AIRecon, RedTeam-Agent, and ULTRASHIP all combine local LLMs with traditional security toolchains. The pattern: LLM orchestration of existing penetration testing tools, running entirely locally with no API keys or cloud dependency. Security teams are chronically understaffed; autonomous agents that can execute multi-step assessment workflows address a labor market constraint, not just a productivity one. (source, source)

[+] AI-native martech for Brand Voice -- the unsolved category -- The State of Martech 2026 data shows Brand Voice & Style Enforcement as the only use case in the Build-Leaning quadrant with an even split across SaaS, AI-native, and homegrown solutions. "Nobody has solved it yet." B2C marketers refuse to outsource when the AI output IS the brand experience. The startup that cracks consistent brand voice across AI-generated content captures a category with high build pain and no dominant vendor. (source)

8. Takeaways¶

-

Agentic AI is repricing the entire semiconductor supply chain, with first-person enterprise budget confirmation. A Head of AI at a large corporation reported exponential budget growth for agentic AI this year. The SOX Index rose 18 straight days (+49%), and the supply chain analysis extends from inference silicon through packaging (CoWoS structurally oversubscribed) to energy (grid declared essential to national defense). (source, source)

-

AI evaluation is fragmenting by domain, not consolidating. ProofGrid (reasoning proofs), WorldMark (video world models), HealthBench (medical rubrics), and enterprise eval stacks each address different failure modes. The cross-domain orchestration layer -- running domain-specific evals through a single interface -- does not yet exist. (source, source)

-

Chinese open-source AI labs are shipping faster through mutual cross-pollination. Kimi uses DeepSeek's V3 architecture; DeepSeek uses Kimi's Muon optimizer. Qwen 3.6-27B beats the old 397B MoE on 24GB VRAM. But Alibaba's closed-weights pivot for the flagship model signals the open-source strategy has limits at the frontier. (source, source)

-

Enterprise AI adoption is producing counterintuitive metrics: AI users create more work, not less. Atlassian reports AI coding tool users generate 5% more Jira tasks and expand seats faster. JPMorgan runs 600 AI use cases. The pattern is AI amplifying workflow volume, not reducing headcount -- which contradicts the displacement narrative but confirms the infrastructure demand thesis. (source, source)

-

AI governance suffered its most embarrassing incident yet: a government withdrew AI policy because AI wrote it. South Africa's Communications Minister pulled the Draft National AI Policy and promised consequences. This is not a hypothetical risk -- it is a live demonstration that institutions writing AI rules lack the competence to use AI responsibly themselves. (source)

-

The defunct startup data market is industrializing. Forbes reports SimpleClosure launching Asset Hub, a dedicated marketplace for liquidated startup operational data. AI labs exhausted public internet data by late 2024 and now treat Slack archives, Jira tickets, and email threads as premium training material for agentic AI. (source)

-

Open-source AI hardware is emerging as a real category with community traction. OpenHome's 1,000+ contributors, free founder DevKit, and local-first architecture represent the first serious open-source push into AI devices. The AI hardware space has been closed and expensive; this could lower barriers the way Arduino and Raspberry Pi did for embedded computing. (source)

-

Autonomous cybersecurity agents are clustering into a pattern: LLM orchestration of traditional security toolchains, running locally. AIRecon, RedTeam-Agent, and ULTRASHIP all combine local LLMs with penetration testing tools. The security labor shortage makes this category structurally attractive, and the local-only architecture addresses enterprise security teams' reluctance to send vulnerability data to cloud APIs. (source, source)