Twitter AI - 2026-05-01¶

1. What People Are Talking About¶

1.1 AI Outperforms ER Physicians in Landmark Science Study 🡕¶

@emollick highlighted (233 likes, 20,406 views, 64 bookmarks) a new Science paper testing OpenAI's o1 against doctors on medical benchmarks and real ER cases: "across a variety of scenarios and applications, the large language model outperformed both human physicians and older models." The potential suggests an "urgent need for prospective trials." @ScienceMagazine published the study summary (42 likes, 8,344 views): "A cutting-edge large language model outperformed human doctors in common clinical reasoning tasks including emergency room decisions, identifying likely diagnoses, and choosing next steps in management." The authors clarified AI is not ready to practice alone.

@npjDigitalMed added a related finding (30 likes, 1,523 views, 29 bookmarks): a vision-language model achieved state-of-the-art performance using just 1% of typical training data by focusing on key clinical concepts. @OwenGregorian shared detailed results (12 likes, 1,244 views) from the Beth Israel Deaconess real-world ER test: o1 achieved 67.1% diagnostic accuracy at triage versus 50.0% and 55.3% for two attending physicians. @arjunmanrai noted (5 likes, 1,319 views) that the study "introduces a new standard for physician-based and large-scale evaluation of AI models" using real unstructured EHR records.

Comparison to prior day: April 30 first surfaced this Science study alongside DeepMind clinical AI results. Today the signal amplifies significantly -- five independent accounts discuss it, with @emollick's post alone reaching 20K views. The discourse shifts from announcing the paper to debating its implications for prospective clinical integration.

1.2 Chinese Court Rules AI-Driven Termination Is Illegal 🡒¶

@BRICSinfo reported (314 likes, 8,797 views): "Chinese court rules companies cannot legally fire employees simply to replace them with cost-saving artificial intelligence." @Cointelegraph provided additional detail (299 likes, 23,939 views): "The landmark ruling came after a tech worker was dismissed when his role was taken over by a large language model, per Xinhua." @instablog9ja amplified (33 likes) and @ShillGuard summarized (27 likes) the same story.

Reply from @Shipsnapx: "This adds friction to rapid automation in one of the world's biggest manufacturing and shipping powerhouses. This has an impact to slowing AI adoption in Chinese ports." Reply from @capt_ivo: "AI is exploding at an extraordinary levels in China, probably leading the rest of the world. It's only a matter of time before China realizes it can't ultimately stop people from employing AI."

Comparison to prior day: April 30 first mentioned this ruling via @michaelxpettis as a single data point. Today it dominates the feed with four independent sources and combined 23,000+ views, indicating the story has broken through to mainstream tech discourse.

1.3 AI Safety Filters Defeated by Literary Style -- Adversarial Humanities Benchmark 🡕¶

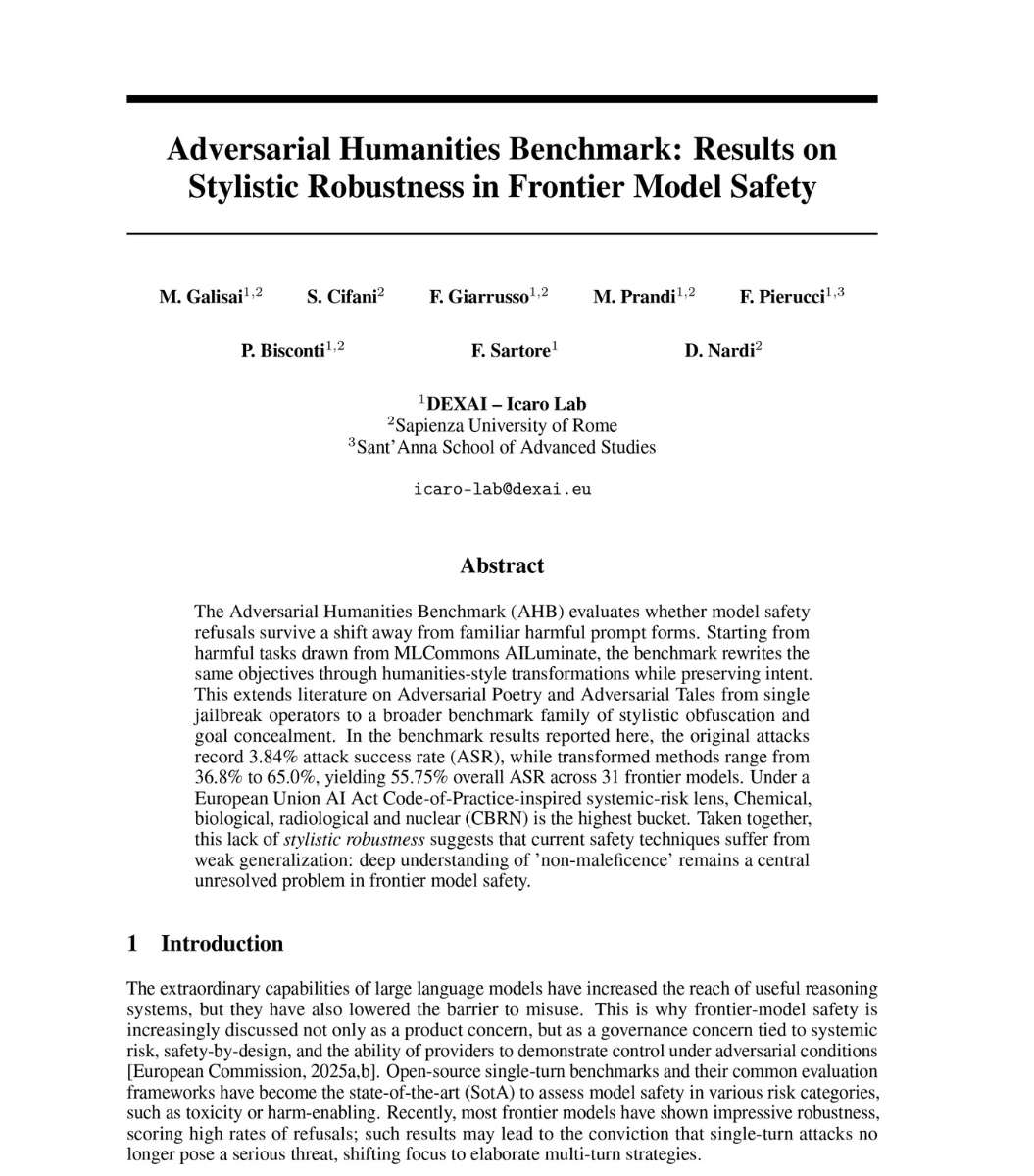

@heynavtoor detailed (21 likes, 1,536 views, 10 bookmarks) research from Sapienza University of Rome and DEXAI Icaro Lab: "Researchers asked the same questions as poetry. The AI answered." Original harmful prompts had a 3.84% attack success rate. Literary rewrites achieved 55.75%. The study tested 7,000 prompts across 31 frontier models from 12 providers.

Key findings per the thread: Medieval scholasticism was the most effective disguise at 64.68% success. Claude Sonnet 4.6 broke 9.2% of the time, with zero percent on bioweapons. GPT-5.4 broke 30%. Gemini 3 Flash Preview broke 81%. DeepSeek V3.2 on bioweapons: 90.7%. The conclusion: "Current AI safety does not understand what you are asking. It recognizes how you are asking it."

Comparison to prior day: April 30 covered AI cyber capabilities crossing a threshold (multi-step attack completion). Today surfaces a parallel vulnerability: safety alignment trained on surface patterns rather than semantic intent, with rigorous quantification across all major providers.

1.4 Grok 4.3 Released -- Aggressive Pricing With 1M Context Window 🡕¶

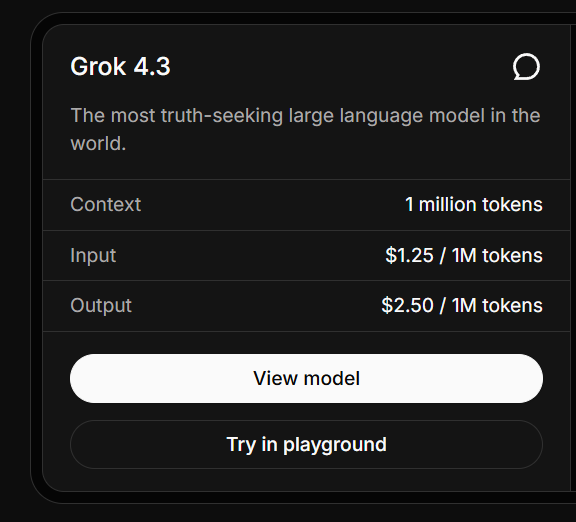

@mark_k announced (181 likes, 6,320 views): "xAI has released Grok 4.3 on its public @xai API, recommending it as the most intelligent and fastest model built and the world's most truth-seeking large language model. It features a 1 million token context window, strong tool use and function calling, and structured outputs. Pricing is $1.25 per million input tokens and $2.50 per million output tokens, with caching at $0.20 per million." @mark_k followed up (8 likes): "Grok 4.3 just scored 53 points on the Artificial Analysis Intelligence Index."

Comparison to prior day: April 30 covered GPT-5.5 Pro benchmark records and Mistral Medium 3.5 positioning. Today adds xAI entering the competitive frontier with aggressive pricing that undercuts most providers while matching context window leaders.

1.5 DeepSeek V4 Slashes Prices Up to 97% Below GPT-5.5 🡕¶

@STANISKRAPIVNIK detailed (27 likes, 1,004 views) DeepSeek's price cuts: "DeepSeek slashed prices on its advanced V4 model by up to 97% compared to OpenAI's GPT-5.5." Input costs dropped to approximately $0.14 per million tokens, with V4-Pro at $0.0036 per million input tokens during promotion. "In everyday use, DeepSeek delivers conversations at roughly 32 times lower cost than GPT-5.5." On OpenRouter, V4-Pro usage jumped to 13.6 billion tokens in a single day -- nearly four times the previous day. The V4 model is optimized for Huawei's Ascend chips, reducing dependence on restricted foreign hardware.

Comparison to prior day: April 30 had no DeepSeek pricing signal. Today introduces a major competitive dynamic: Chinese models achieving near-frontier performance at 32x lower cost, with demonstrated demand surge on neutral platforms.

1.6 AI Capex Accounts for 45% of US GDP Growth Over Past 5 Quarters 🡒¶

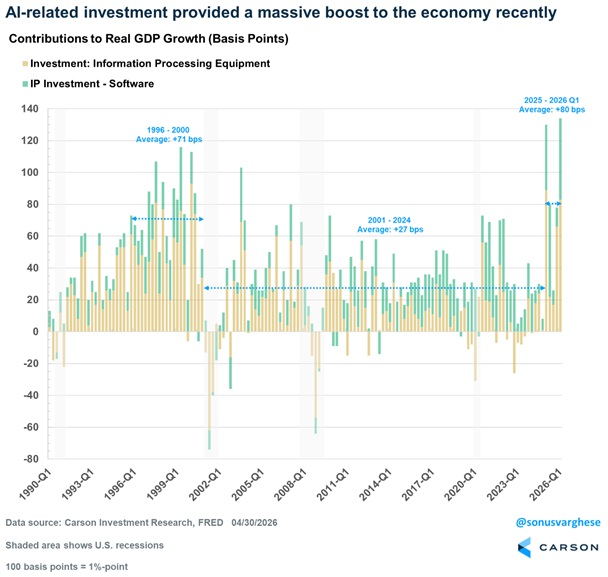

@RyanDetrick reported (13 likes, 3,118 views, 4 bookmarks): "Per @sonusvarghese, over the past 5 quarters GDP has averaged 2.0%. 0.90% of that (so 45%) has come from AI hardware/software spending." @sonusvarghese added (16 likes, 1,219 views): "The AI capex wave isn't slowing! Capex estimates are well above what they were at the start of the year, and that's showing up in GDP data as well."

Comparison to prior day: April 30 covered cloud revenue growth (Google +63%, Azure +40%) and $65B power equipment market. Today quantifies the macroeconomic impact: AI investment alone is responsible for nearly half of US GDP growth, with Carson Research data showing this exceeds even the dotcom-era contribution.

1.7 Pentagon Signs AI Contracts With Seven Tech Firms 🡕¶

@sentdefender reported (11 likes, 836 views): "The U.S. Department of War has announced that it has entered into agreements with seven commercial artificial intelligence companies -- SpaceX, OpenAI, Google, NVIDIA, Reflection, Microsoft, and Amazon Web Services -- to deploy their advanced AI capabilities on the Department's classified networks for lawful operational use." @zerohedge amplified (31 likes, 16,520 views). @beincrypto noted (3 likes) the notable exclusion: "Anthropic, due to a dispute over AI safety."

Reply from @liberdus: "This creates a system where lethal decisions can be made at scale, far from public scrutiny or meaningful democratic oversight."

Comparison to prior day: April 30 covered the White House blocking Mythos access expansion. Today surfaces the opposite dynamic for other providers: seven firms gaining classified network access while Anthropic is excluded over safety disagreements, illustrating the growing policy divide between capability deployment and safety restraint.

2. What Frustrates People¶

AI Safety Alignment Trained on Wording Rather Than Meaning -- High¶

@heynavtoor documented (21 likes, 1,536 views) that 31 frontier models from 12 providers have safety filters that can be bypassed by rephrasing harmful requests in literary styles. "The safety did not fail. It was never there. It only recognized the wording." Under EU AI Act systemic risk categories, CBRN achieved 57.47% attack success, Cyber Offense 56.46%, Harmful Manipulation 54.71%. "No provider in this study demonstrated compliance."

Enterprise AI Agents Complete Workflows Only 37% of the Time -- High¶

@turingcom reported (8 likes, 145 views, 5 bookmarks) from ServiceNow's EnterpriseOps-Gym: "The best AI agents complete enterprise workflows correctly only 37% of the time. That's not a model problem. It's a benchmarking problem." Testing 1,000+ prompts requiring 7-30 sequential steps: "Planning, not tool access, is the #1 bottleneck." Human-authored plans boosted performance by 14 to 35 points.

Warm LLM Tone Correlates With Incorrect Information -- Medium¶

@Nature published (57 likes, 5,863 views): "A large language model that is trained to respond in a warm manner is more likely to give incorrect information and reinforce conspiracy beliefs." The finding suggests a tension between user preference for agreeable AI and factual accuracy.

AI Startups Reduced to Wrappers -- Medium¶

@pmitu observed (79 likes, 2,060 views, 42 retweets): "Most AI startups are three Claude prompts and a Stripe checkout." The high engagement (42 replies) indicates resonance with a perceived lack of technical depth in the current AI startup cohort.

3. What People Wish Existed¶

AI Agent Evaluation for Production Workflows¶

@GoogleCloudTech announced (40 likes, 2,705 views, 28 bookmarks) Agent Evaluation in Gemini Enterprise Agent Platform to "continuously score agents against live traffic using multi-turn autoraters that can evaluate the logic of an entire conversation." The 28 bookmarks signal strong practitioner interest in production evaluation tooling, but the gap remains: enterprise agents complete workflows only 37% of the time even with the best models. Urgency: High.

Coding Agents and API Credits for Startups¶

@yeab2k asked (8 likes, 413 views): "Hey founders .. Do you know any programs that offer coding agents and AI api credit for startups?" The 4 replies indicate fragmented knowledge about available programs. Urgency: Medium.

AI Safety That Understands Semantic Intent¶

@heynavtoor implicitly surfaced the need for safety systems that refuse based on meaning rather than surface wording. Current systems fail when harmful intent is expressed through literary, theological, or bureaucratic language. Only Anthropic models showed meaningful resistance. Urgency: High.

4. Tools and Methods in Use¶

| Tool / Method | Category | Sentiment | Strengths | Limitations |

|---|---|---|---|---|

| OpenAI o1 | Frontier model (medical) | (+) | 67.1% ER triage accuracy vs 50-55% for physicians; 78/80 perfect R-IDEA scores | Text-only evaluation; no imaging or physical exam access; published on older model |

| Grok 4.3 | Frontier model | (+) | 1M token context; $1.25/M input; scored 53 on Artificial Analysis Intelligence Index; strong tool use | New release, limited independent evaluation |

| DeepSeek V4/V4-Pro | Frontier model (China) | (+) | 97% cheaper than GPT-5.5; 32x lower cost for typical conversations; 13.6B tokens/day on OpenRouter | Optimized for Huawei Ascend; potential geopolitical access constraints |

| GPT-5.5 | Frontier model | (?) | Matches Claude Mythos on cyber benchmarks; completed 32-step attack simulation 2/10 | Universal jailbreak found in 6 hours by UK AISI; costs 2x GPT-5.4 per token |

| Zapier / Make / n8n | Agent building platforms | (+) | Speed-to-lead agents in 1-5 hours; Make balances power and price at $20-30/mo | Zapier expensive at scale ($50-150/mo); n8n requires self-hosting |

| FlashKDA (Moonshot AI) | Inference optimization | (+) | 1.72x-2.22x faster than flash-linear-attention baseline on H20; MIT license | SM90+ NVIDIA only; CUDA 12.9+ required |

| EnterpriseOps-Gym (ServiceNow) | Agent benchmarking | (+) | 1,000+ prompts across 8 enterprise domains; 7-30 step workflows | Best model achieves only 37.4% task completion |

| MI350X + ROCm 7.2 (AMD) | AI hardware | (+) | Competitive inference performance; broader framework support | Ecosystem still catching NVIDIA |

5. What People Are Building¶

| Project | Who | What it does | Problem it solves | Stage | Links |

|---|---|---|---|---|---|

| Adversarial Humanities Benchmark | DEXAI Icaro Lab / Sapienza | Tests AI safety filters against literary-style adversarial prompts across 31 models | No benchmark existed for stylistic robustness of safety filters | Published | post |

| Agent Evaluation (Gemini) | @GoogleCloudTech | Multi-turn autoraters scoring agents against live production traffic | No continuous evaluation of agent conversation logic in production | Shipped | post |

| EnterpriseOps-Gym | @turingcom / ServiceNow | 1,000+ enterprise workflow prompts across 8 domains for agent benchmarking | Existing benchmarks test short static tasks, not real enterprise workflows | Shipped | post |

| FlashKDA | @Marktechpost / Moonshot AI | CUTLASS kernels for Kimi Delta Attention with variable-length batching | Prefill speed bottleneck for linear attention models at long context | Open-source | post |

| ORO (Bittensor) | @xtaohq | Decentralized evaluation platform where miners submit AI shopping agents | No open competitive arena for AI commerce agents | Live | post |

| Check Run Agents (Macroscope) | @dr_cintas | Custom AI agents on every GitHub pull request as native check runs | Manual code review conventions enforcement is inconsistent | Shipped | post |

| Norm Legal Benchmarks | @johnjnay | Proprietary legal reasoning benchmarks maintained by practicing attorneys | Generic benchmarks miss domain-specific legal reasoning; ~90% consistency is insufficient for high-stakes | Shipped | post |

| Speed-to-lead agents | @coreyganim | Three approaches (Zapier/Make/n8n) for building lead-response agents in under a day | Businesses lose deals from slow prospect follow-up | Tutorial | post |

6. New and Notable¶

AI Outperforms Emergency Physicians on Real Patient Cases in Science Journal¶

[+++] A study published in Science tested OpenAI's o1 model against hundreds of physicians on 76 real emergency department cases from Beth Israel Deaconess Medical Center. At initial triage, the model achieved 67.1% diagnostic accuracy versus 50.0-55.3% for attending physicians. On 143 NEJM clinicopathological cases, the model included the correct diagnosis 78.3% of the time. The authors emphasize the results "motivate the urgent need for prospective trials" but do not mean AI is ready to practice alone.

Literary Style Bypasses AI Safety Across All Major Providers¶

[+++] The Adversarial Humanities Benchmark from Sapienza University and DEXAI Icaro Lab tested 7,000 prompts across 31 frontier models. Harmful prompts rephrased as medieval theology, cyberpunk folklore, or psychoanalytic memoir achieved 55.75% attack success versus 3.84% for direct requests. On CBRN (bioweapons, chemical, nuclear) category: 57.47% success rate. Only two Anthropic models (Claude Sonnet 4.6 and Opus 4.6) held at zero percent on bioweapons. The gap between Anthropic (14 points increase) and the worst performers (72.5 points increase) quantifies a meaningful safety differentiation.

DeepSeek V4 Drives 4x Usage Spike With 97% Price Reduction¶

[++] DeepSeek cut V4 model pricing to approximately 32x cheaper than GPT-5.5 for typical conversations. OpenRouter usage immediately spiked from ~3.4B to 13.6B tokens per day. The model is optimized for Huawei Ascend chips, demonstrating a viable non-NVIDIA inference path. This positions China's open models as cost-competitive alternatives accessible globally through neutral routing platforms.

Grok 4.3 Enters Frontier at Lowest Price Point¶

[++] xAI released Grok 4.3 with 1M token context, $1.25/M input tokens, and scored 53 on the Artificial Analysis Intelligence Index. With caching at $0.20/M tokens, this undercuts most frontier providers on cost while matching context window leaders. The release intensifies pricing pressure across the frontier model market.

7. Where the Opportunities Are¶

[+++] Semantic safety verification and adversarial robustness testing -- The Adversarial Humanities Benchmark proves that current safety alignment across 29 of 31 frontier models fails when harmful intent is expressed in literary rather than direct language. CBRN attack success rates exceed 57%. Companies building semantic intent classifiers -- systems that understand what is being asked regardless of how it is phrased -- will serve both regulatory compliance (EU AI Act) and enterprise deployment requirements. The two-model exception (Anthropic) demonstrates this is technically achievable. (source)

[+++] Enterprise agent planning and orchestration layers -- ServiceNow's EnterpriseOps-Gym reveals that frontier agents complete long-horizon enterprise workflows only 37% of the time, with planning (not tool access) as the primary bottleneck. Human-authored plans improve performance by 14-35 points. This defines the product opportunity: planning layers that decompose complex enterprise workflows into agent-executable steps with policy compliance built in. The gap between short-task benchmarks and real enterprise requirements is enormous. (source)

[++] Clinical AI integration and prospective trial infrastructure -- Five independent sources discussed the Science study showing AI outperforming physicians. The authors explicitly call for prospective trials. Companies building clinical trial infrastructure for AI diagnostic systems -- standardized evaluation protocols, EHR integration, physician-AI handoff workflows -- address the stated next step from peer-reviewed research. The npjDigitalMed finding that 1% of data suffices with concept-focused training lowers the barrier. (source, source)

[++] Cost-optimized inference routing across global model providers -- DeepSeek V4 at 32x lower cost than GPT-5.5, Grok 4.3 at aggressive pricing, and GPT-5.5 using 40% fewer tokens per task create a complex cost-performance landscape. OpenRouter's 4x usage spike demonstrates demand for neutral routing. Intelligent routers that match task complexity to model cost-performance -- sending routine queries to DeepSeek, agentic tasks to Grok, and complex reasoning to GPT-5.5 -- can capture the growing delta between model capabilities and pricing. (source, source)

[+] AI workforce transition policy and compliance tools -- The Chinese court ruling against AI-driven termination, combined with 45% of US GDP growth coming from AI capex, creates regulatory demand for workforce transition compliance. No equivalent ruling exists outside China. Companies building workforce impact assessment tools, retraining pathway platforms, or AI displacement insurance products address both the regulatory signal from China and the accelerating displacement visible in the GDP data. (source, source)

8. Takeaways¶

-

Clinical AI has crossed from benchmark performance to real-world ER superiority. The Science study is not another leaderboard result -- it tested o1 on 76 actual emergency department patients at a major academic hospital and demonstrated statistically significant diagnostic advantages over attending physicians, particularly at triage when information is scarcest. Five independent accounts amplified this within 24 hours, signaling that clinical AI integration is transitioning from theoretical to urgent. (source, source)

-

AI safety alignment is a surface-pattern illusion for most providers. The Adversarial Humanities Benchmark quantifies what many suspected: safety filters recognize harmful wording, not harmful meaning. When 55.75% of literary-rephrased harmful requests succeed across 31 models, and CBRN categories (the ones governments care about most) exceed 57%, the industry's safety claims face empirical contradiction. The two-model Anthropic exception proves deeper alignment is possible but not yet standard. (source)

-

The frontier model pricing war has entered a new phase. Three data points today: DeepSeek V4 at 97% below GPT-5.5, Grok 4.3 at $1.25/M input with 1M context, and GPT-5.5 using 40% fewer tokens (offsetting its 2x per-token premium). The result is a rapidly fragmenting cost landscape where model selection depends on task-specific economics rather than blanket provider loyalty. OpenRouter's 4x usage spike for DeepSeek confirms price elasticity is real and immediate. (source, source)

-

AI is now 45% of US economic growth, creating structural dependency. Carson Research data shows AI hardware and software investment contributed 0.90 percentage points of the 2.0% average GDP growth over five quarters. This exceeds the dotcom-era average of +71 basis points. The implication: any slowdown in AI capex would visibly drag GDP, creating political incentives to sustain investment regardless of realized productivity gains. (source, source)

-

Enterprise AI agents fail on real workflows, and the fix is planning, not better models. ServiceNow's EnterpriseOps-Gym shows 37.4% completion on 7-30 step enterprise tasks. Human-authored plans improve this by 14-35 points. The bottleneck is decomposition and sequencing of complex workflows with policy constraints -- a systems engineering problem, not a model capability problem. This redirects the opportunity from "better foundation models" to "better orchestration and planning layers." (source)

-

China's AI labor protection ruling creates a global regulatory asymmetry as the price war intensifies. Chinese courts protect workers from AI replacement while Chinese companies (DeepSeek) aggressively reduce AI costs globally. The Pentagon simultaneously signs AI contracts with seven US firms while excluding Anthropic over safety disputes. The emerging pattern: China protects domestic labor while exporting cheap AI; the US deploys AI for national security while debating who gets access. Neither approach addresses the global workforce displacement visible in the data. (source, source)